Claude Opus 4.7

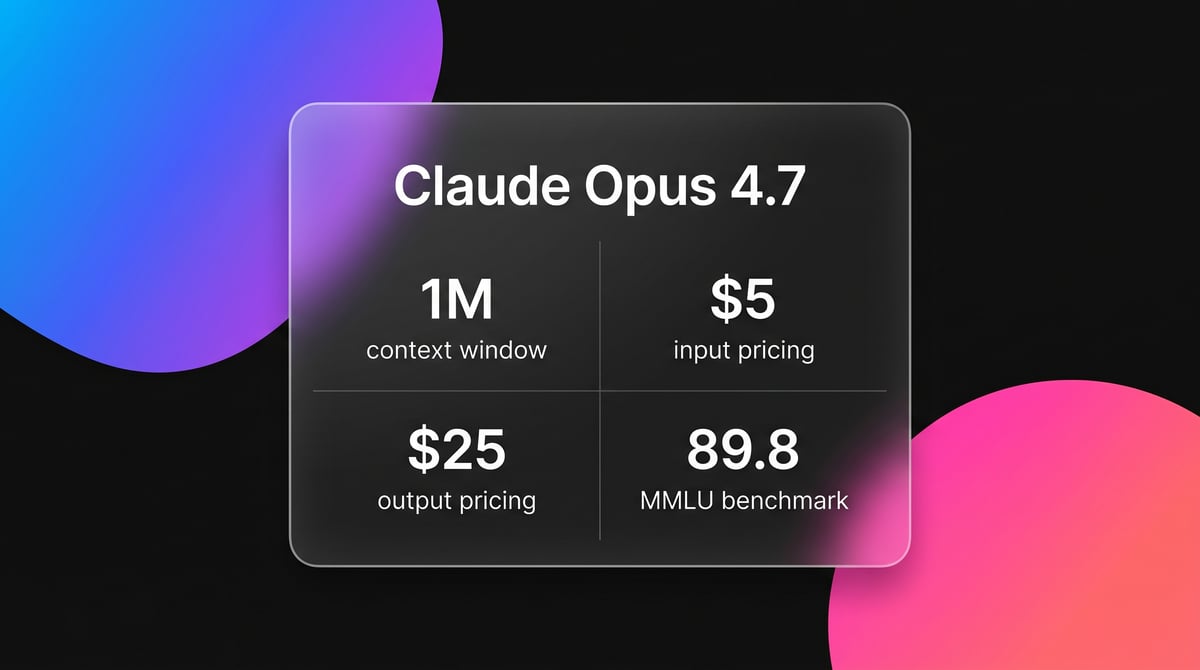

Claude Opus 4.7 is Anthropic's flagship model, with a 1-million-token context window, adaptive reasoning, and 3.3x higher vision resolution for enterprise-scale agents.

About Claude Opus 4.7

Learn about Claude Opus 4.7's capabilities, features, and how it can help you achieve better results.

Model Overview

Claude Opus 4.7 is the flagship model in the Claude 4 architecture series. Its Adaptive Thinking framework scales reasoning effort to the perceived difficulty of a task, replacing fixed reasoning budgets with effort that varies per request. Developers control reasoning depth through an effort parameter in the API, trading latency against logical rigor. The model is tuned for high-stakes enterprise workflows and autonomous agentic loops.
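As a rough illustration of the latency versus rigor trade-off, the sketch below maps a task profile to an effort level. Only `xhigh` is named on this page; the other `Effort` values, the `TaskProfile` shape, and the `pickEffort` heuristic are assumptions made for illustration and are not taken from Anthropic's API. Check the official documentation for the actual parameter name and accepted values.

```typescript
// Hypothetical effort levels; only "xhigh" is named on this page.
type Effort = "low" | "medium" | "high" | "xhigh";

// Hypothetical description of a task, used only by this sketch.
interface TaskProfile {
  agentic: boolean;          // runs as a multi-step agent loop
  filesTouched: number;      // rough size of the change
  latencySensitive: boolean; // caller cares most about response time
}

// Deeper reasoning for agentic or wide-reaching work,
// shallower reasoning when response time matters most.
function pickEffort(task: TaskProfile): Effort {
  if (task.agentic) return "xhigh";
  if (task.latencySensitive) return task.filesTouched > 1 ? "medium" : "low";
  return task.filesTouched > 5 ? "high" : "medium";
}

const quickQuestion: TaskProfile = { agentic: false, filesTouched: 1, latencySensitive: true };
const repoRefactor: TaskProfile = { agentic: true, filesTouched: 40, latencySensitive: false };
console.log(pickEffort(quickQuestion), pickEffort(repoRefactor)); // low xhigh
```

The thresholds here are arbitrary. In practice they would be tuned against measured latency and output quality for a given workload.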

Context and Multimodal Capabilities

The model provides a 1-million-token context window without a long-context pricing premium. Its 128,000-token output limit is large enough to generate long technical documents or complete code repositories in one response. Vision resolution is 3.3x higher than in previous iterations, which supports fine-grained UI understanding and 1:1 coordinate mapping in images up to 2576 pixels. These improvements make it a reliable choice for document analysis and visual auditing tasks.
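The two limits above can be turned into a simple pre-flight check. The sketch below uses the figures quoted on this page and assumes that input and output tokens share the same context window. That assumption, along with the `planContext` helper and the `ContextPlan` type, is illustrative only.

```typescript
// Figures quoted on this page.
const CONTEXT_WINDOW_TOKENS = 1_000_000;
const MAX_OUTPUT_TOKENS = 128_000;

interface ContextPlan {
  fits: boolean;        // true when the desired output can be produced in full
  outputBudget: number; // tokens available for the response
}

// Assumption: prompt and response tokens are counted against one shared window.
function planContext(inputTokens: number, desiredOutput: number): ContextPlan {
  const remaining = Math.max(0, CONTEXT_WINDOW_TOKENS - inputTokens);
  const outputBudget = Math.min(desiredOutput, MAX_OUTPUT_TOKENS, remaining);
  return { fits: outputBudget >= desiredOutput, outputBudget };
}

// A 900,000-token prompt leaves only 100,000 tokens for the response.
console.log(planContext(900_000, 128_000)); // { fits: false, outputBudget: 100000 }
console.log(planContext(500_000, 64_000));  // { fits: true, outputBudget: 64000 }
```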

Agentic Engineering and Safety

Architectural updates target long-horizon tasks and software engineering. The model scores 87.6% on SWE-bench Verified, which measures the ability to resolve real GitHub issues, and currently leads that leaderboard. It introduces task budgets to help manage token consumption across multi-turn agent sessions. Anthropic has also integrated real-time cybersecurity safeguards into the core architecture to keep the model from participating in malicious exploits while preserving its usefulness to security researchers.
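To make the idea of budgeting tokens across a multi-turn session concrete, here is a minimal client-side sketch. It is not the model's built-in task budget feature, whose API shape this page does not describe. The `TaskBudget` class is hypothetical, and the per-turn numbers are simulated rather than measured. The `input_tokens` and `output_tokens` field names follow the usage object returned by the Messages API.

```typescript
// Token usage reported for one turn.
interface TurnUsage {
  input_tokens: number;
  output_tokens: number;
}

// Hypothetical client-side tracker for a whole agent session.
class TaskBudget {
  private spent = 0;
  private readonly limit: number;

  constructor(limit: number) {
    this.limit = limit;
  }

  record(usage: TurnUsage): void {
    this.spent += usage.input_tokens + usage.output_tokens;
  }

  get remaining(): number {
    return Math.max(0, this.limit - this.spent);
  }

  get exhausted(): boolean {
    return this.spent >= this.limit;
  }
}

// Simulated usage; a real loop would read these values from each API response.
const simulatedTurns: TurnUsage[] = [
  { input_tokens: 12_000, output_tokens: 3_000 },
  { input_tokens: 18_000, output_tokens: 4_000 },
  { input_tokens: 25_000, output_tokens: 6_000 },
  { input_tokens: 30_000, output_tokens: 5_000 },
];

const budget = new TaskBudget(50_000);
let turnsRun = 0;
for (const usage of simulatedTurns) {
  if (budget.exhausted) break; // stop before starting another turn
  budget.record(usage);
  turnsRun++;
}
console.log(turnsRun, budget.remaining); // 3 0
```

Because usage is only known after a turn completes, the third turn overshoots the limit and the fourth is skipped. A stricter policy would stop while some headroom remains.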

Use Cases

Discover the different ways you can use Claude Opus 4.7 to achieve great results.

Agentic Software Engineering

Using high effort levels to autonomously refactor repositories and resolve complex cross-file dependencies.

Large-Scale Repository Synthesis

Processing 1 million tokens of source code to map architectural flows and generate technical documentation.

High-Resolution Vision Analysis

Analyzing dense charts and pixel-level UI screenshots with 3.3x more detail than previous frontier models.

Cybersecurity Vulnerability Research

Performing deep security audits and zero-day analysis within verified safety boundaries.

Enterprise Knowledge Extraction

Extracting structured data from massive technical libraries and performing complex cross-document redlining.

Interactive 3D Prototyping

Generating functional 3D environments and game logic from natural language descriptions.
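For the high-resolution vision use case, a common preprocessing step is to keep an image within the supported size and map any coordinates the model reports back to the original pixels. The sketch below assumes the 2576-pixel figure quoted on this page refers to the longer side of the image. That reading, and the helper names `scaleFor`, `resized`, and `toOriginal`, are assumptions for illustration.

```typescript
// Pixel figure quoted on this page; assumed here to limit the longer side.
const MAX_LONG_EDGE = 2576;

interface ImageSize { width: number; height: number; }
interface PixelPoint { x: number; y: number; }

// Scale factor (at most 1) that brings the longer side within the limit.
function scaleFor(size: ImageSize, maxEdge: number = MAX_LONG_EDGE): number {
  return Math.min(1, maxEdge / Math.max(size.width, size.height));
}

// Dimensions of the image after applying the scale factor.
function resized(size: ImageSize, scale: number): ImageSize {
  return {
    width: Math.round(size.width * scale),
    height: Math.round(size.height * scale),
  };
}

// Map a coordinate reported on the resized image back to the original image.
function toOriginal(p: PixelPoint, scale: number): PixelPoint {
  return { x: Math.round(p.x / scale), y: Math.round(p.y / scale) };
}

const screenshot: ImageSize = { width: 5152, height: 2898 };
const scale = scaleFor(screenshot);                         // 0.5
const sent = resized(screenshot, scale);                    // 2576 x 1449
const target = toOriginal({ x: 100, y: 200 }, scale);       // { x: 200, y: 400 }
console.log(scale, sent, target);
```

When an image is already within the limit, `scaleFor` returns 1 and reported coordinates map 1:1 onto the original.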

API Quick Start

anthropic/claude-opus-4-7

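    import Anthropic from '@anthropic-ai/sdk';

    // Reads the API key from the environment.
    const anthropic = new Anthropic({
      apiKey: process.env.ANTHROPIC_API_KEY,
    });

    const msg = await anthropic.messages.create({
      model: "claude-opus-4-7",
      max_tokens: 4096,
      thinking: { type: "adaptive" },
      messages: [{ role: "user", content: "Analyze this architecture for concurrency bugs." }],
    });

    // With thinking enabled, the first content block may not be text,
    // so print every text block instead of assuming content[0].
    for (const block of msg.content) {
      if (block.type === "text") console.log(block.text);
    }

Install the SDK and start making API calls in minutes.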

Community Feedback

See what the community thinks about Claude Opus 4.7

“Claude Opus 4.7 leads on SWE-bench and agentic reasoning, beating GPT-5.4 and Gemini 3.1 Pro.”

“The fact it can generate a procedural 3D skate game in one go is evidence of the model's logic density.”

“Opus 4.7 just dropped. cursorbench jumped from 58% to 70%. XBOW visual acuity 98.5% vs 54.5% on opus 4.6.”

“Claude tends to over-engineer: you ask for a simple function and get an architecture designed to scale for the next decade.”

“Early feedback on Claude Opus 4.7 points to higher token usage and stricter prompting requirements.”

“The X-High reasoning effort is the missing middle ground we needed for complex agentic workflows.”

Related Videos

Watch tutorials, reviews, and discussions about Claude Opus 4.7

“Claude has been and is still the best coding model available today.”

“It's actually the same price as it was before, but they gave you more control over its reasoning.”

“This is working perfectly right. It picked the tools I would have picked myself.”

“The model feels noticeably faster when you don't use the highest thinking levels.”

“You can see it thinking about the edge cases before it even writes a single line of code.”

“This model is way more expensive to run... you're going to be paying 35% more for Opus 4.7.”

“The vision upgrade alone is worth it... it can take images three times the resolution without cropping.”

“If you use the API, you can expect to pay 35% more than before.”

“The tokenization change is the silent killer for your API bills if you aren't careful.”

“It handles deep context much better than the earlier version of Opus 4.”

“The vision capabilities of this model are substantially better.”

“This absolutely 100% warrants an insane title. This seriously blew me away.”

“It correctly identified a bug in my legacy codebase that three other models missed.”

“The level of autonomy in the agent loops is what differentiates this from GPT-5.”

Pro Tips

Expert tips to help you get the most out of Claude Opus 4.7 and achieve better results.

Activate Adaptive Thinking

Explicitly enable the adaptive thinking mode in API calls to ensure Claude selects the optimal reasoning depth.

Use X-High for Agents

Set the effort parameter to xhigh for agentic loops to maximize self-verification and logical precision.

Remove Scaffolding

Remove legacy prompts such as "double-check your work", because the model is optimized for internal self-correction.

Monitor Token Consumption

Track token usage closely: the new tokenizer produces roughly 35% more tokens for identical text inputs.
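The token increase mentioned above can be folded into capacity planning with a little arithmetic. The sketch below treats the 35% figure from this page as an approximation. The helper names are hypothetical, and real counts should come from the usage data returned with each response.

```typescript
// Approximate increase quoted on this page, expressed as a whole percentage.
const TOKENIZER_INCREASE_PERCENT = 35;

// Estimate the token count for the same text under the new tokenizer.
// Integer arithmetic avoids floating-point rounding surprises.
function estimateNewTokens(oldTokens: number): number {
  return Math.ceil((oldTokens * (100 + TOKENIZER_INCREASE_PERCENT)) / 100);
}

// Percentage change between a measured baseline and a new measurement.
function percentIncrease(before: number, after: number): number {
  return ((after - before) * 100) / before;
}

const baseline = 10_000;                       // tokens measured on the older model
const projected = estimateNewTokens(baseline); // 13500
console.log(projected, percentIncrease(baseline, projected)); // 13500 35
```

Comparing `percentIncrease` on measured traffic against the projected figure shows whether a particular workload is affected more or less than the quoted average.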

Related AI Models

Gemini 3.1 Pro

Gemini 3.1 Pro is Google's elite multimodal model featuring the DeepThink reasoning engine, a 1M+ context window, and industry-leading ARC-AGI logic scores.

Gemini 3.1 Flash Live Preview

Gemini 3.1 Flash Live Preview is Google's ultra-low-latency, audio-to-audio model featuring a 131K context window, high-fidelity multimodal reasoning, and...

GPT-5.5

OpenAI

GPT-5.5 is OpenAI's flagship frontier model with a 1M context window and five reasoning effort levels, optimized for autonomous agentic workflows and coding.

Grok-3

xAI

Grok-3 is xAI's flagship reasoning model, featuring deep logic deduction, a 128k context window, and real-time integration with X for live research and coding.

GPT-5.2 Pro

OpenAI

GPT-5.2 Pro is OpenAI's 2025 flagship reasoning model featuring Extended Thinking for SOTA performance in mathematics, coding, and expert knowledge work.

Qwen 3.7 Max

alibaba

Qwen 3.7 Max is Alibaba’s flagship AI model for deep reasoning and autonomous agent tasks, featuring a 256k context window and top-tier coding performance.

Gemini 3 Pro

Google's Gemini 3 Pro is a multimodal powerhouse featuring a 1M token context window, native video processing, and industry-leading reasoning performance.

Claude Opus 4.6

Anthropic

Claude Opus 4.6 is Anthropic's flagship model featuring a 1M token context window, Adaptive Thinking, and world-class coding and reasoning performance.
