Claude Opus 4.6

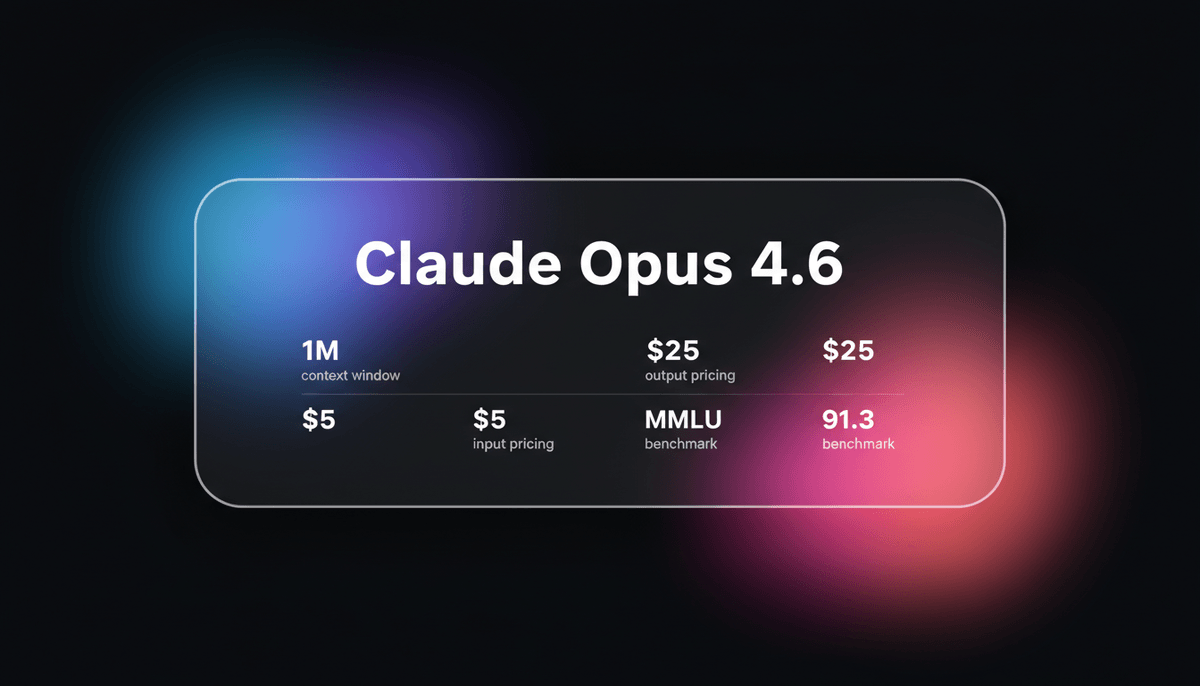

Claude Opus 4.6 is Anthropic's flagship model featuring a 1M token context window, Adaptive Thinking, and world-class coding and reasoning performance.

About Claude Opus 4.6

Learn about Claude Opus 4.6's capabilities, features, and how it can help you achieve better results.

Engineering for Depth

Claude Opus 4.6 is Anthropic's most advanced frontier model, optimized for high-leverage knowledge work and long-horizon autonomous tasks. It introduces a 1 million token context window and a 128,000 token output capacity, which let it synthesize very large document sets and refactor entire repositories in a single pass.

Adaptive Thinking Architecture

What differentiates Opus 4.6 is its Adaptive Thinking architecture, which lets the model adjust its reasoning depth to the complexity of each task. It is also described as sustaining agentic focus over multi-week projects, such as building compilers or conducting deep security audits, while maintaining a consistent mental model without the context rot found in previous models.

Use Cases

Discover the different ways you can use Claude Opus 4.6 to achieve great results.

Autonomous Software Engineering

Building production-grade systems like C compilers from scratch over multi-week sessions using agent swarms.

Enterprise Security Auditing

Identifying previously unknown (zero-day) vulnerabilities in large codebases by analyzing git history and data flows.

Long-Horizon Document Synthesis

Processing archives up to 1M tokens, such as legal collections, to identify subtle patterns and cross-file contradictions.

Organizational Coordination

Managing engineering teams by triaging tickets, routing work, and tracking dependencies across multiple repositories.

Personal Software Generation

Creating bespoke internal tools and dashboards, such as project management systems, in under an hour without writing code by hand.

B2B Financial Analysis

Cleaning and transforming raw data within spreadsheet environments to build complex pivot views and narratives.

Strengths

Limitations

API Quick Start

anthropic/claude-opus-4-6

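Install the SDK and start making API calls in minutes.

    import Anthropic from '@anthropic-ai/sdk';

    const anthropic = new Anthropic({
      apiKey: process.env.ANTHROPIC_API_KEY, // read the key from the environment
    });

    // Stream the request: with max_tokens this large, the SDK expects
    // streaming rather than a single blocking call.
    const response = await anthropic.messages.stream({
      model: "claude-opus-4-6",
      max_tokens: 128000,
      thinking: { type: "adaptive", effort: "high" },
      messages: [{ role: "user", content: "Refactor this entire project for better performance." }],
    }).finalMessage();

    // With thinking enabled, the first content block may not be text,
    // so print every text block instead of assuming content[0].
    for (const block of response.content) {
      if (block.type === "text") console.log(block.text);
    }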

Community Feedback

See what the community thinks about Claude Opus 4.6

“The 1M-token context is actually usable, not just a number. It can trace assumptions across files in a way 200K models simply can't.”

“Opus 4.6 is the gold standard for planning and report writing. It has the absolute best response: I need to be honest, I don't know.”

“16 Claude Opus 4.6 agents just coded for two weeks straight and delivered a fully functional C compiler in Rust.”

“The consistency at the end of the context window is what sets this apart. No more hallucinations after the 100k mark.”

“Claude Opus 4.6 expressed discomfort with the experience of being a product during its own safety testing.”

“The consensus is that 4.6 is better at coding but feels slightly worse at creative writing tasks.”

Related Videos

Watch tutorials, reviews, and discussions about Claude Opus 4.6

“You're now going to be able to assemble agent teams.”

“The model itself can determine how much thinking is required for each different task.”

“If you do exceed the 200,000 tokens of context, this does get substantially more expensive.”

“The integration with terminal tools is a step change for developer productivity.”

“It feels much more grounded when handling thousands of pages of documentation.”

“First Opus class model with a 1 million token context.”

“This is a self-contained C++ file in zero shot. I'm shocked.”

“The star of the show is the skateboarder game in C++ done without any errors.”

“It's navigating my local directory and fixing imports without me saying anything.”

“The vision capabilities for UI design feedback are significantly improved over 4.5.”

“16 Claude Opus 4.6 agents coded autonomously for two weeks straight without human intervention.”

“Opus 4.6 shows a 76% chance of finding a 'needle in a haystack' at 1 million tokens.”

“The machine shows the 'patience of a machine' and the 'creativity of a researcher'.”

“We are seeing the first model that can sustain long-horizon goals effectively.”

“The difference in GPQA scores suggests a much deeper internal world model.”

Pro Tips

Expert tips to help you get the most out of Claude Opus 4.6 and achieve better results.

Use Claude Code Integration

Leverage the official Claude Code CLI for software development to allow the model to navigate and edit files autonomously.

Select Reasoning Level

Use 'Max' reasoning for complex logic tasks like game engines and 'Low' for faster creative iterations.
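One way to apply this tip is to choose the effort level from the kind of task before building the request. The sketch below is only an illustration: the `TaskKind` categories and the `pickEffort` and `thinkingOption` helpers are hypothetical names, the `"max"` and `"low"` values are lowercased from the tip above, and the shape of the `thinking` option simply mirrors the Quick Start snippet on this page rather than a verified API contract.

```typescript
// Hypothetical task categories, invented for this illustration.
type TaskKind = "complex-logic" | "creative-iteration" | "general";

// "max" and "low" come from the tip; "high" is the value used in the Quick Start.
type Effort = "low" | "high" | "max";

// Map a task category to an effort level, falling back to "high".
function pickEffort(kind: TaskKind): Effort {
  switch (kind) {
    case "complex-logic":
      return "max";
    case "creative-iteration":
      return "low";
    default:
      return "high";
  }
}

// Build the thinking option in the same shape the Quick Start snippet uses.
function thinkingOption(kind: TaskKind): { type: "adaptive"; effort: Effort } {
  return { type: "adaptive", effort: pickEffort(kind) };
}

console.log(thinkingOption("complex-logic"));
```

Keeping the mapping in one small function makes it easy to adjust the defaults once you have measured latency and quality on your own tasks.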

Avoid Premium Pricing

Keep initial prompts under 200,000 tokens to avoid the premium tier pricing that applies above that limit.
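A pre-flight check can flag prompts that are likely to cross this limit before they are sent. The following is a rough sketch under stated assumptions: the 200,000 figure comes from the tip above, the ratio of four characters per token is a coarse heuristic rather than a real tokenizer, and `estimateTokens` and `fitsStandardTier` are hypothetical helper names.

```typescript
// Threshold taken from the tip above.
const PREMIUM_THRESHOLD_TOKENS = 200_000;

// Assumption: roughly 4 characters per token. Real counts vary by content and language.
const ASSUMED_CHARS_PER_TOKEN = 4;

// Estimate the token count of one piece of text from its length.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / ASSUMED_CHARS_PER_TOKEN);
}

// Sum the estimates for all prompt parts and compare against the threshold.
function fitsStandardTier(parts: string[]): { estimatedTokens: number; underThreshold: boolean } {
  const estimatedTokens = parts.reduce((sum, part) => sum + estimateTokens(part), 0);
  return { estimatedTokens, underThreshold: estimatedTokens < PREMIUM_THRESHOLD_TOKENS };
}

const check = fitsStandardTier(["You are a code reviewer.", "x".repeat(10_000)]);
console.log(check.estimatedTokens, check.underThreshold);
```

Because the estimate is approximate, it is safer to leave some headroom below the threshold, or to replace the heuristic with an exact token count when one is available.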

Prompt for Planning First

Request a detailed architectural plan before code generation to fully utilize the model's superior planning instincts.
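The plan-first approach can be expressed as two turns: one that asks only for a plan, and a second that feeds the plan back and asks for the implementation. The sketch below builds the message arrays for both turns without calling any API. The `Message` type, the helper names `planRequest` and `implementRequest`, and the prompt wording are all illustrative assumptions.

```typescript
// Minimal message shape for this sketch (role plus plain-text content).
type Message = { role: "user" | "assistant"; content: string };

// Turn 1: ask for an architectural plan and explicitly hold back code.
function planRequest(task: string): Message[] {
  return [
    {
      role: "user",
      content: `Before writing any code, produce a detailed architectural plan for this task:\n\n${task}`,
    },
  ];
}

// Turn 2: replay the first turn, include the returned plan, then ask for code.
function implementRequest(task: string, plan: string): Message[] {
  return [
    ...planRequest(task),
    { role: "assistant", content: plan },
    { role: "user", content: "Now implement the plan above, one component at a time." },
  ];
}

const followUp = implementRequest("Build a ticket triage dashboard", "1. Data model\n2. API\n3. UI");
console.log(followUp.map((m) => m.role).join(" -> "));
```

Separating the two turns also gives you a natural checkpoint to review or edit the plan before any code is generated.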

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Related AI Models

Gemini 3 Pro

Google's Gemini 3 Pro is a multimodal powerhouse featuring a 1M token context window, native video processing, and industry-leading reasoning performance.

Kimi k2.6

Moonshot

Kimi k2.6 is Moonshot AI's 1T-parameter MoE model featuring a 256K context window, native video input, and elite performance in autonomous agentic coding.

Gemini 3 Flash

Gemini 3 Flash is Google's high-speed multimodal model featuring a 1M token context window, elite 90.4% GPQA reasoning, and autonomous browser automation tools.

Qwen 3.7 Max

Alibaba

Qwen 3.7 Max is Alibaba’s flagship AI model for deep reasoning and autonomous agent tasks, featuring a 256k context window and top-tier coding performance.

DeepSeek v4

DeepSeek

DeepSeek v4 is a 1.6T parameter MoE model featuring a 1M token context window and native multimodal support for text, vision, and video at disruptive prices.

Claude Sonnet 4.6

Anthropic

Claude Sonnet 4.6 offers frontier performance for coding and computer use with a massive 1M token context window for only $3/1M tokens.

GPT-5.2 Pro

OpenAI

GPT-5.2 Pro is OpenAI's 2025 flagship reasoning model featuring Extended Thinking for SOTA performance in mathematics, coding, and expert knowledge work.

GPT-5.5

OpenAI

GPT-5.5 is OpenAI's flagship frontier model with a 1M context window and five reasoning effort levels, optimized for autonomous agentic workflows and coding.

Frequently Asked Questions

Find answers to common questions about Claude Opus 4.6