Claude Sonnet 4.6

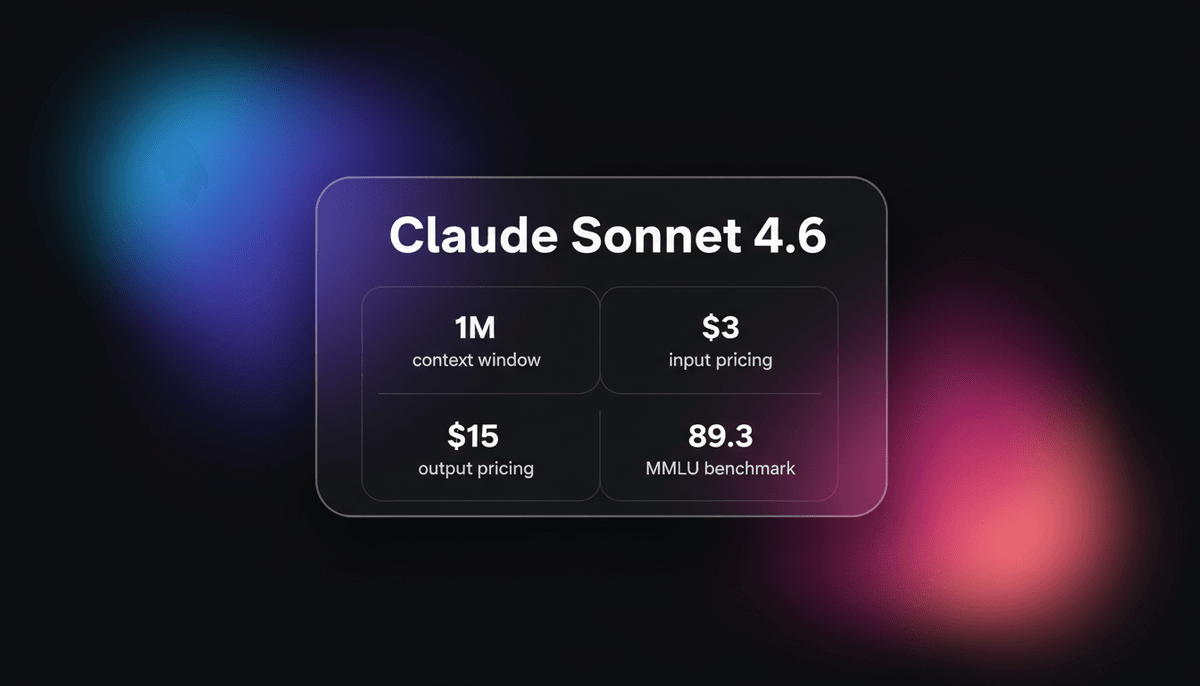

Claude Sonnet 4.6 offers frontier performance for coding and computer use, with a 1M-token context window, at $3 per 1M input tokens.

About Claude Sonnet 4.6

Learn about Claude Sonnet 4.6's capabilities, features, and how it can help you achieve better results.

High-Performance Agentic Intelligence

Claude Sonnet 4.6 is Anthropic's most versatile model, designed to act as a primary engine for complex enterprise workflows and autonomous agents. Released on February 17, 2026, it introduces human-level computer use capabilities and a 1-million-token context window. The model architecture balances the speed of mid-tier systems with the reasoning depth typically reserved for the Opus class, making it a sustainable choice for high-volume production environments.

Adaptive Thinking and Multimodality

At its technical core, Sonnet 4.6 utilizes an Adaptive Thinking mechanism. This allows developers to scale the internal reasoning effort based on the specific requirements of a task, optimizing for either sub-second latency or deep logical verification. The model is natively multimodal, offering state-of-the-art performance in processing text, high-resolution images, and audio files. It excels at interpreting dense technical documentation and complex visual data, such as architectural blueprints or financial charts.
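As a rough illustration of the image side of that multimodality, the sketch below builds a user message that pairs one base64-encoded image with a question, using the image content-block shape of the Anthropic Messages API. The helper name `buildImageQuestion`, the placeholder payload, and the sample question are assumptions made for this example; nothing here calls the API.

```typescript
// Image media types accepted for base64 image blocks.
type ImageMediaType = "image/png" | "image/jpeg" | "image/gif" | "image/webp";

interface ImageBlock {
  type: "image";
  source: { type: "base64"; media_type: ImageMediaType; data: string };
}

interface TextBlock {
  type: "text";
  text: string;
}

interface UserMessage {
  role: "user";
  content: Array<ImageBlock | TextBlock>;
}

// Hypothetical helper: places the image first, then the question about it.
function buildImageQuestion(
  base64Data: string,
  mediaType: ImageMediaType,
  question: string,
): UserMessage {
  return {
    role: "user",
    content: [
      { type: "image", source: { type: "base64", media_type: mediaType, data: base64Data } },
      { type: "text", text: question },
    ],
  };
}

// Placeholder payload; a real request would carry the base64 contents of a chart image.
const chartMessage = buildImageQuestion(
  "iVBORw0KGgo=",
  "image/png",
  "Which quarter shows the largest revenue drop?",
);
console.log(JSON.stringify(chartMessage, null, 2));
```

The resulting object can be passed as one entry of the `messages` array in a request.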

The Industry Standard for Coding

With a record-breaking 79.6% on SWE-bench Verified, Sonnet 4.6 has become the default choice for software engineering automation. Its ability to reason across vast codebases within its 1M context window allows it to resolve multi-file bugs and plan architectural refactors with minimal human intervention. By offering near-Opus level intelligence at $3 per million input tokens, it removes the financial barriers previously associated with deploying truly autonomous AI systems.
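To make the pricing concrete, here is a small sketch of the input-side arithmetic at the $3 per million input tokens quoted above. It covers input tokens only, since this page does not state an output price; the function name is an assumption for illustration.

```typescript
// Input price quoted on this page: $3 per 1,000,000 input tokens.
const INPUT_PRICE_USD_PER_MILLION = 3;

// Returns the input-side cost in USD for a given number of input tokens.
function estimateInputCostUsd(inputTokens: number): number {
  if (!Number.isFinite(inputTokens) || inputTokens < 0) {
    throw new RangeError("inputTokens must be a non-negative finite number");
  }
  return (inputTokens / 1_000_000) * INPUT_PRICE_USD_PER_MILLION;
}

console.log(estimateInputCostUsd(1_000_000)); // 3
console.log(estimateInputCostUsd(250_000));   // 0.75
```

Filling the full 1M-token window once therefore costs about $3 in input tokens, before any output tokens are counted.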

Use Cases

Discover the different ways you can use Claude Sonnet 4.6 to achieve great results.

Autonomous Software Engineering

Resolving complex multi-file GitHub issues and executing full-repository refactors, backed by a 79.6% score on SWE-bench Verified.

Human-Level Computer Use

Directly navigating desktop software and web interfaces to complete multi-step administrative tasks without custom API integrations.

Large-Scale Document Analysis

Reviewing thousands of pages of legal contracts or research papers simultaneously within the 1-million-token context window.
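When a document set may exceed the window, one possible approach is to group documents into batches that each fit. The sketch below assumes a rough four-characters-per-token estimate (not a real tokenizer) and reserves 20% of the window for instructions and the reply; both figures, and all names used, are assumptions for illustration.

```typescript
const CONTEXT_WINDOW_TOKENS = 1_000_000; // window size stated on this page
const CHARS_PER_TOKEN = 4;               // assumption: rough heuristic, not a tokenizer

interface Doc {
  name: string;
  text: string;
}

function estimateTokens(text: string): number {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

// Greedily groups documents, in order, so each group's estimate stays within the budget.
function batchDocuments(docs: Doc[], budgetTokens: number): Doc[][] {
  const batches: Doc[][] = [];
  let current: Doc[] = [];
  let used = 0;
  for (const doc of docs) {
    const size = estimateTokens(doc.text);
    if (size > budgetTokens) {
      throw new RangeError(`${doc.name} alone exceeds the budget`);
    }
    if (used + size > budgetTokens && current.length > 0) {
      batches.push(current);
      current = [];
      used = 0;
    }
    current.push(doc);
    used += size;
  }
  if (current.length > 0) batches.push(current);
  return batches;
}

// Keep 20% of the window free for instructions and the reply (assumed margin).
const budget = Math.floor(CONTEXT_WINDOW_TOKENS * 0.8);
const sampleDocs: Doc[] = [
  { name: "contract-a.txt", text: "x".repeat(2_000_000) }, // about 500,000 tokens
  { name: "contract-b.txt", text: "x".repeat(1_600_000) }, // about 400,000 tokens
  { name: "contract-c.txt", text: "x".repeat(400_000) },   // about 100,000 tokens
];
const batches = batchDocuments(sampleDocs, budget);
console.log(batches.map((batch) => batch.map((d) => d.name)));
```

With these sample sizes the first contract lands in its own batch and the other two share a second one.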

Financial Intelligence and Forecasting

Processing earnings calls and quarterly reports to identify subtle market anomalies using high-effort adaptive reasoning.

Multimodal Technical Support

Interpreting complex technical diagrams, circuit board photos, and audio recordings to provide precise troubleshooting steps.

Agentic Business Strategy

Planning and executing long-horizon operations by leveraging top-tier scores on strategy and logic-based benchmarks.

API Quick Start

anthropic/claude-sonnet-4-6

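Install the SDK and start making API calls in minutes.

    import Anthropic from '@anthropic-ai/sdk';

    // Reads the API key from the environment.
    const anthropic = new Anthropic({
      apiKey: process.env.ANTHROPIC_API_KEY,
    });

    const response = await anthropic.messages.create({
      model: "claude-4-sonnet-20260217",
      max_tokens: 4096,
      thinking: { type: "adaptive", effort: "high" },
      messages: [
        { role: "user", content: "Analyze this repository for architectural bottlenecks." }
      ],
    });

    // With thinking enabled, the first content block may not be text,
    // so print every text block instead of assuming content[0] is text.
    for (const block of response.content) {
      if (block.type === "text") console.log(block.text);
    }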

Community Feedback

See what the community thinks about Claude Sonnet 4.6

“Context is noise. Bigger token windows are a trap. Give agents only the narrow, curated signal they need.”

“This is Claude Sonnet 4.6: our most capable Sonnet model yet. It’s a full upgrade across coding, computer use, and agent planning.”

“The performance-to-cost ratio of Claude Sonnet 4.6 is extraordinary, it’s hard to overstate how fast these models are evolving.”

“Sonnet 4.6 is now live in Claude Code. It's cheaper than Opus 4.6 and nears Opus-level intelligence.”

“Claude 4.6 is the new leader in agentic performance, slightly ahead of Opus 4.6 on real-world knowledge work tasks.”

“The fact that this model can navigate a computer interface with 72% accuracy basically ends the need for most bespoke APIs.”

Related Videos

Watch tutorials, reviews, and discussions about Claude Sonnet 4.6

“Sonnet 4.6 is here and it may replace Opus for 90% of what you do day to day.”

“But the best part, it's 40% cheaper than using Opus 4.6.”

“The SWE-bench results are actually unbelievable for a mid-tier model.”

“You can effectively feed it an entire codebase and it doesn't lose the plot.”

“Adaptive thinking effort allows you to trade off speed for deeper logic.”

“Early users are actually reporting that it is capable of near humanlike performance on complex spreadsheet manipulation.”

“This model is around twice as fast in comparison to the opus.”

“The 1 million token context window is currently in beta but works very well.”

“It navigates software interfaces without needing specific API integrations.”

“The coding capability on Python and JavaScript is basically at the ceiling.”

“Anthropic says the new context window is big enough to hold entire code bases and reason effectively across all that context.”

“Opus 4.6 is the nuclear bomb option... but now we finally have a scalpel which is awesome news.”

“Computer use is the standout feature here, actually moving the mouse and typing.”

“Financial analysts are going to love the reasoning depth for document review.”

“It's the first time a 'Sonnet' model has felt like the absolute best in class.”

Pro Tips

Expert tips to help you get the most out of Claude Sonnet 4.6 and achieve better results.

Optimize Thinking Effort

Use the 'adaptive' thinking mode to save costs on simple queries while reserving 'max' effort for math and logic tasks.
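One way to apply this tip is to pick the effort level from the kind of task before building the request. The sketch below mirrors the `thinking` shape used in this page's quick-start rather than a verified SDK signature. The `"high"` label comes from that quick-start and `"max"` from the tip above; the `"low"` label, the task categories, and the mapping are assumptions for illustration.

```typescript
// "high" and "max" appear on this page; "low" is an assumed label.
type Effort = "low" | "high" | "max";
type TaskKind = "lookup" | "coding" | "math" | "logic";

interface ThinkingConfig {
  type: "adaptive";
  effort: Effort;
}

// Hypothetical mapping: cheap effort for simple queries, maximum for math and logic.
const EFFORT_BY_TASK: Record<TaskKind, Effort> = {
  lookup: "low",
  coding: "high",
  math: "max",
  logic: "max",
};

function thinkingFor(task: TaskKind): ThinkingConfig {
  return { type: "adaptive", effort: EFFORT_BY_TASK[task] };
}

console.log(thinkingFor("lookup"));
console.log(thinkingFor("math"));
```

Keeping the mapping in one table makes it easy to adjust once real latency and cost figures are measured.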

Implement Context Compaction

Enable prompt caching and compaction features to handle the 1M token window efficiently without redundant costs.
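For the caching half of this tip, a minimal sketch of a request body that marks a large shared prefix as cacheable with the Messages API `cache_control` field might look like this. It builds the payload only and does not call the API; compaction is not shown. The helper name, the system instruction text, and the `max_tokens` value are assumptions, and the model ID is copied from this page's quick-start, so confirm it before use.

```typescript
interface SystemTextBlock {
  type: "text";
  text: string;
  cache_control?: { type: "ephemeral" };
}

interface MessageRequest {
  model: string;
  max_tokens: number;
  system: SystemTextBlock[];
  messages: Array<{ role: "user" | "assistant"; content: string }>;
}

// Hypothetical helper: the large shared context goes in a cacheable system block,
// while the per-call question stays in the uncached user message.
function buildCachedRequest(sharedContext: string, question: string): MessageRequest {
  return {
    model: "claude-4-sonnet-20260217", // from this page's quick-start; confirm the ID
    max_tokens: 1024,
    system: [
      { type: "text", text: "You are a code reviewer." },
      // The breakpoint makes the prefix up to and including this block cacheable.
      { type: "text", text: sharedContext, cache_control: { type: "ephemeral" } },
    ],
    messages: [{ role: "user", content: question }],
  };
}

const cachedRequest = buildCachedRequest("<repository contents>", "Where is auth handled?");
console.log(JSON.stringify(cachedRequest, null, 2));
```

Repeated calls that reuse the same `sharedContext` string can then hit the cached prefix instead of paying full input price for it each time.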

Structured Behavioral Anchoring

Utilize a central project markdown file to maintain a persistent source of truth for the model's architectural decisions.

Video Frame Extraction

Since native video is not supported, extract key frames at 1fps for the most accurate visual analysis of video content.
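The 1 fps sampling can be sketched as follows: one function lists the timestamps that would be sampled, and another builds arguments for an `ffmpeg` run using its `fps` filter. The function names and file paths are assumptions for illustration, and the output directory is assumed to exist already.

```typescript
// Timestamps (in seconds) of the frames sampled at the given rate.
function frameTimestamps(durationSeconds: number, fps = 1): number[] {
  if (durationSeconds < 0 || fps <= 0) {
    throw new RangeError("durationSeconds must be >= 0 and fps must be > 0");
  }
  const count = Math.floor(durationSeconds * fps);
  return Array.from({ length: count }, (_, i) => i / fps);
}

// Arguments for an ffmpeg run that writes one image per sampled frame.
function ffmpegFrameArgs(input: string, outputPattern: string, fps = 1): string[] {
  return ["-i", input, "-vf", `fps=${fps}`, outputPattern];
}

console.log(frameTimestamps(5)); // [0, 1, 2, 3, 4]
console.log(["ffmpeg", ...ffmpegFrameArgs("demo.mp4", "frames/frame_%04d.png")].join(" "));
```

Each extracted frame can then be sent to the model as a separate image input.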

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Related AI Models

DeepSeek v4

DeepSeek

DeepSeek v4 is a 1.6T parameter MoE model featuring a 1M token context window and native multimodal support for text, vision, and video at disruptive prices.

Gemini 3 Flash

Google

Gemini 3 Flash is Google's high-speed multimodal model featuring a 1M token context window, elite 90.4% GPQA reasoning, and autonomous browser automation tools.

Kimi k2.6

Moonshot

Kimi k2.6 is Moonshot AI's 1T-parameter MoE model featuring a 256K context window, native video input, and elite performance in autonomous agentic coding.

Claude Opus 4.6

Anthropic

Claude Opus 4.6 is Anthropic's flagship model featuring a 1M token context window, Adaptive Thinking, and world-class coding and reasoning performance.

Qwen3.5-397B-A17B

Alibaba

Qwen3.5-397B-A17B is Alibaba's flagship open-weight MoE model. It features native multimodal reasoning, a 1M context window, and a 19x decoding throughput...

GPT-5.1

OpenAI

GPT-5.1 is OpenAI’s advanced reasoning flagship featuring adaptive thinking, native multimodality, and state-of-the-art performance in math and technical...

Gemini 3 Pro

Google

Google's Gemini 3 Pro is a multimodal powerhouse featuring a 1M token context window, native video processing, and industry-leading reasoning performance.

Kimi K2.5

Moonshot

Discover Moonshot AI's Kimi K2.5, a 1T-parameter open-source agentic model featuring native multimodal capabilities, a 262K context window, and SOTA reasoning.

Frequently Asked Questions

Find answers to common questions about Claude Sonnet 4.6