Qwen3.5-397B-A17B

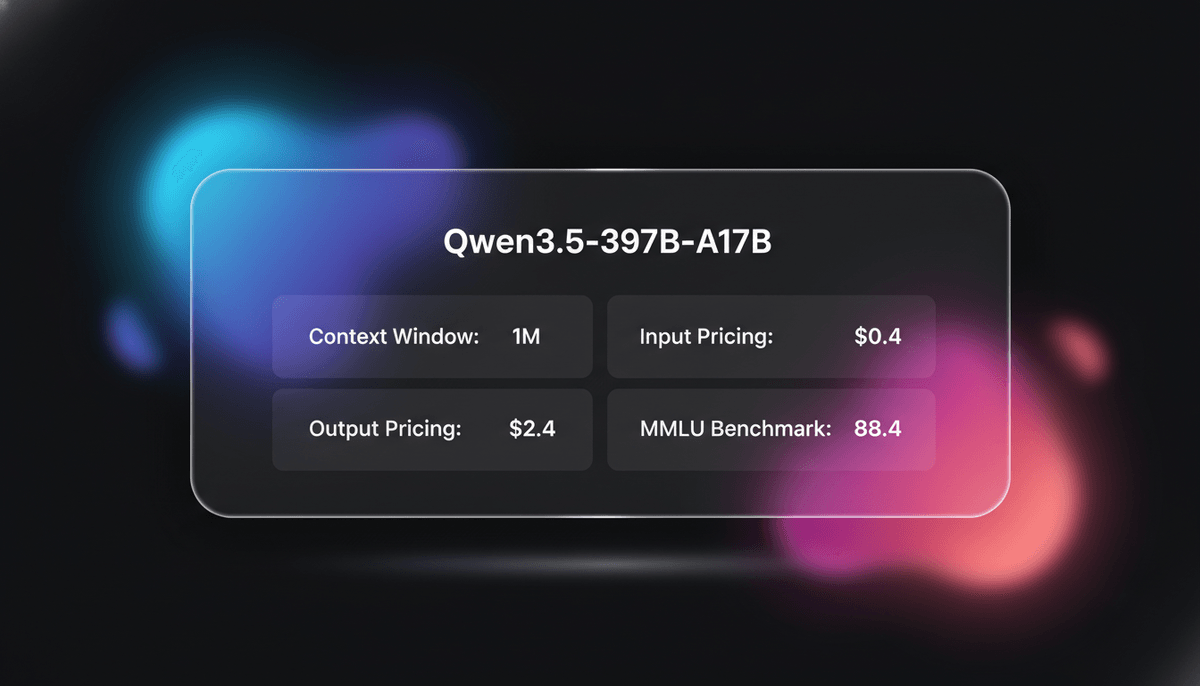

Qwen3.5-397B-A17B is Alibaba's flagship open-weight MoE model. It features native multimodal reasoning, a 1M context window, and a 19x decoding throughput...

About Qwen3.5-397B-A17B

Learn about Qwen3.5-397B-A17B's capabilities, features, and how it can help you achieve better results.

High-Efficiency Mixture of Experts

Qwen3.5-397B-A17B is a flagship native multimodal model built on a hybrid architecture that combines linear attention, implemented with Gated Delta Networks, with a sparse Mixture-of-Experts (MoE). Of its 397 billion total parameters, only 17 billion are activated per forward pass, which keeps inference cost and latency well below those of a dense model of similar size. It is optimized for both language and visual tasks, with a 250k-token vocabulary and support for 201 languages and dialects.
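To make the sparse-activation idea concrete, here is a minimal sketch of top-k expert routing, the general mechanism behind MoE layers. It is not Qwen3.5's actual router: the expert count, the value of k, and the function names `routeTopK` and `activeFraction` are hypothetical and chosen only for illustration. The last line derives the active-parameter share from the 17B and 397B figures quoted above.

```javascript
// Toy top-k expert routing. The expert count and k are illustrative,
// not Qwen3.5's real configuration.
function routeTopK(routerLogits, k) {
  // Pair each logit with its expert index, then keep the k largest.
  const ranked = routerLogits
    .map((logit, expert) => ({ expert, logit }))
    .sort((a, b) => b.logit - a.logit)
    .slice(0, k);

  // Softmax over the selected logits only, so the k gate weights sum to 1.
  const maxLogit = ranked[0].logit;
  const exps = ranked.map((r) => Math.exp(r.logit - maxLogit));
  const total = exps.reduce((sum, v) => sum + v, 0);

  return ranked.map((r, i) => ({ expert: r.expert, weight: exps[i] / total }));
}

// Share of parameters active per forward pass, from the figures quoted above.
function activeFraction(activeParams, totalParams) {
  return activeParams / totalParams;
}

const picks = routeTopK([0.1, 2.0, -1.0, 1.5, 0.3, 0.0, -0.5, 0.9], 2);
console.log(picks.map((p) => p.expert)); // experts 1 and 3 hold the largest logits
console.log((activeFraction(17e9, 397e9) * 100).toFixed(1) + '%'); // 4.3%
```

Only the selected experts run for a given token, which is why the per-token compute tracks the active parameter count rather than the total.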

Native Multimodal Agentic Workflows

The model operates as a native multimodal agent and can process up to one million tokens of context, roughly equivalent to two hours of video. It adds a dedicated Thinking Mode for complex logical reasoning and is built for agentic workflows such as web development, GUI navigation, and real-world spatial reasoning. It supports FP8 end-to-end training and a disaggregated training-inference framework, which helps it scale efficiently for enterprise-grade AI applications.
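The "one million tokens is about two hours of video" equivalence implies an average token rate, which is handy for rough budgeting. The sketch below only does that arithmetic. The real rate depends on frame sampling and resolution, which this page does not specify, so treat the result as an estimate; the function names are hypothetical.

```javascript
const CONTEXT_TOKENS = 1_000_000;
const VIDEO_SECONDS = 2 * 60 * 60; // two hours = 7200 seconds

// Average tokens consumed per second of video, implied by the stated equivalence.
function impliedTokensPerSecond(contextTokens, videoSeconds) {
  return contextTokens / videoSeconds;
}

// Rough token budget for a clip of the given length at that average rate.
function estimateVideoTokens(durationSeconds, tokensPerSecond) {
  return Math.ceil(durationSeconds * tokensPerSecond);
}

const rate = impliedTokensPerSecond(CONTEXT_TOKENS, VIDEO_SECONDS);
console.log(rate.toFixed(1)); // 138.9
console.log(estimateVideoTokens(10 * 60, rate)); // 83334 for a 10-minute clip
```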

Open Weights for Global Accessibility

Released under the Apache 2.0 license, this model provides the open-source community with frontier-level capabilities previously restricted to proprietary systems. It bridges the gap between massive parameter counts and practical deployment, allowing organizations to run state-of-the-art reasoning tasks on private infrastructure with significantly lower compute overhead than dense 400B alternatives.

Use Cases

Discover the different ways you can use Qwen3.5-397B-A17B to achieve great results.

Long-Horizon Video Analysis

Analyze up to two hours of video content to extract logic, reverse-engineer code from footage, or generate structured summaries.

PhD-Level STEM Research

Solve PhD-level science questions and olympiad-level math problems using its adaptive deep-thinking mode.

Autonomous GUI Agents

Automate interactions with smartphones and computers to handle office workflows and cross-app mobile navigation.

Visual Software Engineering

Execute 'vibe coding' by turning natural language instructions and UI sketches into functional frontend code.

Document Intelligence

Process complex documents, charts, and handwritten sketches to extract structured data and reverse-engineer layouts.

Spatial AI Applications

Understand pixel-level relationships for embodied AI tasks like autonomous driving scene analysis and robotic navigation.
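As an illustration of the document-intelligence use case above, the sketch below builds a chat-completions request that pairs one image with an extraction instruction. It uses the standard OpenAI-style multimodal message format (`image_url` plus `text` content parts) and assumes the endpoint accepts that format for this model. The helper name `buildExtractionRequest`, the example URL, and the field names are hypothetical.

```javascript
// Builds a request body pairing one image with a JSON-extraction instruction.
// Assumes the OpenAI-style multimodal content format is accepted by the endpoint.
function buildExtractionRequest(imageUrl, fields) {
  return {
    model: 'qwen3.5-plus', // model ID as used in the API Quick Start on this page
    messages: [
      {
        role: 'user',
        content: [
          { type: 'image_url', image_url: { url: imageUrl } },
          {
            type: 'text',
            text: `Extract these fields and reply with JSON only: ${fields.join(', ')}.`,
          },
        ],
      },
    ],
  };
}

const request = buildExtractionRequest('https://example.com/invoice.png', [
  'vendor',
  'date',
  'total',
]);
console.log(JSON.stringify(request, null, 2));

// Hypothetical usage with an OpenAI SDK client:
// const completion = await client.chat.completions.create(request);
```

Keeping the payload construction in a pure function makes it easy to inspect and log the request before sending it.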

API Quick Start

Install the SDK and start making API calls in minutes.

alibaba/qwen3.5-plus

```javascript
import OpenAI from 'openai';

// DashScope's OpenAI-compatible endpoint; the API key is read from the environment.
const client = new OpenAI({
  apiKey: process.env.DASHSCOPE_API_KEY,
  baseURL: 'https://dashscope-intl.aliyuncs.com/compatible-mode/v1',
});

async function main() {
  const completion = await client.chat.completions.create({
    model: 'qwen3.5-plus',
    messages: [{ role: 'user', content: 'Analyze the logic of this MoE architecture.' }],
    // The Node SDK has no extra_body option (that is the Python SDK's mechanism);
    // non-standard fields are passed as top-level request properties.
    enable_thinking: true,
  });

  console.log(completion.choices[0].message.content);
}

main();
```

Community Feedback

See what the community thinks about Qwen3.5-397B-A17B

“Qwen3.5-397B is essentially a GPT-5 class model but open-weight. The DeltaNet architecture is solving the MoE latency issues perfectly.”

“Native multimodal reasoning on Qwen3.5 looks incredible. 1M context + video analysis is going to change agent workflows.”

“The decision to use FP8 end-to-end training while maintaining BF16 in sensitive layers is a masterclass in stability optimizations.”

“This is the first time I've seen an open model actually beat Gemini 1.5 Pro on complex multimodal agent tasks.”

“The 19x decoding throughput improvement over Qwen3-Max makes this a viable alternative for production-level agents.”

“I was surprised by how well it handles 4-bit quantization. It keeps almost all the reasoning capability on a dual A100 setup.”

Related Videos

Watch tutorials, reviews, and discussions about Qwen3.5-397B-A17B

“A 397 billion parameter model, but with 17 billion parameters active.”

“When decoding at 256K, this model is 19 times faster than Qwen 3 Max.”

“The native vision-language reasoning is what sets this apart for agentic workflows.”

“This beats most closed models on the standard math benchmarks.”

“Running this locally is tough, but the quantized versions are workable on high-end macs.”

“397 billion parameter model with 17 billion active parameters. It's natively multimodal.”

“It's probably currently the best open-source multimodal model.”

“The ability to process two hours of video natively is a massive advantage.”

“Look at these logic scores, it's hitting GPT-4o levels consistently.”

“The Apache license makes this very attractive for corporate data privacy.”

“OCR structured extraction. You have a messy PDF... and you need to turn that into clean JSON. This model excels there.”

“You get the intelligence of a 400 billion parameter giant... but you pay the compute cost of a 17 billion parameter model.”

“It handles long-context retrieval better than the previous version.”

“The tool use integration is built into the base training, not an afterthought.”

“Thinking mode allows it to correct its own logic before outputting.”

Pro Tips

Expert tips to help you get the most out of Qwen3.5-397B-A17B and achieve better results.

Toggle Thinking Mode

Pass the 'enable_thinking: true' parameter in your API call to activate deep reasoning for math, coding, and complex logic puzzles.

Utilize Fast Mode

Use 'Fast' mode for simple queries to get instant answers without consuming tokens on unnecessary internal thinking phases.
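The two tips above can be combined into a single per-request switch. This is a minimal sketch under two assumptions: that the endpoint reads `enable_thinking` as a top-level field of the request body (which is how the OpenAI Node SDK forwards non-standard fields), and that "Fast" mode corresponds to sending `enable_thinking: false`. The helper name `buildChatRequest` is hypothetical.

```javascript
// Builds chat-completions parameters with thinking switched on or off.
// Assumption: "Fast" mode is equivalent to enable_thinking: false.
function buildChatRequest(prompt, { thinking = false } = {}) {
  return {
    model: 'qwen3.5-plus',
    messages: [{ role: 'user', content: prompt }],
    enable_thinking: thinking,
  };
}

// Simple lookup: skip the reasoning phase.
const fast = buildChatRequest('What is the capital of France?');

// Multi-step problem: allow deep reasoning.
const deep = buildChatRequest('Prove that the sum of two odd integers is even.', {
  thinking: true,
});

console.log(fast.enable_thinking, deep.enable_thinking); // false true

// Hypothetical usage with an OpenAI SDK client:
// const completion = await client.chat.completions.create(deep);
```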

Optimize Video Prompts

When analyzing video, prompt the model to focus on the final dynamic outcome rather than frame-by-frame analysis for better temporal coherence.

Leverage Quantization

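Use 4-bit or 8-bit quantization (GGUF/EXL2) to run the model locally. Even at 4-bit, the weights alone need roughly 200GB of memory, so this is practical only on high-memory workstations or multi-GPU setups rather than on typical consumer hardware.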
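The memory figure follows from simple arithmetic on the 397B total parameter count. The sketch below estimates weight storage only. It ignores the KV cache, activations, and per-format overhead, so real requirements will be higher; the function name `weightMemoryGB` is hypothetical.

```javascript
// Approximate memory needed to hold the weights alone, in decimal gigabytes.
function weightMemoryGB(totalParams, bitsPerWeight) {
  const bytes = (totalParams * bitsPerWeight) / 8;
  return bytes / 1e9;
}

const TOTAL_PARAMS = 397e9;

for (const bits of [4, 8, 16]) {
  console.log(`${bits}-bit: ~${weightMemoryGB(TOTAL_PARAMS, bits).toFixed(1)} GB`);
}
// 4-bit: ~198.5 GB
// 8-bit: ~397.0 GB
// 16-bit: ~794.0 GB
```

By this estimate a budget of about 200GB covers 4-bit weights only, and 8-bit quantization needs roughly twice that.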

Related AI Models

GPT-5.1

OpenAI

GPT-5.1 is OpenAI’s advanced reasoning flagship featuring adaptive thinking, native multimodality, and state-of-the-art performance in math and technical...

Kimi K2.5

Moonshot

Discover Moonshot AI's Kimi K2.5, a 1T-parameter open-source agentic model featuring native multimodal capabilities, a 262K context window, and SOTA reasoning.

Grok-4

xAI

Grok-4 by xAI is a frontier model featuring a 2M token context window, real-time X platform integration, and world-record reasoning capabilities.

Claude Opus 4.5

Anthropic

Claude Opus 4.5 is Anthropic's most powerful frontier model, delivering record-breaking 80.9% SWE-bench performance and advanced autonomous agency for coding.

Gemini 3.1 Flash-Lite

Google

Gemini 3.1 Flash-Lite is Google's fastest, most cost-efficient model. Features 1M context, native multimodality, and 363 tokens/sec speed for scale.

Claude Sonnet 4.6

Anthropic

Claude Sonnet 4.6 offers frontier performance for coding and computer use with a massive 1M token context window for only $3/1M tokens.

DeepSeek v4

DeepSeek

DeepSeek v4 is a 1.6T parameter MoE model featuring a 1M token context window and native multimodal support for text, vision, and video at disruptive prices.

Gemini 3 Flash

Google

Gemini 3 Flash is Google's high-speed multimodal model featuring a 1M token context window, elite 90.4% GPQA reasoning, and autonomous browser automation tools.

Frequently Asked Questions

Find answers to common questions about Qwen3.5-397B-A17B