Gemini 3.1 Flash-Lite

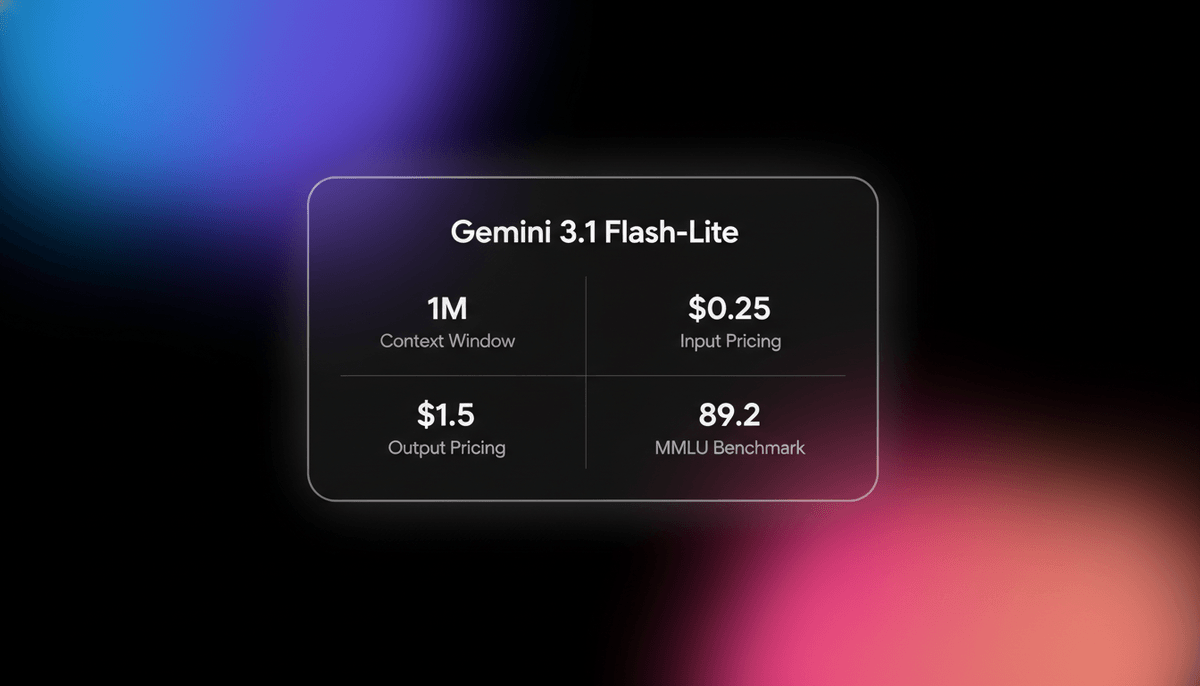

Gemini 3.1 Flash-Lite is Google's fastest, most cost-efficient model. Features 1M context, native multimodality, and 363 tokens/sec speed for scale.

About Gemini 3.1 Flash-Lite

Learn about Gemini 3.1 Flash-Lite's capabilities, features, and how it can help you achieve better results.

Gemini 3.1 Flash-Lite is engineered for high-volume AI applications where processing speed is the primary technical requirement. Unlike larger Pro models, Flash-Lite uses a streamlined architecture that prioritizes throughput, reaching 363 tokens per second. It serves as a specialized tool for developers building real-time voice agents, automated content moderation systems, and large-scale data extraction pipelines that must remain cost-effective under heavy traffic.

Despite its lite designation, the model maintains a 1 million token context window. It can ingest raw audio files, hour-long videos, and hundreds of pages of PDFs in a single request. By introducing Thinking Levels, Google allows users to choose between near-instant responses for simple tasks and a deeper reasoning phase for complex logic. This provides multiple performance profiles within a single API endpoint to balance cost and accuracy.

The model is natively multimodal, which eliminates the need for external tools to transcribe audio or describe images before processing. This native capability improves performance on visual tasks like document question answering and chart analysis. Developers can use the thinking_level parameter to adjust internal reasoning time, effectively scaling the model's effort based on the specific complexity of each query.

Use Cases

Discover the different ways you can use Gemini 3.1 Flash-Lite to achieve great results.

High-Volume Translation

Processing thousands of multilingual chat messages or support tickets in real-time with sub-second latency.

Intelligent Model Routing

Acting as a fast classifier to determine if incoming queries need to be escalated to more expensive models.

Multimodal Content Moderation

Scanning large batches of user-generated images and videos for safety compliance at low cost.

Real-Time UI Prototyping

Generating functional React or Tailwind components from hand-drawn wireframes or verbal descriptions.

Long-Document Summarization

Condensing massive legal archives or technical manuals without losing context across the 1M token window.

Live Audio Transcription

Converting hours of meetings or lecture recordings into structured summaries and action items in one pass.

Strengths

Limitations

API Quick Start

google/gemini-3.1-flash-lite-preview

import { GoogleGenAI } from "@google/generative-ai";

const genAI = new GoogleGenAI(process.env.API_KEY);

const model = genAI.getGenerativeModel({

model: "gemini-3.1-flash-lite-preview",

generationConfig: {

thinkingConfig: { thinking_level: "high" }

}

});

const result = await model.generateContent("Create a weather dashboard UI.");

console.log(result.response.text());Install the SDK and start making API calls in minutes.

Community Feedback

See what the community thinks about Gemini 3.1 Flash-Lite

“The coding capability of 3.1 Flash-Lite is surprisingly good for front-end development; it coded a 360-degree viewer perfectly.”

“Gemini 3.1 Flash-Lite is the model to build always-on multimodal AI Agents. It reads, connects, and consolidates everything.”

“Pricing is a massive shock. A 3.75x jump on output tokens is going to sting if you're on a tight cloud budget.”

“It shifts the burden of complexity from your engineering team's architecture right onto Google's infrastructure.”

“Another price drop for intelligence. High speed, low cost, high intelligence. A great model for agentic routing.”

“The 1M context is still the killer feature here. I can dump entire repo folders and it just works with sub-second TTFT.”

Related Videos

Watch tutorials, reviews, and discussions about Gemini 3.1 Flash-Lite

“It seems like they have been able to squeeze in a lot of intelligence into this model somehow.”

“I would use it for high throughput workloads which are very well defined.”

“The front-end capability of the flashlight is even better than most models that I have actually worked with.”

“It literally created a fully functional viewer in one shot.”

“This model is ideal for those who need speed without sacrificing all the logic.”

“This model is what we would call a workhorse model... specifically designed for high throughput tasks.”

“If you run this on minimal thinking budget, it basically works as a non-reasoning model and it's extremely fast.”

“It did a remarkably good job at the website that we have as an output.”

“The speed-to-cost ratio is the real reason why you would move your production apps here.”

“It handles multimodal inputs natively which is a huge advantage over competitors.”

“Hitting nearly 87% on GPQA Diamond with a model labeled lite disrupts our entire categorization system.”

“Do not use this model as a factual oracle... you have to bring the facts to it.”

“With 3.1 Flash-Lite, you avoid firing three other microservices... that simplicity is worth real money.”

“The 45 percent increase in output speed is felt immediately in the streaming response.”

“You are getting 1M context for pennies, which still feels like magic in production.”

Supercharge your workflow with AI Automation

Automatio combines the power of AI agents, web automation, and smart integrations to help you accomplish more in less time.

Pro Tips

Expert tips to help you get the most out of Gemini 3.1 Flash-Lite and achieve better results.

Set Thinking Levels

Use minimal thinking for classification to reduce costs but switch to high for complex coding tasks.

Enable Grounding

Always use Google Search grounding for tasks requiring factual recall since base factual accuracy is lower.

Upload Raw Files

Avoid pre-processing audio or video into text and instead upload raw files to leverage native multimodality.

Use System Instructions

Strictly enforce JSON schemas using the system_instruction parameter to minimize output correction tokens.

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Related AI Models

Claude Opus 4.5

Anthropic

Claude Opus 4.5 is Anthropic's most powerful frontier model, delivering record-breaking 80.9% SWE-bench performance and advanced autonomous agency for coding.

Grok-4

xAI

Grok-4 by xAI is a frontier model featuring a 2M token context window, real-time X platform integration, and world-record reasoning capabilities.

GLM-5.1

Zhipu (GLM)

GLM-5.1 is Zhipu AI's flagship reasoning model, featuring a 202K context window and an autonomous 8-hour execution loop for complex agentic engineering.

Kimi K2.5

Moonshot

Discover Moonshot AI's Kimi K2.5, a 1T-parameter open-source agentic model featuring native multimodal capabilities, a 262K context window, and SOTA reasoning.

Qwen3.6-Max-Preview

alibaba

Qwen3.6-Max-Preview is Alibaba's flagship MoE model featuring 1M context, a native thinking mode, and SOTA scores in agentic coding and reasoning.

GLM-5

Zhipu (GLM)

GLM-5 is Zhipu AI's 744B parameter open-weight powerhouse, excelling in long-horizon agentic tasks, coding, and factual accuracy with a 200k context window.

GPT-5.1

OpenAI

GPT-5.1 is OpenAI’s advanced reasoning flagship featuring adaptive thinking, native multimodality, and state-of-the-art performance in math and technical...

GPT-5.2

OpenAI

GPT-5.2 is OpenAI's flagship model for professional tasks, featuring a 400K context window, elite coding, and deep multi-step reasoning capabilities.

Frequently Asked Questions

Find answers to common questions about Gemini 3.1 Flash-Lite