Claude Opus 4.5

Claude Opus 4.5 is Anthropic's most powerful frontier model, scoring a record 80.9% on SWE-bench Verified and offering stronger autonomous agency for coding.

About Claude Opus 4.5

Learn about Claude Opus 4.5's capabilities, features, and how it can help you achieve better results.

Claude Opus 4.5 is the flagship model from Anthropic, released in November 2025. It is designed for complex software engineering and high-stakes reasoning. The model scored a record 80.9% on the SWE-bench Verified benchmark, which makes it a strong choice for autonomous debugging and system refactoring. It also introduces a refined persona that emphasizes diplomatic honesty and nuanced helpfulness.

Multimodal and Agentic Optimization

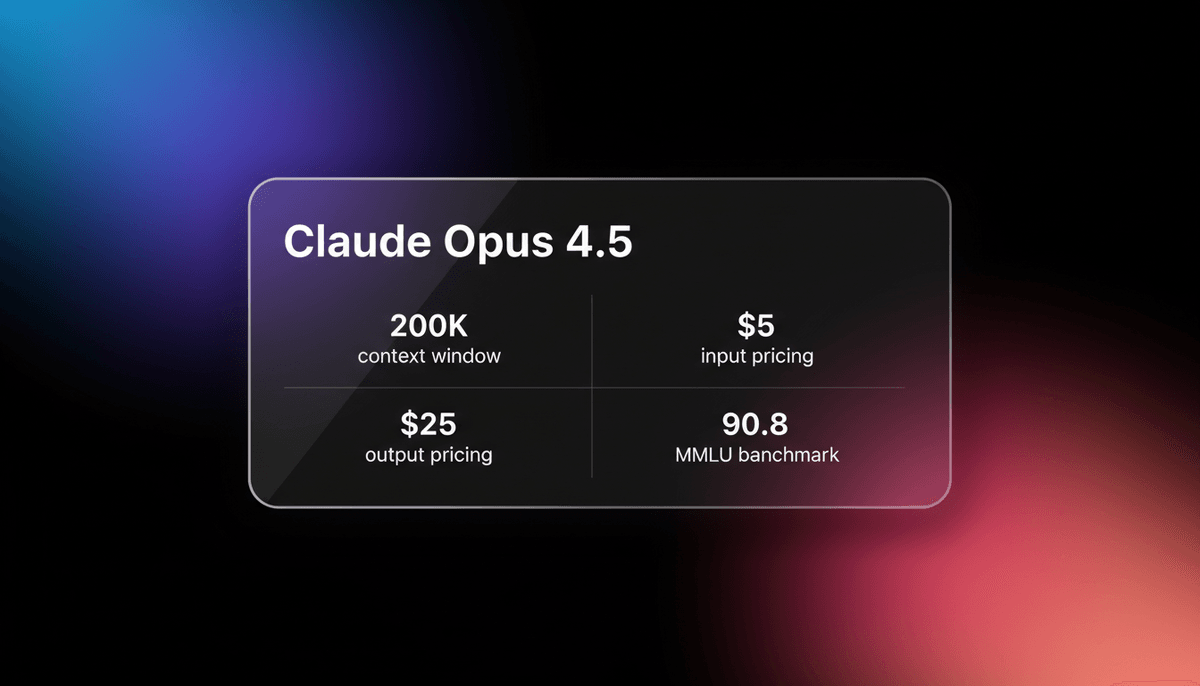

The model supports a 200,000-token context window and a 64,000-token output limit. Developers can use an effort parameter to trade reasoning depth against latency and cost: higher effort for logic-intensive tasks, lower effort for faster, more economical creative drafting. The model is also multimodal and handles architectural diagrams and dense UI layouts well.
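The two limits interact, because the prompt and the requested output have to share the context window. The following is a minimal sketch of a pre-flight check, assuming you already have a token count for the prompt. The `fitsBudget` helper and its field names are hypothetical and not part of any SDK.

```typescript
// Limits as stated in this article for Claude Opus 4.5.
const CONTEXT_WINDOW_TOKENS = 200000;
const MAX_OUTPUT_TOKENS = 64000;

interface BudgetCheck {
  ok: boolean;
  reason?: string;
}

// Hypothetical helper: checks a planned request against both limits.
// Assumes the prompt and the requested output share the context window.
function fitsBudget(promptTokens: number, maxTokens: number): BudgetCheck {
  if (maxTokens > MAX_OUTPUT_TOKENS) {
    return { ok: false, reason: "max_tokens exceeds the output limit" };
  }
  if (promptTokens + maxTokens > CONTEXT_WINDOW_TOKENS) {
    return { ok: false, reason: "prompt plus requested output exceeds the context window" };
  }
  return { ok: true };
}

// 150,000 + 32,000 = 182,000 tokens, which fits in 200,000.
console.log(fitsBudget(150000, 32000).ok);
// 150,000 + 64,000 = 214,000 tokens, which does not fit.
console.log(fitsBudget(150000, 64000).reason);
```

Running a check like this before each call makes it easier to decide when to trim or summarize history rather than finding out from a rejected request.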

Engineering and Tool Use

Optimized for agentic workflows, it navigates terminal environments via Claude Code to perform system-wide audits. Pricing is $5 per million input tokens and $25 per million output tokens, about a third of the earlier flagship price. Its ability to stay coherent across long-horizon tasks makes it a reliable partner for professional engineering teams and complex data analysis.
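To make the pricing concrete, here is a rough cost estimator. It is a sketch that assumes the $5 input and $25 output per million token prices quoted in the video excerpts further down this page. The `estimateCostUsd` helper is hypothetical, and it ignores any caching or batch discounts.

```typescript
// Prices in USD per million tokens, as quoted elsewhere on this page.
const INPUT_USD_PER_MTOK = 5;
const OUTPUT_USD_PER_MTOK = 25;

// Hypothetical helper: estimates the cost of a single request in USD.
function estimateCostUsd(inputTokens: number, outputTokens: number): number {
  const inputCost = (inputTokens / 1000000) * INPUT_USD_PER_MTOK;
  const outputCost = (outputTokens / 1000000) * OUTPUT_USD_PER_MTOK;
  return inputCost + outputCost;
}

// 100,000 input tokens and 4,000 output tokens:
// 0.1 * 5 + 0.004 * 25 = 0.50 + 0.10 = 0.60
console.log(estimateCostUsd(100000, 4000).toFixed(2)); // prints: 0.60
```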

Use Cases

Discover the different ways you can use Claude Opus 4.5 to achieve great results.

Autonomous Software Engineering

Automating end-to-end debugging and system-wide refactoring, backed by an 80.9% score on SWE-bench Verified.

Agentic Research Workflows

Synthesizing vast amounts of technical data into actionable business strategies using the 200k context window.

High-Fidelity UI/UX Vision

Converting complex Figma designs and architectural diagrams into production-ready frontend code with pixel-perfect accuracy.

Multi-Agent Orchestration

Serving as the central brain for teams of sub-agents to manage long-horizon projects across disparate codebases.

Advanced Data Analysis

Automating complex financial modeling and Excel workflows with high precision and reasoning depth.

Literary and Creative Drafting

Producing nuanced prose that adheres to specific writerly tastes and complex human-centric design principles.

API Quick Start

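Install the SDK and start making API calls in minutes.

anthropic/claude-opus-4.5

import Anthropic from '@anthropic-ai/sdk';

const anthropic = new Anthropic({
  apiKey: process.env.ANTHROPIC_API_KEY,
});

// Assumption: effort is a beta option passed as output_config.effort together
// with the 'effort-2025-11-24' beta flag. Verify against the current API reference.
const msg = await anthropic.beta.messages.create({
  model: 'claude-opus-4-5-20251101',
  max_tokens: 4096,
  betas: ['effort-2025-11-24'],
  output_config: { effort: 'high' },
  messages: [{ role: 'user', content: 'Analyze this system architecture for race conditions.' }],
});

// Content blocks are a union type, so narrow to a text block before reading .text.
const first = msg.content[0];
if (first?.type === 'text') console.log(first.text);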

Community Feedback

See what the community thinks about Claude Opus 4.5

“Claude Opus 4.5 feels less like a stateless assistant and more like a persistent teammate. It can trace assumptions across multiple files in a way that feels clearly stronger.”

“Watching your AI agent develop a social media persona that resonates with real people in ways you cannot explain. Infrastructure matters more than prompts.”

“Opus is the best performing model in this aspect. Its discussion is most natural, and it truly follows along with you in discussion.”

“Opus 4.5 hits the most little nuances. It is the only model to successfully include an inline trailer mechanism in the first pass.”

“The 80.9% SWE-bench score is probably real but also kinda misleading. It requires clear environment setup to hit those numbers consistently.”

“SWE-bench Verified: 80.9% (Opus 4.5) vs 71.3% (Claude 3-Opus). This is a massive jump for real-world reliability.”

Related Videos

Watch tutorials, reviews, and discussions about Claude Opus 4.5

“Opus 4.5 hits the most little nuances”

“It was the only model to successfully include an inline trailer mechanism in the first pass”

“An agent-driven code evaluation confirms this subjective feeling, scoring Opus at 7/10 for feature completeness”

“The reasoning is far more logical than previous versions when handling edge cases”

“It maintains codebase consistency over 30 minute sessions”

“The price is now three times cheaper. It is only going to be $5 for a million input tokens”

“Input is $5 and output is $25 for a million tokens”

“Opus 4.5 scored higher than any human candidate has ever scored on Anthropic's own take-home exam”

“This is the first model to break the 80 percent barrier on SWE-bench”

“It handles autonomous 30-minute coding sessions without human intervention”

“Think of Claude Opus 4.5 as a persuasion layer and an absolute agentic monster”

“It is an absolute agentic and harness coding monster”

“Engineers end up preferring working with Claude Opus 4.5 because they get those tight feedback loops”

“The reasoning effort parameter is the standout feature for developers”

“It feels more like a collaborator than a tool in long-form discussions”

Pro Tips

Expert tips to help you get the most out of Claude Opus 4.5 and achieve better results.

Toggle Reasoning Effort

Set the effort parameter to high for complex logic or coding tasks and to medium for standard creative writing.
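One way to apply this tip consistently is to pick the effort level from the kind of task rather than setting it by hand on each call. This is a minimal sketch: the task categories and the `effortFor` helper are hypothetical, and where the value goes in the request depends on your SDK version.

```typescript
type Effort = "medium" | "high";
type TaskKind = "coding" | "complex-logic" | "creative-writing";

// Hypothetical mapping that follows the tip above.
const EFFORT_BY_TASK: Record<TaskKind, Effort> = {
  "coding": "high",
  "complex-logic": "high",
  "creative-writing": "medium",
};

function effortFor(task: TaskKind): Effort {
  return EFFORT_BY_TASK[task];
}

console.log(effortFor("coding"));           // prints: high
console.log(effortFor("creative-writing")); // prints: medium
```

Keeping the mapping in one table means a new task category cannot be added without also choosing an effort level for it, since the `Record` type requires every key.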

Vision-Native Design

Upload high-resolution screenshots of UI bugs, because the model is tuned to identify visual discrepancies that text descriptions miss.
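A screenshot is sent as an image content block alongside the text question. The sketch below builds such a user message from an already base64-encoded PNG. The `screenshotBugReport` helper and the local interface names are hypothetical; the block shape follows the Messages API image format.

```typescript
interface ImageBlock {
  type: "image";
  source: { type: "base64"; media_type: "image/png"; data: string };
}

interface TextBlock {
  type: "text";
  text: string;
}

interface UserMessage {
  role: "user";
  content: Array<ImageBlock | TextBlock>;
}

// Hypothetical helper: pairs a base64-encoded PNG screenshot with a question.
// The image goes first so the question can refer back to it.
function screenshotBugReport(base64Png: string, question: string): UserMessage {
  return {
    role: "user",
    content: [
      { type: "image", source: { type: "base64", media_type: "image/png", data: base64Png } },
      { type: "text", text: question },
    ],
  };
}

// "iVBORw0KGgo=" is a placeholder, not a complete image.
const message = screenshotBugReport("iVBORw0KGgo=", "Why does the submit button overlap the footer?");
console.log(message.content.length); // prints: 2
```

The returned object can be passed as one entry in the `messages` array of a request.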

Structured System Prompts

Define clear agentic roles in your system prompts and pair them with an appropriate effort level to prevent the model from overthinking simpler procedural tasks.

Context Compaction

Summarize history in long-running sessions to keep the 200k context window focused on the most relevant information.
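Compaction can be sketched as replacing everything except the most recent turns with a single summary message. This is an illustration under stated assumptions, not a built-in feature: `compactHistory` is a hypothetical helper, and `summarize` stands in for whatever produces the summary, for example a separate model call.

```typescript
interface ChatMessage {
  role: "user" | "assistant";
  content: string;
}

// Hypothetical helper: keeps the last `keepRecent` messages verbatim and
// folds all earlier ones into one summary message at the front.
function compactHistory(
  history: ChatMessage[],
  keepRecent: number,
  summarize: (older: ChatMessage[]) => string,
): ChatMessage[] {
  if (history.length <= keepRecent) {
    return history; // nothing to compact yet
  }
  const splitAt = history.length - keepRecent;
  const older = history.slice(0, splitAt);
  const recent = history.slice(splitAt);
  const summary: ChatMessage = {
    role: "user",
    content: "Summary of earlier conversation: " + summarize(older),
  };
  return [summary, ...recent];
}

// Stub summarizer for illustration; a real one would condense the content.
const stub = (older: ChatMessage[]) => older.length + " earlier messages omitted";

const history: ChatMessage[] = [
  { role: "user", content: "Audit module A." },
  { role: "assistant", content: "Module A has two issues." },
  { role: "user", content: "Now audit module B." },
  { role: "assistant", content: "Module B looks clean." },
];

console.log(compactHistory(history, 2, stub).length); // prints: 3
```

The summary is emitted as a user message so the compacted history still starts with a user turn. Choosing `keepRecent` is a trade-off between preserving exact wording and freeing up context.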

Related AI Models

Gemini 3.1 Flash-Lite

Google

Gemini 3.1 Flash-Lite is Google's fastest, most cost-efficient model. Features 1M context, native multimodality, and 363 tokens/sec speed for scale.

Grok-4

xAI

Grok-4 by xAI is a frontier model featuring a 2M token context window, real-time X platform integration, and world-record reasoning capabilities.

Kimi K2.5

Moonshot

Discover Moonshot AI's Kimi K2.5, a 1T-parameter open-source agentic model featuring native multimodal capabilities, a 262K context window, and SOTA reasoning.

GLM-5.1

Zhipu (GLM)

GLM-5.1 is Zhipu AI's flagship reasoning model, featuring a 202K context window and an autonomous 8-hour execution loop for complex agentic engineering.

Qwen3.6-Max-Preview

Alibaba

Qwen3.6-Max-Preview is Alibaba's flagship MoE model featuring 1M context, a native thinking mode, and SOTA scores in agentic coding and reasoning.

GLM-5

Zhipu (GLM)

GLM-5 is Zhipu AI's 744B parameter open-weight powerhouse, excelling in long-horizon agentic tasks, coding, and factual accuracy with a 200k context window.

GPT-5.1

OpenAI

GPT-5.1 is OpenAI’s advanced reasoning flagship featuring adaptive thinking, native multimodality, and state-of-the-art performance in math and technical...

GPT-5.2

OpenAI

GPT-5.2 is OpenAI's flagship model for professional tasks, featuring a 400K context window, elite coding, and deep multi-step reasoning capabilities.
