Qwen3.6-Max-Preview

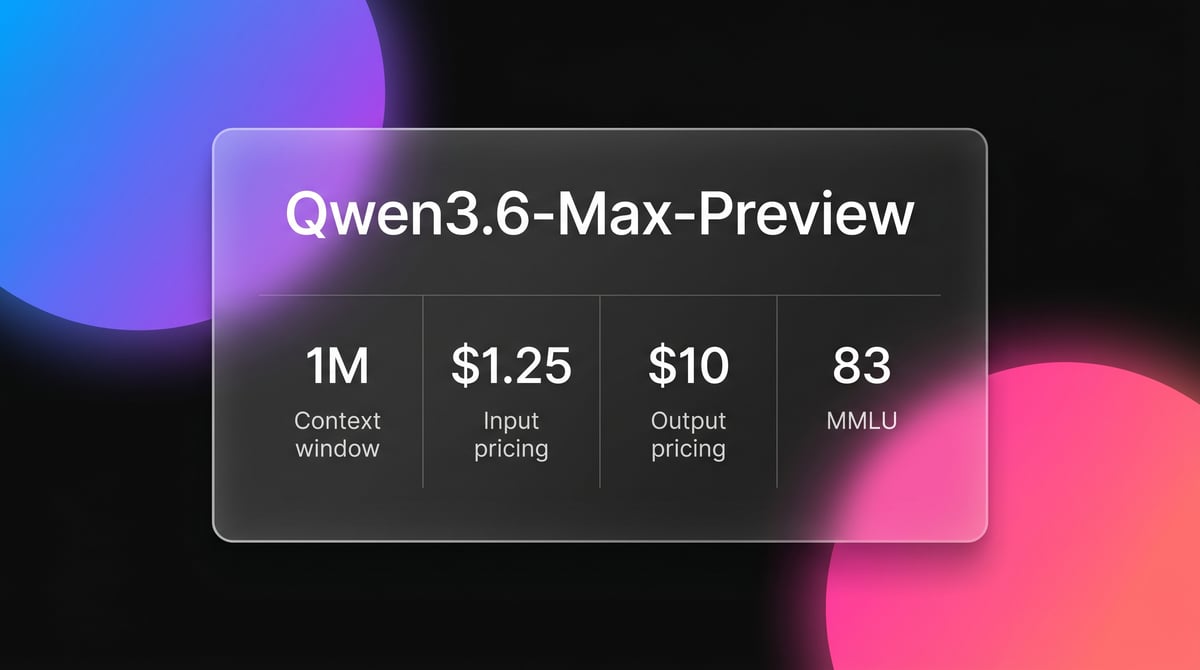

Qwen3.6-Max-Preview is Alibaba's flagship MoE model featuring 1M context, a native thinking mode, and SOTA scores in agentic coding and reasoning.

About Qwen3.6-Max-Preview

Learn about Qwen3.6-Max-Preview's capabilities, features, and how it can help you achieve better results.

Qwen3.6-Max-Preview is Alibaba's flagship proprietary large language model and the next step in its high-performance AI series. It uses a sparse Mixture-of-Experts (MoE) architecture, which activates only a subset of its parameters for each token, so the model aims for the reasoning depth of a trillion-parameter system at a lower inference cost. It is optimized for agentic coding, world knowledge, and complex instruction following.

The model's standout feature is its native Thinking Mode, which allows the system to generate a visible internal chain-of-thought before delivering a final response. This transparency is particularly valuable for developers building autonomous agents, as it provides a clear window into logical planning and error-correction steps. Combined with a massive 1-million-token context window, the model can ingest entire project repositories or extensive documentation libraries in a single pass.

Hosted on Alibaba Cloud Model Studio, Qwen3.6-Max-Preview supports industry-standard protocols and is compatible with OpenAI-style API specifications. It is designed to be the primary choice for enterprises requiring frontier-level AI capabilities for multimodal data analysis and robust agentic workflows, offering a high-performance alternative to Western closed-source models.

Use Cases

Discover the different ways you can use Qwen3.6-Max-Preview to achieve great results.

Autonomous Software Engineering

Deploy the model as a coding agent capable of navigating entire codebases, planning architectural changes, and fixing bugs across multiple files.

Large-Scale Technical Analysis

Utilize the 1M token context window to ingest complete documentation sets or legal frameworks for deep-dive analysis without RAG limitations.

Complex Reasoning and Planning

Leverage the native Thinking Mode to solve high-level mathematical problems where a multi-step internal plan is required for accuracy.

Multimodal Content Understanding

Analyze both static imagery and complex video sequences to extract data and summarize dynamic visual events.

Interactive Terminal Operations

Build tools that allow the AI to interact directly with shells and CLI environments, benefiting from its optimized Terminal-Bench performance.

Enterprise Agentic Workflows

Integrate the model into complex business pipelines where high instructional reliability and sophisticated tool-calling are required for automation.
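Tool-calling in such a pipeline can be sketched as follows, assuming the compatible-mode endpoint accepts the OpenAI-style `tools` field and returns OpenAI-style `tool_calls`. The `get_order_status` tool, its stubbed data, and the `runToolCall` helper are hypothetical names used only for illustration.

```javascript
// Tool schema in the OpenAI-style `tools` format.
// `get_order_status` is a hypothetical tool, not part of any real API.
const tools = [
  {
    type: 'function',
    function: {
      name: 'get_order_status',
      description: 'Look up the fulfilment status of an order by its ID.',
      parameters: {
        type: 'object',
        properties: { orderId: { type: 'string' } },
        required: ['orderId'],
      },
    },
  },
];

// Local implementations keyed by tool name (stubbed data for the sketch).
const handlers = new Map([
  ['get_order_status', ({ orderId }) => ({ orderId, status: 'shipped' })],
]);

// Turn one tool call from an assistant message into the `tool` message
// that would be appended to `messages` on the next request.
function runToolCall(toolCall) {
  const { name, arguments: rawArgs } = toolCall.function;
  const handler = handlers.get(name);
  let result;
  if (!handler) {
    result = { error: `unknown tool: ${name}` };
  } else {
    try {
      // Arguments arrive as a JSON string and may be malformed.
      result = handler(JSON.parse(rawArgs));
    } catch (err) {
      result = { error: `bad arguments: ${err.message}` };
    }
  }
  return { role: 'tool', tool_call_id: toolCall.id, content: JSON.stringify(result) };
}

// Usage with a mock tool call shaped like an OpenAI-style response entry.
const reply = runToolCall({
  id: 'call_1',
  type: 'function',
  function: { name: 'get_order_status', arguments: '{"orderId":"A-42"}' },
});
console.log(reply.content); // {"orderId":"A-42","status":"shipped"}
```

Returning errors as tool output, rather than throwing, gives the model a chance to correct its own call on the next turn.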

API Quick Start

Install the SDK and start making API calls in minutes.

alibaba/qwen3.6-max-preview

```javascript
import OpenAI from 'openai';

// The Node SDK option is `baseURL` (camelCase); `base_url` is not recognized.
const client = new OpenAI({
  apiKey: process.env.DASHSCOPE_API_KEY,
  baseURL: 'https://dashscope-intl.aliyuncs.com/compatible-mode/v1',
});

async function main() {
  const completion = await client.chat.completions.create({
    model: 'qwen3.6-max-preview',
    messages: [{ role: 'user', content: 'Design a system architecture for a real-time AI agent.' }],
    // In the Node SDK, non-standard fields go directly in the request body;
    // `extra_body` is a Python SDK convention.
    enable_thinking: true,
    stream: true,
  });

  // Print the answer text as it streams in.
  for await (const chunk of completion) {
    process.stdout.write(chunk.choices[0]?.delta?.content || '');
  }
}

main();
```
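The quick start prints only `delta.content`, so reasoning text produced with thinking enabled would not be shown. The sketch below separates the two streams. It assumes that reasoning arrives in a `delta.reasoning_content` field, which is not stated in this page and should be checked against the Model Studio documentation. `splitDelta` and `collectStream` are illustrative helper names, and the chunks are mocks, so no API call is made.

```javascript
// Split one streamed chunk into reasoning text and answer text.
// ASSUMPTION: reasoning is delivered in `delta.reasoning_content`.
function splitDelta(chunk) {
  const delta = chunk?.choices?.[0]?.delta ?? {};
  return {
    reasoning: delta.reasoning_content ?? '',
    answer: delta.content ?? '',
  };
}

// Accumulate a whole stream; accepts any sync or async iterable of chunks.
async function collectStream(stream) {
  let reasoning = '';
  let answer = '';
  for await (const chunk of stream) {
    const part = splitDelta(chunk);
    reasoning += part.reasoning;
    answer += part.answer;
  }
  return { reasoning, answer };
}

// Mock chunks shaped like OpenAI-style streaming deltas.
const mockChunks = [
  { choices: [{ delta: { reasoning_content: 'Plan: ' } }] },
  { choices: [{ delta: { reasoning_content: 'list components.' } }] },
  { choices: [{ delta: { content: 'Use a queue ' } }] },
  { choices: [{ delta: { content: 'and workers.' } }] },
  { choices: [] }, // a trailing chunk may carry no choices at all
];

collectStream(mockChunks).then(({ reasoning, answer }) => {
  console.log('[thinking]', reasoning);
  console.log('[answer]', answer);
});
```

In a real call, the `completion` object returned by `client.chat.completions.create` with `stream: true` could be passed to `collectStream` in place of `mockChunks`.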

Community Feedback

See what the community thinks about Qwen3.6-Max-Preview

“The kind of performance you'd expect from a model running on a massive server farm is now sitting on your desktop.”

“Qwen3.6-Max-Preview just beat Claude Opus 4.5 on SWE-Bench Pro. China is catching up fast.”

“At $1.25 per million tokens, Qwen is significantly cheaper than Claude for large scale data ingestion.”

“The fact that Thinking Mode is baked in as the default state is a meaningful design choice for agentic reliability.”

“Qwen has launched Qwen 3.6 Max Preview as a new top-end proprietary flagship model.”

“It shows improved agentic coding and better real-world agent reliability compared to the Plus model.”

Related Videos

Watch tutorials, reviews, and discussions about Qwen3.6-Max-Preview

“The model shows a strong jump in coding-agent benchmarks like SkillsBench and Terminal-Bench 2.0.”

“Qwen is clearly trying to compete seriously at the high end against models like Claude 4.5 Opus.”

“This model represents a meaningful improvement in world knowledge and instruction following.”

“The performance jump on SWE-bench is what really sets this apart from the Plus variant.”

“The benchmark story is really about positioning the hosted Max Preview as distinct from the open-weight family.”

“We use Qwen Code pages and repo surfaces to judge the ecosystem depth beyond just the model weights.”

“The thinking mode is surprisingly fast compared to o1-style models from last year.”

“This is clearly designed for enterprise developers who need a reliable API for agentic tasks.”

“The multimodal vision performance is catching up to Gemini 2 in some document analysis tests.”

“This video introduces the Qwen3.6-Max-Preview, an early look at the next flagship model from Qwen.”

“The 1M context window is much more stable than what we saw in early Qwen 2 versions.”

“If you are doing a lot of coding, Qwen 3.6 Max is currently the benchmark leader.”

“Pricing remains very competitive even for their flagship closed-source model.”

Pro Tips

Expert tips to help you get the most out of Qwen3.6-Max-Preview and achieve better results.

Enable Internal Reasoning

Set the 'enable_thinking' parameter to true in your API request to view the model's internal logic for debugging complex reasoning.

Preserve Long-Horizon Logic

Use the 'preserve_thinking' feature for multi-turn conversations to ensure the model maintains logical consistency across a session.
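A multi-turn request might be assembled as in the sketch below. It assumes that `preserve_thinking` is a top-level boolean request field, passed the same way as `enable_thinking`. This page names the feature but not how it is set, so treat the field placement as a guess to verify. `buildTurnRequest` is a hypothetical helper.

```javascript
// Build the request body for the next turn of a conversation.
// ASSUMPTION: `preserve_thinking` is a top-level boolean, like `enable_thinking`.
function buildTurnRequest(history, userText, { preserveThinking = true } = {}) {
  return {
    model: 'qwen3.6-max-preview',
    // Copy the history so the caller's array is not mutated.
    messages: [...history, { role: 'user', content: userText }],
    enable_thinking: true,
    preserve_thinking: preserveThinking,
  };
}

// Usage: prior turns plus one new user message.
const history = [
  { role: 'user', content: 'Plan a migration from REST to gRPC.' },
  { role: 'assistant', content: 'Step 1: inventory the existing endpoints.' },
];
const body = buildTurnRequest(history, 'Now detail step 2.');
console.log(body.messages.length); // 3
console.log(body.preserve_thinking); // true
```

The resulting object could be passed to `client.chat.completions.create(body)` with the client from the quick start.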

Feed Entire Libraries

Take advantage of the 1M context window by providing full source materials instead of chunked data for better cross-file understanding.
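One way to pack whole files into a single prompt while staying under a token budget is sketched here. The ratio of four characters per token is a rough assumption, not the model's tokenizer, and `packFiles` is an illustrative helper name.

```javascript
// Rough heuristic: about 4 characters per token. This is an assumption;
// use a real tokenizer when the budget is tight.
const CHARS_PER_TOKEN = 4;
const estimateTokens = (text) => Math.ceil(text.length / CHARS_PER_TOKEN);

// Concatenate whole files, each under a path header, skipping any file
// that would push the running total over the budget.
function packFiles(files, maxTokens) {
  const included = [];
  const skipped = [];
  let prompt = '';
  let used = 0;
  for (const { path, content } of files) {
    const section = `=== ${path} ===\n${content}\n\n`;
    const cost = estimateTokens(section);
    if (used + cost > maxTokens) {
      skipped.push(path);
      continue;
    }
    prompt += section;
    used += cost;
    included.push(path);
  }
  return { prompt, used, included, skipped };
}

// Usage with in-memory files and a deliberately small budget.
const files = [
  { path: 'src/a.js', content: 'export const a = 1;' },
  { path: 'src/big.js', content: 'x'.repeat(400) },
  { path: 'src/b.js', content: 'export const b = 2;' },
];
const packed = packFiles(files, 50);
console.log(packed.included); // [ 'src/a.js', 'src/b.js' ]
console.log(packed.skipped);  // [ 'src/big.js' ]
```

Keeping files whole and labeled by path, instead of splitting them into chunks, leaves cross-file references intact for the model. For a 1M-token window the budget would be set somewhat below the limit to leave room for the reply.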

Use Compatible Endpoints

For global applications, use the Singapore or US Virginia endpoints in Alibaba Cloud to minimize regional latency for international users.
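Region selection can be kept in one place, as in the sketch below. The only URL hard-coded is the `dashscope-intl` one from the quick start, assumed here to be the Singapore endpoint. Other regions are read from hypothetical `DASHSCOPE_BASE_URL_<REGION>` environment variables, so that no URL is guessed. `resolveBaseUrl` is an illustrative name.

```javascript
// Known base URLs. Only the quick-start URL is listed; it is assumed to be
// the Singapore endpoint.
const KNOWN_BASE_URLS = new Map([
  ['singapore', 'https://dashscope-intl.aliyuncs.com/compatible-mode/v1'],
]);

// Resolve a base URL for a region, falling back to an environment variable
// such as DASHSCOPE_BASE_URL_US_VIRGINIA (hypothetical naming scheme).
function resolveBaseUrl(region, env = process.env) {
  const known = KNOWN_BASE_URLS.get(region);
  if (known) return known;
  const key = `DASHSCOPE_BASE_URL_${region.toUpperCase().replace(/-/g, '_')}`;
  const fromEnv = env[key];
  if (!fromEnv) {
    throw new Error(`No base URL configured for region "${region}" (set ${key})`);
  }
  return fromEnv;
}

console.log(resolveBaseUrl('singapore'));
```

The returned value would be passed as `baseURL` when constructing the client, so switching regions does not require touching request code.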

Related AI Models

GLM-5

Zhipu (GLM)

GLM-5 is Zhipu AI's 744B parameter open-weight powerhouse, excelling in long-horizon agentic tasks, coding, and factual accuracy with a 200k context window.

GLM-5.1

Zhipu (GLM)

GLM-5.1 is Zhipu AI's flagship reasoning model, featuring a 202K context window and an autonomous 8-hour execution loop for complex agentic engineering.

GPT-5.2

OpenAI

GPT-5.2 is OpenAI's flagship model for professional tasks, featuring a 400K context window, elite coding, and deep multi-step reasoning capabilities.

Gemini 3.1 Flash-Lite

Google

Gemini 3.1 Flash-Lite is Google's fastest, most cost-efficient model. Features 1M context, native multimodality, and 363 tokens/sec speed for scale.

Claude Opus 4.5

Anthropic

Claude Opus 4.5 is Anthropic's most powerful frontier model, delivering record-breaking 80.9% SWE-bench performance and advanced autonomous agency for coding.

Grok-4

xAI

Grok-4 by xAI is a frontier model featuring a 2M token context window, real-time X platform integration, and world-record reasoning capabilities.

Kimi K2 Thinking

Moonshot

Kimi K2 Thinking is Moonshot AI's trillion-parameter reasoning model. It outperforms GPT-5 on HLE and supports 300 sequential tool calls autonomously for...

Kimi K2.5

Moonshot

Discover Moonshot AI's Kimi K2.5, a 1T-parameter open-source agentic model featuring native multimodal capabilities, a 262K context window, and SOTA reasoning.

Frequently Asked Questions

Find answers to common questions about Qwen3.6-Max-Preview