GPT-5.2

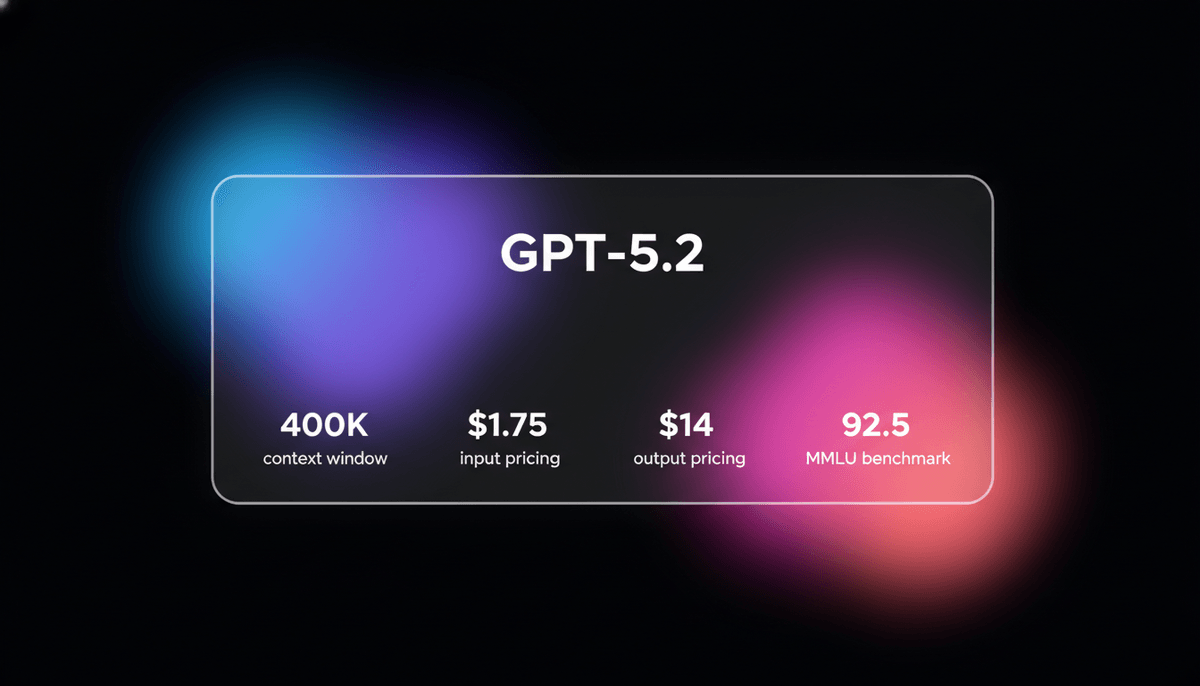

GPT-5.2 is OpenAI's flagship model for professional tasks, featuring a 400K context window, elite coding, and deep multi-step reasoning capabilities.

About GPT-5.2

Learn about GPT-5.2's capabilities, features, and how it can help you achieve better results.

GPT-5.2 is OpenAI’s flagship reasoning model designed for high-stakes professional knowledge work and autonomous engineering. Released on December 11, 2025, it marks a significant evolution from the GPT-4 and GPT-o1 series by integrating a dedicated Thinking mode with effort controls (Medium, High, Extra High). This allows the model to pause and verify multi-step logic before generating a response.

With a massive 400K context window and nearly 100% recall, it is engineered for senior-level code reviews, complex refactoring, and scientific research. The model architecture is built to support agentic workflows, featuring native tool-calling and multimodal vision that can process intricate technical diagrams and codebases simultaneously.

While it excels in logical precision and engineering benchmarks, hitting a 100% score on AIME 2025, it adopts a more formal, machine-like tone compared to competitors like Claude. It is currently priced at $1.75 per million input tokens and $14.00 per million output tokens, making it a cost-effective alternative for deep reasoning tasks that previously required high-compute human oversight.

Use Cases

Discover the different ways you can use GPT-5.2 to achieve great results.

Complex Engineering Refactors

Performing deep refactoring on performance-critical codebases while maintaining strict type invariants and architectural consistency.

Autonomous Terminal Tasks

Executing multi-step CLI workflows and managing complex cloud deployments through high performance on Terminal-Bench environments.

PhD-Level Knowledge Synthesis

Analyzing hundreds of technical sources and academic papers simultaneously to create comprehensive research reports on niche scientific topics.

Concurrency Bug Resolution

Identifying and fixing subtle race conditions or memory leaks that require high-level logical inference over long code segments.

Mechanical Code Processing

Handling large-scale, repetitive coding migrations across entire repositories without the laziness often observed in general-purpose LLMs.

Senior Technical Review

Acting as a virtual senior engineer to review design plans and identify edge cases in logic for production systems.

Strengths

Limitations

API Quick Start

openai/gpt-5.2

import OpenAI from 'openai';

const openai = new OpenAI();

async function solveCodeProblem() {

const response = await openai.chat.completions.create({

model: 'gpt-5.2',

messages: [{ role: 'user', content: 'Debug this race condition in my Rust service.' }],

reasoning_effort: 'high',

temperature: 0,

});

console.log(response.choices[0].message.content);

}

solveCodeProblem();Install the SDK and start making API calls in minutes.

Community Feedback

See what the community thinks about GPT-5.2

“GPT 5.2 in Codex is a very huge improvement, it's more willing to handle those mechanical tasks that would normally make models lazy.”

“The increased deliberation and time spent fact-checking its output is to be commended... the reliability is much improved.”

“The model powering deep research showcased a human-like approach by effectively seeking out specialized information when necessary.”

“OpenAI's focus on structured 'user care' feels like a corporate mask for a cold core compared to the natural discussions in Claude.”

“Finally a model that doesn't get lazy halfway through a 500-line refactor.”

“The reasoning effort parameter is the real MVP for complex logic problems.”

Related Videos

Watch tutorials, reviews, and discussions about GPT-5.2

“This is actually insane. Look at this one shot.”

“The design I'm not super impressed with with GPT 5.2... it did much worse than Gemini 3.”

“The context recall is nearly perfect across the whole 400k range.”

“It feels much more like a reasoning engine than a chatbot.”

“The latency is the only real dealbreaker for some real-time apps.”

“GPT 5.2 can now create fully formatted spreadsheets and slide decks directly inside chat GPT.”

“It's like the model finally grew up and started taking its job seriously.”

“Use the high reasoning setting only for logic-heavy tasks.”

“The hallucinations are down significantly compared to the 4o series.”

“Agentic workflows are finally viable without constant babysitting.”

“GPT 5.2 is actually 40% more expensive than 5.1, but it's still significantly cheaper than Opus.”

“GPT 5.2 took 11 minutes and 20 seconds [to build the app]. So double the amount of time [compared to Opus].”

“The output quality is much higher when you allow the thinking mode to run.”

“It handled the multi-file refactor without losing the type definitions.”

“If you need raw speed, this isn't the model for you.”

Supercharge your workflow with AI Automation

Automatio combines the power of AI agents, web automation, and smart integrations to help you accomplish more in less time.

Pro Tips

Expert tips to help you get the most out of GPT-5.2 and achieve better results.

Leverage Thinking Effort

Use the reasoning_effort parameter (medium, high, xhigh) to match the model's deliberation time to the complexity of the task.

Enable Codex for Persistence

When working on large repos, use the dedicated Codex environment to maintain active processing sessions for up to 150 minutes.

Spoon-feed Context

Provide rich background documentation in system prompts as the model performs best when interviewed about the context it needs.

Iterate on Requirements

Explicitly instruct the model to perform verification checks against the current codebase to ensure requirements are validated.

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Related AI Models

Qwen3.6-Max-Preview

alibaba

Qwen3.6-Max-Preview is Alibaba's flagship MoE model featuring 1M context, a native thinking mode, and SOTA scores in agentic coding and reasoning.

GLM-5

Zhipu (GLM)

GLM-5 is Zhipu AI's 744B parameter open-weight powerhouse, excelling in long-horizon agentic tasks, coding, and factual accuracy with a 200k context window.

GLM-5.1

Zhipu (GLM)

GLM-5.1 is Zhipu AI's flagship reasoning model, featuring a 202K context window and an autonomous 8-hour execution loop for complex agentic engineering.

Gemini 3.1 Flash-Lite

Gemini 3.1 Flash-Lite is Google's fastest, most cost-efficient model. Features 1M context, native multimodality, and 363 tokens/sec speed for scale.

Kimi K2 Thinking

Moonshot

Kimi K2 Thinking is Moonshot AI's trillion-parameter reasoning model. It outperforms GPT-5 on HLE and supports 300 sequential tool calls autonomously for...

Claude Opus 4.5

Anthropic

Claude Opus 4.5 is Anthropic's most powerful frontier model, delivering record-breaking 80.9% SWE-bench performance and advanced autonomous agency for coding.

GPT-5.4

OpenAI

GPT-5.4 is OpenAI's frontier model featuring a 1.05M context window and Extreme Reasoning. It excels at autonomous UI interaction and long-form data analysis.

Qwen3.5-Omni

alibaba

Qwen3.5-Omni is a natively omnimodal AI by Alibaba Cloud, offering seamless audio-visual reasoning, real-time voice chat, and 256k context for low-latency apps.

Frequently Asked Questions

Find answers to common questions about GPT-5.2