Qwen3.5-Omni

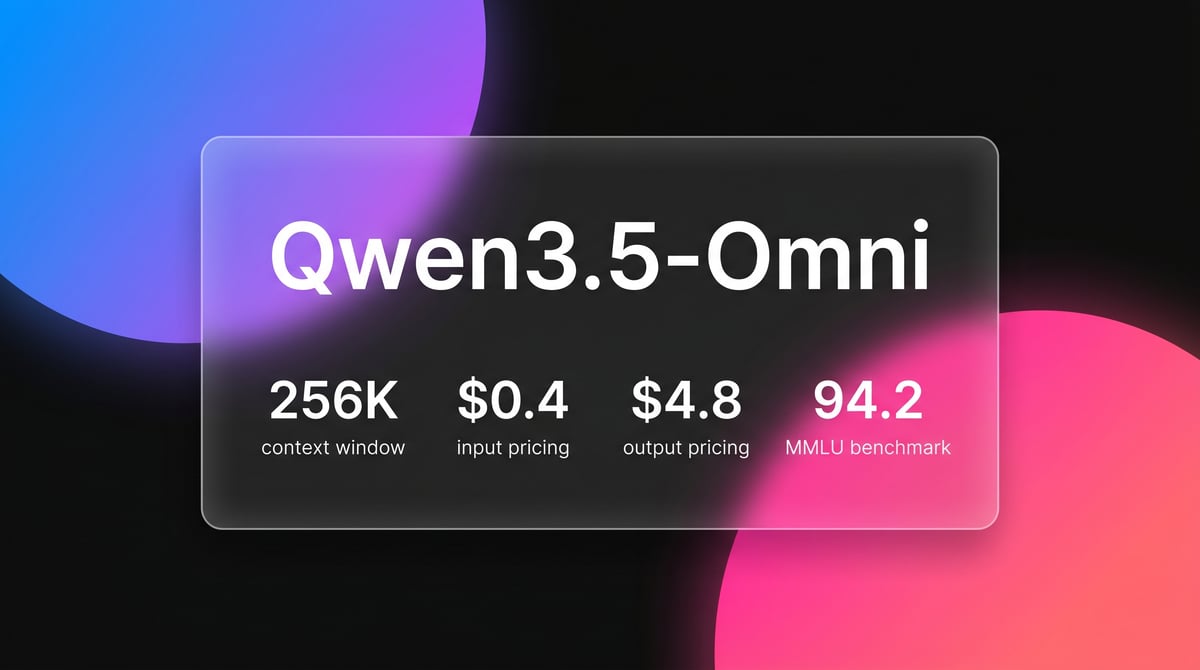

Qwen3.5-Omni is a natively omnimodal AI by Alibaba Cloud, offering seamless audio-visual reasoning, real-time voice chat, and 256k context for low-latency apps.

About Qwen3.5-Omni

Unified Omnimodal Architecture

Qwen3.5-Omni is a natively omnimodal model developed by Alibaba Cloud, built on a unified architecture that processes text, image, audio, and video inputs together. Unlike previous models that relied on separate encoders, Qwen3.5-Omni uses a Thinker-Talker architecture. The Thinker component performs multimodal reasoning across interleaved signals, while the Talker component generates high-quality, low-latency streaming speech. This design lets the model accept long inputs, including up to 10 hours of audio or nearly seven minutes of 720p video in a single prompt.
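As a rough sanity check on these limits, the arithmetic below estimates how many tokens per second of media the context window could hold. It is only a back-of-the-envelope sketch: it assumes "256k" means 256 × 1024 tokens, that "nearly seven minutes" is about 400 seconds, and that the whole window is spent on a single modality. The function name is illustrative and not part of any SDK.

```javascript
// Assumption: "256k" is 256 * 1024 = 262,144 tokens, all spent on one modality.
const CONTEXT_TOKENS = 256 * 1024;

// Upper bound on tokens available per second of media
// if that media alone fills the whole context window.
function maxTokensPerSecond(durationSeconds, contextTokens = CONTEXT_TOKENS) {
  if (!(durationSeconds > 0)) {
    throw new RangeError('durationSeconds must be positive');
  }
  return contextTokens / durationSeconds;
}

const audioBudget = maxTokensPerSecond(10 * 60 * 60); // 10 hours of audio
const videoBudget = maxTokensPerSecond(400);          // about 400 s of 720p video

console.log(audioBudget.toFixed(2)); // 7.28
console.log(videoBudget.toFixed(2)); // 655.36
```

Under these assumptions, video consumes roughly 90 times more context per second than audio, which is consistent with the large gap between the 10-hour audio limit and the seven-minute video limit.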

Advanced Synchronization and Performance

A key technical feature of this model is the Adaptive Rate Interleave Alignment (ARIA) system, which synchronizes text and speech tokens to produce natural-sounding voice responses. The model supports real-time semantic interruption, allowing users to cut off the AI mid-response during a conversation. It is optimized for both enterprise-grade multimodal analysis and consumer-facing real-time voice assistants, with vision and audio performance described as matching or exceeding that of proprietary flagship models.

Specialized for Low-Latency Interaction

The model's architecture is tuned for real-time applications where latency is critical. By combining a Mixture-of-Experts (MoE) design with Gated Delta Networks, the model keeps computation efficient. This efficiency enables real-time audio interaction while managing a 256k token context window, making the model suitable for long-form content analysis such as meeting transcripts and cinematic video indexing.
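The efficiency argument for a Mixture-of-Experts layer can be illustrated with a generic top-k gate: only a few experts run per token, so compute grows with k rather than with the total expert count. This is a minimal sketch of the standard technique, not Qwen3.5-Omni's actual router. The function names, the toy experts, and the choice of k are all hypothetical, and the Gated Delta Networks component is not modeled.

```javascript
// Numerically stable softmax over an array of router logits.
function softmax(logits) {
  const max = Math.max(...logits);
  const exps = logits.map((x) => Math.exp(x - max));
  const sum = exps.reduce((a, b) => a + b, 0);
  return exps.map((e) => e / sum);
}

// Select the k highest-probability experts and renormalize
// their weights so that they sum to 1.
function routeTopK(routerLogits, k) {
  if (!Number.isInteger(k) || k < 1 || k > routerLogits.length) {
    throw new RangeError('k must be an integer between 1 and the expert count');
  }
  const ranked = softmax(routerLogits)
    .map((weight, expert) => ({ expert, weight }))
    .sort((a, b) => b.weight - a.weight)
    .slice(0, k);
  const total = ranked.reduce((s, r) => s + r.weight, 0);
  return ranked.map((r) => ({ expert: r.expert, weight: r.weight / total }));
}

// Run only the selected experts and mix their outputs by gate weight.
// `experts` is an array of functions standing in for expert sub-networks.
function moeForward(x, experts, routerLogits, k) {
  return routeTopK(routerLogits, k).reduce(
    (acc, { expert, weight }) => acc + weight * experts[expert](x),
    0
  );
}
```

With four toy experts and k = 2, `moeForward` evaluates only two of the four functions per input, which is where the compute saving comes from.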

Use Cases

Real-time Voice Assistants

The model powers interactive AI avatars that hold natural voice conversations, with support for semantic interruption.

Cinematic Video Captioning

It generates screenplay-level descriptions and timestamped annotations for high-definition long-form video content.

Audio-Visual Live Coding

Developers debug code by sharing their screen and explaining the logic aloud to the model in real time.

Enterprise Audio Archiving

The system processes up to 10 hours of meeting recordings or podcasts to extract insights in one pass.

Multilingual Translation Services

It provides end-to-end speech-to-speech translation across 113 languages and various regional Chinese dialects.

Content Moderation

The model audits video and audio streams for safety by identifying visual and verbal prohibited content simultaneously.

API Quick Start

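Install the SDK and start making API calls in minutes.

alibaba/qwen3.5-omni-plus

```javascript
// Requires the `openai` package and an ES module context (for top-level await).
import OpenAI from 'openai';

// DashScope exposes an OpenAI-compatible endpoint; the key is read from the environment.
const client = new OpenAI({
  apiKey: process.env.DASHSCOPE_API_KEY,
  baseURL: 'https://dashscope-intl.aliyuncs.com/compatible-mode/v1',
});

// Request a streamed, text-only response.
// Note: this message carries only text; no video is attached to the request.
const completion = await client.chat.completions.create({
  model: 'qwen3.5-omni-plus',
  messages: [{ role: 'user', content: 'Analyze this video content.' }],
  modalities: ['text'],
  stream: true,
});

// Print each streamed text delta as it arrives.
for await (const chunk of completion) {
  process.stdout.write(chunk.choices[0]?.delta?.content || '');
}
```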
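The quick-start request sends only a text prompt, so the model has no video to analyze. The helper below sketches one way to attach a video by URL. It assumes that `qwen3.5-omni-plus` accepts the `video_url` content part that DashScope's compatible mode documents for earlier Qwen-Omni models; that shape, the helper name `buildVideoRequest`, and the example URL are assumptions to check against the current API reference.

```javascript
// Build a chat.completions request body that pairs a video URL with a text prompt.
// Assumption: the endpoint accepts { type: 'video_url', video_url: { url } } parts.
function buildVideoRequest(videoUrl, prompt) {
  const url = new URL(videoUrl); // throws TypeError on a malformed URL
  if (url.protocol !== 'https:' && url.protocol !== 'http:') {
    throw new RangeError('videoUrl must use http or https');
  }
  return {
    model: 'qwen3.5-omni-plus',
    messages: [
      {
        role: 'user',
        content: [
          { type: 'video_url', video_url: { url: url.href } },
          { type: 'text', text: prompt },
        ],
      },
    ],
    modalities: ['text'],
    stream: true,
  };
}

// Hypothetical usage with the client from the quick start:
// const completion = await client.chat.completions.create(
//   buildVideoRequest('https://example.com/demo.mp4', 'Analyze this video content.')
// );
```

Keeping the request construction in a pure function makes it easy to inspect or log the payload before any network call is made.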

Community Feedback

“The Audio-Visual Vibe Coding is a game changer; it finally understands what I am showing on screen while I explain the bug.”

“Qwen3.5-Omni's ability to handle 10 hours of audio in one context is insane for researchers and podcasters.”

“The voice cloning sounds surprisingly natural compared to the previous generation, almost indistinguishable in English.”

“Finally, a model that doesn't just cut me off mid-sentence; the semantic interruption works as advertised.”

“Impressive numbers on the new Qwen3.6 27B, but the Omni version is the one everyone will use for real products.”

“I tried interrupting it five times, and it caught my intent every single time.”

Related Videos

“The Thinker-Talker architecture is a massive leap forward for real-time latency [04:15].”

“It handles 400 seconds of video which is double what we usually see [07:22].”

“This model is natively end-to-end multilingual and multimodal [10:05].”

“The ARIA system prevents the pronunciation errors found in standard TTS [15:30].”

“You can literally show your screen and have a fluid conversation about the code [22:10].”

“The way it writes code based on what it sees in the video is spooky [10:45].”

“This is the first real competitor to GPT-4o's voice mode we have seen [14:20].”

“It supports 113 languages for speech recognition, which is a huge advantage [18:55].”

“The vision extraction is far more robust for complex PDFs and video [25:15].”

“The 10-hour audio context is the real star here for enterprise use [12:10].”

“Performance in non-English languages is where Qwen really pulls ahead [15:40].”

“It can distinguish between background noise and actual user interruption [19:22].”

“Pricing is very competitive, especially for the scale of parameters active [24:10].”

“This is currently the most capable model for Python automation involving visual UI [28:45].”

Pro Tips

Optimize Audio Ingestion

Segment audio longer than 10 hours to maintain factual retrieval accuracy within the 256k context window.
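One way to plan that segmentation is sketched below. It splits a recording into the smallest number of equal-length segments that each stay within the 10-hour limit. The function name and the equal-length strategy are illustrative choices rather than anything prescribed by the model's documentation; splitting at natural pauses would likely be better in practice.

```javascript
// Per-request audio limit stated above: 10 hours, expressed in seconds.
const MAX_SEGMENT_SECONDS = 10 * 60 * 60;

// Split a recording into the fewest equal-length segments that each fit the limit.
// Returns [{ start, end }] in seconds, covering [0, totalSeconds] without gaps.
function planAudioSegments(totalSeconds, maxSeconds = MAX_SEGMENT_SECONDS) {
  if (!(totalSeconds > 0) || !(maxSeconds > 0)) {
    throw new RangeError('durations must be positive');
  }
  const count = Math.ceil(totalSeconds / maxSeconds);
  const length = totalSeconds / count;
  return Array.from({ length: count }, (_, i) => ({
    start: i * length,
    // Pin the final boundary to the exact total to avoid rounding drift.
    end: i === count - 1 ? totalSeconds : (i + 1) * length,
  }));
}

// A 25-hour archive becomes three segments of 8 h 20 min each.
console.log(planAudioSegments(25 * 3600).length); // 3
```

Equal-length segments avoid leaving a very short final chunk, which would give the model little context for that part of the recording.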

Leverage Semantic Interruption

Enable native turn-taking features in voice apps to distinguish user intent from background noise.

Use ARIA for Technical Terms

Utilize streaming speech mode to benefit from ARIA alignment, which ensures technical numbers are pronounced accurately.

Video Frame Rate Control

Upload standard video at 1 FPS, but increase the rate for high-action scenes to ensure visual precision.
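The sampling policy in this tip can be sketched as a function that emits frame timestamps at a base rate and switches to a denser rate inside marked high-action ranges. The function name, the range format, and the default of 4 FPS for action scenes are hypothetical; only the 1 FPS baseline comes from the tip itself.

```javascript
// Produce frame timestamps (seconds) for a clip: baseFps by default,
// actionFps inside any [start, end) range flagged as high-action.
function frameTimestamps(durationSeconds, highActionRanges = [], baseFps = 1, actionFps = 4) {
  if (!(baseFps > 0) || !(actionFps > 0)) {
    throw new RangeError('frame rates must be positive');
  }
  const stamps = [];
  let t = 0;
  while (t < durationSeconds) {
    stamps.push(Number(t.toFixed(3)));
    const inAction = highActionRanges.some(([start, end]) => t >= start && t < end);
    // Step by the frame interval of whichever rate applies at time t.
    t += 1 / (inAction ? actionFps : baseFps);
  }
  return stamps;
}

// A 4-second clip with action between 1 s and 2 s.
console.log(frameTimestamps(4, [[1, 2]]));
// [ 0, 1, 1.25, 1.5, 1.75, 2, 3 ]
```

The resulting timestamps can be passed to whatever frame extractor is in use; denser sampling increases token usage, so the action ranges are best kept short.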

Related AI Models

GPT-5.4

OpenAI

GPT-5.4 is OpenAI's frontier model featuring a 1.05M context window and Extreme Reasoning. It excels at autonomous UI interaction and long-form data analysis.

Kimi K2 Thinking

Moonshot

Kimi K2 Thinking is Moonshot AI's trillion-parameter reasoning model. It outperforms GPT-5 on HLE and supports 300 sequential tool calls autonomously for...

GPT-5.2

OpenAI

GPT-5.2 is OpenAI's flagship model for professional tasks, featuring a 400K context window, elite coding, and deep multi-step reasoning capabilities.

Qwen3.6-Max-Preview

Alibaba

Qwen3.6-Max-Preview is Alibaba's flagship MoE model featuring 1M context, a native thinking mode, and SOTA scores in agentic coding and reasoning.

GLM-5

Zhipu (GLM)

GLM-5 is Zhipu AI's 744B parameter open-weight powerhouse, excelling in long-horizon agentic tasks, coding, and factual accuracy with a 200k context window.

GLM-5.1

Zhipu (GLM)

GLM-5.1 is Zhipu AI's flagship reasoning model, featuring a 202K context window and an autonomous 8-hour execution loop for complex agentic engineering.

GPT-5.3 Codex

OpenAI

GPT-5.3 Codex is OpenAI's 2026 frontier coding agent, featuring a 400K context window, 77.3% Terminal-Bench score, and superior logic for complex software...

Gemini 3.1 Flash-Lite

Google

Gemini 3.1 Flash-Lite is Google's fastest, most cost-efficient model. Features 1M context, native multimodality, and 363 tokens/sec speed for scale.

Frequently Asked Questions

Find answers to common questions about Qwen3.5-Omni