GPT-5.4

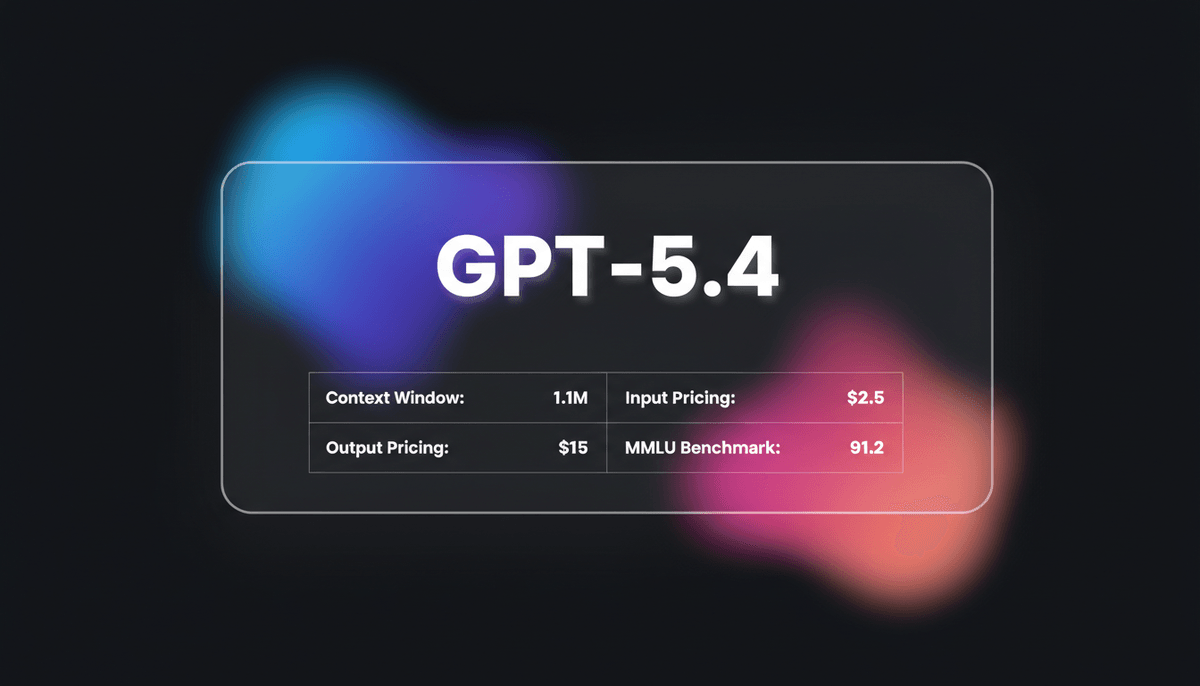

GPT-5.4 is OpenAI's frontier model featuring a 1.05M context window and Extreme Reasoning. It excels at autonomous UI interaction and long-form data analysis.

About GPT-5.4

Learn about GPT-5.4's capabilities, features, and how it can help you achieve better results.

The Frontier of Long-Context Reasoning

GPT-5.4 represents the high-performance evolution of the GPT-5 series. It features an industry-leading 1.05-million-token context window. This model handles expansive datasets, such as massive code repositories or multi-year historical logs, without losing reasoning fidelity. The interactive Mid-Response Steering allows users to monitor and adjust the model thinking plan in real-time. This ensures the output aligns with complex, multi-step intents.

Unified Intelligence and Autonomous Action

Technically, GPT-5.4 unifies the world-class coding strengths of previous Codex branches with the creative nuances of the standard GPT-5 series. It features a specialized Thinking mode with adjustable effort levels. These include Standard, Extended, and Heavy modes. It utilizes reinforced chain-of-thought processing to solve PhD-level science and logic problems. Beyond text, GPT-5.4 introduces native computer use capabilities. It achieves a 75% score on OSWorld-Verified tasks by interpreting visual screenshots and executing coordinate-based clicks.

Efficiency and Reliability

OpenAI reports a 33% decrease in claim-level errors compared to predecessors. This makes GPT-5.4 a primary choice for autonomous agents and high-stakes decision support. It is engineered for token and energy efficiency. This allows for cheaper long-context processing than previous iterations. Whether managing an entire enterprise codebase or acting as an autonomous scheduling agent, GPT-5.4 sets a new standard for reliability and agentic performance.

Use Cases

Discover the different ways you can use GPT-5.4 to achieve great results.

Large-Scale Code Refactoring

Systematically rewriting legacy codebases exceeding 300,000 lines with strict adherence to architectural standards.

Autonomous Financial Modeling

Building complex three-statement models where the AI reconciles income statements, balance sheets, and cash flows.

Interactive System Design

Developing 3D simulations or physics-based games by steering the model logic path during the generation process.

Agentic Computer Use

Executing multi-step desktop tasks such as bulk data entry, email management, and software testing via native UI interaction.

Long-Context Legal Analysis

Cross-referencing hundreds of legal documents to identify inconsistencies or extract specific clauses with high recall accuracy.

PhD-Level Research Support

Solving complex mathematical proofs and scientific problems using Heavy Reasoning mode for verified logical chains.

Strengths

Limitations

API Quick Start

openai/gpt-5.4

import OpenAI from "openai";

const openai = new OpenAI();

async function main() {

const completion = await openai.chat.completions.create({

model: "gpt-5.4",

messages: [

{ role: "user", content: "Refactor this controller for better error handling." }

],

reasoning_effort: "heavy"

});

console.log(completion.choices[0].message.content);

}

main();Install the SDK and start making API calls in minutes.

Community Feedback

See what the community thinks about GPT-5.4

“GPT 5.4 in Codex is a very huge improvement... I've actually seen it work for 150 minutes at once without losing context.”

“GPT 5.4's 3D design chops are unmatched. The way it handled transparency and physics in my ship simulator was spookily accurate.”

“The mid-response course correction is incredible. I can actually see where the model is going and fix it before it wastes tokens.”

“It beat humans 83% of the time across 44 different jobs. Lawyer. Accountant. Financial analyst. Administrator.”

“OpenAI finally fixed the output bottleneck. 128k output tokens is a dream for developers building full-stack applications.”

“The computer use latency is still there, but the precision is high enough to handle complex SAP workflows which is wild.”

Related Videos

Watch tutorials, reviews, and discussions about GPT-5.4

“GPT 5.4 is here and we may actually have a new best model on the planet.”

“GPT 5.4 Thinking can now provide an upfront plan of its thinking... allows you to guide the model.”

“This interactive element solves the black box problem of reasoning models.”

“The speed compared to o1-preview is night and day for standard tasks.”

“You are seeing reasoning that actually feels consistent over long conversations.”

“GPT 5.4... wasn't built to chat. It was built to work.”

“Deferred loading... reduced total token usage by 47% with no loss in accuracy.”

“The computer use functionality tracks UI elements with a coordinate-based system.”

“I tested it with a legacy Java codebase and it actually understood the cross-file dependencies.”

“We are moving into a world where the AI is the operating system controller.”

“1 million 50,000 token context window. This is a very long context window.”

“Navigate it while it is thinking, which is definitely more efficient to use.”

“The pricing is steep but for large document sets, it's the only model that works.”

“Thinking mode can be adjusted based on the complexity of your prompt.”

“It feels more reliable on factual recall than any previous GPT version.”

Supercharge your workflow with AI Automation

Automatio combines the power of AI agents, web automation, and smart integrations to help you accomplish more in less time.

Pro Tips

Expert tips to help you get the most out of GPT-5.4 and achieve better results.

Toggle Thinking Effort

Use the Standard, Extended, or Heavy parameters to balance the need for accuracy against generation speed and cost.

Review the Thinking Plan

Monitor the upfront plan provided by the model and use Mid-Response Steering to correct it if the logic deviates.

Leverage Deferred Tool Loading

For agentic workflows, use the deferred loading registry to reduce upfront token costs by up to 47%.

Use Completeness Contracts

Explicitly define what finished means in your prompt to make the model more persistent during long-running tasks.

Max Resolution Vision

Upload high-fidelity images up to 10.24M pixels for precise visual inspections of UI elements or technical diagrams.

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Related AI Models

Qwen3.5-Omni

alibaba

Qwen3.5-Omni is a natively omnimodal AI by Alibaba Cloud, offering seamless audio-visual reasoning, real-time voice chat, and 256k context for low-latency apps.

Kimi K2 Thinking

Moonshot

Kimi K2 Thinking is Moonshot AI's trillion-parameter reasoning model. It outperforms GPT-5 on HLE and supports 300 sequential tool calls autonomously for...

GPT-5.2

OpenAI

GPT-5.2 is OpenAI's flagship model for professional tasks, featuring a 400K context window, elite coding, and deep multi-step reasoning capabilities.

Qwen3.6-Max-Preview

alibaba

Qwen3.6-Max-Preview is Alibaba's flagship MoE model featuring 1M context, a native thinking mode, and SOTA scores in agentic coding and reasoning.

GLM-5

Zhipu (GLM)

GLM-5 is Zhipu AI's 744B parameter open-weight powerhouse, excelling in long-horizon agentic tasks, coding, and factual accuracy with a 200k context window.

GLM-5.1

Zhipu (GLM)

GLM-5.1 is Zhipu AI's flagship reasoning model, featuring a 202K context window and an autonomous 8-hour execution loop for complex agentic engineering.

Gemini 3.1 Flash-Lite

Gemini 3.1 Flash-Lite is Google's fastest, most cost-efficient model. Features 1M context, native multimodality, and 363 tokens/sec speed for scale.

GPT-5.3 Codex

OpenAI

GPT-5.3 Codex is OpenAI's 2026 frontier coding agent, featuring a 400K context window, 77.3% Terminal-Bench score, and superior logic for complex software...

Frequently Asked Questions

Find answers to common questions about GPT-5.4