Kimi K2 Thinking

Kimi K2 Thinking is Moonshot AI's trillion-parameter reasoning model. It outperforms GPT-5 on HLE and supports 300 sequential tool calls autonomously for...

About Kimi K2 Thinking

Learn about Kimi K2 Thinking's capabilities, features, and how it can help you achieve better results.

Trillion-Parameter Mixture of Experts

Kimi K2 Thinking is a trillion-parameter reasoning model built on a Mixture-of-Experts (MoE) architecture. Developed by Moonshot AI and released in late 2025, it activates only about 32B parameters per token at inference, roughly 3% of the total, which balances large knowledge capacity with computational efficiency. It is designed as a thinking agent that scales its computation at inference time to solve complex logical problems, which lets the model reflect on its own reasoning and correct mistakes before giving a final answer.
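To make the sparse-activation idea concrete, the following TypeScript sketch shows top-k expert routing in general terms. It is not Moonshot AI's implementation: the expert count (8), the value of k (2), the router scores, and the helper names `softmax`, `routeTopK`, and `activeFraction` are all assumptions chosen for illustration. Only the 32B-of-1T ratio comes from the description above.

```typescript
// Illustrative top-k Mixture-of-Experts routing. The expert count, k, and
// scores below are made-up values, not Kimi K2 Thinking's real configuration.

/** Numerically stable softmax over a list of router scores. */
function softmax(scores: number[]): number[] {
  const max = Math.max(...scores);
  const exps = scores.map((s) => Math.exp(s - max));
  const sum = exps.reduce((a, b) => a + b, 0);
  return exps.map((e) => e / sum);
}

/** Pick the k most probable experts and renormalize their gate weights. */
function routeTopK(routerScores: number[], k: number): { expert: number; weight: number }[] {
  const probs = softmax(routerScores);
  const best = probs
    .map((p, expert) => ({ expert, p }))
    .sort((a, b) => b.p - a.p)
    .slice(0, k);
  const total = best.reduce((acc, b) => acc + b.p, 0);
  return best.map((b) => ({ expert: b.expert, weight: b.p / total }));
}

/** Fraction of the total parameters that are active for one token. */
function activeFraction(activeParams: number, totalParams: number): number {
  return activeParams / totalParams;
}

const routed = routeTopK([0.1, 2.0, -1.0, 1.5, 0.3, 0.0, -0.5, 0.9], 2);
console.log(routed.map((r) => r.expert)); // experts 1 and 3 have the highest scores
console.log(activeFraction(32e9, 1e12)); // 0.032
```

Only the selected experts run for a given token, which is why the compute per token tracks the active parameter count rather than the full trillion.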

Agentic Tool Use and Planning

The model stands out for its ability to chain up to 300 sequential tool calls autonomously. While most standard language models struggle with long-horizon planning, K2 Thinking is engineered for agentic workflows such as autonomous web browsing and multi-step software engineering. It natively supports INT4 precision via Quantization-Aware Training (QAT), which reduces the memory needed for the weights and lets the model maintain frontier-level performance on standard enterprise hardware clusters.
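A sequential tool-calling workflow can be pictured as a loop that alternates between asking the model for its next step and executing the requested tool, with a hard cap on the number of calls. The sketch below is a simplified, synchronous illustration under stated assumptions: `ModelStep`, `Model`, `Tools`, `runAgent`, and the toy `increment` tool are hypothetical names, and the model is replaced by a stub function rather than a real API call. The default cap of 300 mirrors the figure quoted above.

```typescript
// Sketch of a bounded agent loop. `ModelStep`, `Model`, `runAgent`, and the toy
// tool below are hypothetical names for illustration, not a Moonshot SDK API.

type ModelStep =
  | { kind: 'tool_call'; tool: string; input: string }
  | { kind: 'final'; answer: string };

// A "model" here is any function that reads the transcript and picks the next step.
type Model = (transcript: string[]) => ModelStep;
type Tools = Map<string, (input: string) => string>;

interface AgentOutcome {
  answer: string | null; // null means the tool-call budget ran out first
  toolCalls: number;
}

function runAgent(model: Model, tools: Tools, task: string, maxToolCalls = 300): AgentOutcome {
  const transcript: string[] = [`task: ${task}`];
  let toolCalls = 0;
  while (true) {
    const step = model(transcript);
    if (step.kind === 'final') {
      return { answer: step.answer, toolCalls };
    }
    if (toolCalls >= maxToolCalls) {
      return { answer: null, toolCalls };
    }
    // Run the requested tool and feed its result back into the transcript.
    const tool = tools.get(step.tool);
    const result = tool ? tool(step.input) : `error: unknown tool ${step.tool}`;
    transcript.push(`${step.tool}(${step.input}) -> ${result}`);
    toolCalls += 1;
  }
}

// Toy stand-in for the model: request three tool calls, then answer.
const toyModel: Model = (transcript) => {
  const callsSoFar = transcript.length - 1;
  return callsSoFar < 3
    ? { kind: 'tool_call', tool: 'increment', input: String(callsSoFar) }
    : { kind: 'final', answer: `done after ${callsSoFar} calls` };
};
const toyTools: Tools = new Map([['increment', (input: string) => String(Number(input) + 1)]]);

const outcome = runAgent(toyModel, toyTools, 'count to three');
console.log(outcome); // { answer: 'done after 3 calls', toolCalls: 3 }
```

The explicit budget is a design choice for the sketch: it guarantees termination even if the stub (or a real model) never produces a final answer.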

Developer and Research Focus

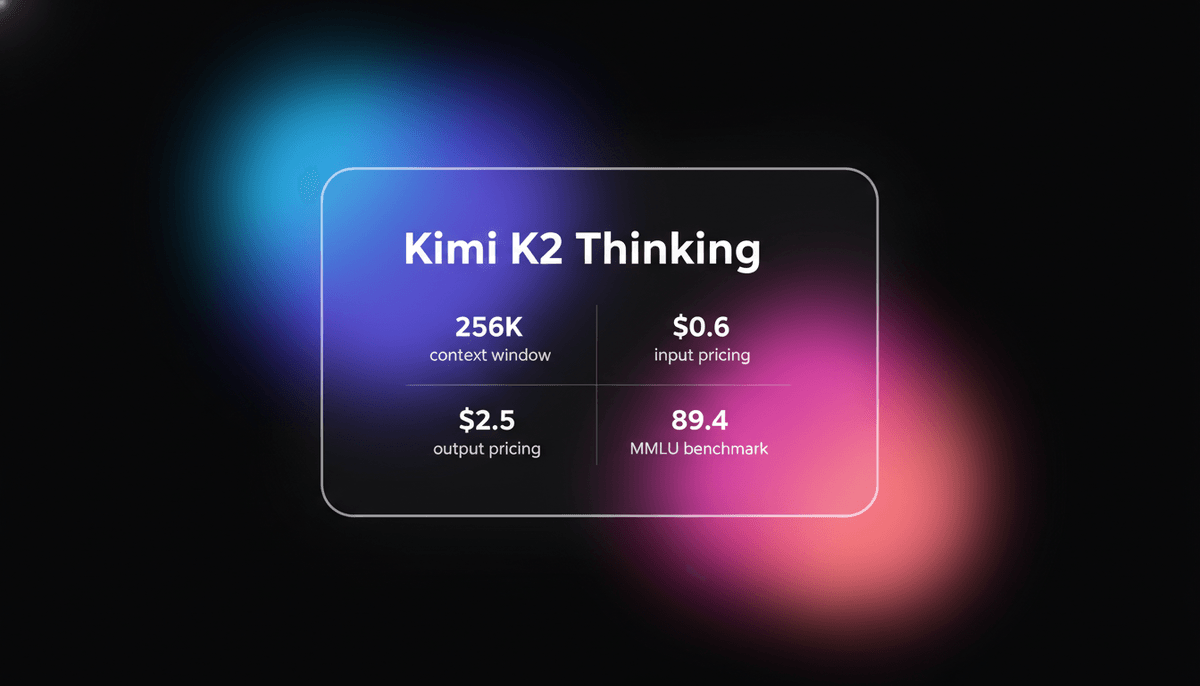

With a 256K token context window, the model is built for deep research and complex technical tasks. It bridges the performance gap between closed-source systems and open-weights models. Its ability to solve PhD-level science questions and competitive math problems makes it a suitable choice for academic research, automated coding assistants, and high-fidelity reasoning applications where logical consistency is the primary requirement.

Use Cases

Discover the different ways you can use Kimi K2 Thinking to achieve great results.

Complex Software Engineering

Resolving real GitHub issues and architecting multi-file codebases using iterative self-correction.

Autonomous Research Agents

Executing hundreds of sequential tool calls to gather and synthesize obscure technical data.

Olympiad-Level Mathematics

Solving advanced geometry and algebra problems with deep chain-of-thought verification.

PhD-Level Science Inquiry

Answering expert questions in physics and biology that require multi-step logical deduction.

Interactive Computer Control

Navigating terminal environments and cloud infrastructure to automate devops workflows.

Logic-Heavy Creative Writing

Generating long-form content that requires strict adherence to intricate world-building rules.

API Quick Start

Install the SDK and start making API calls in minutes.

moonshot/kimi-k2-thinking

    import OpenAI from 'openai';

    // The OpenAI SDK is pointed at Moonshot's endpoint via baseURL.
    const client = new OpenAI({
      apiKey: process.env.MOONSHOT_API_KEY,
      baseURL: 'https://api.moonshot.cn/v1',
    });

    async function main() {
      const response = await client.chat.completions.create({
        model: 'kimi-k2-thinking',
        messages: [{ role: 'user', content: 'Design a system for autonomous code review using 300 tool calls.' }],
      });

      // Print the model's final answer.
      console.log(response.choices[0].message.content);
    }

    // Surface request or network errors instead of failing silently.
    main().catch(console.error);
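Since the model is positioned for tool use, a request will usually carry tool definitions alongside the messages. The sketch below builds such a request body in the OpenAI-compatible `tools` format. It is an assumption that Moonshot's endpoint accepts this shape unchanged; `buildToolRequest`, `ToolDefinition`, `ChatRequest`, and the `search_docs` tool are hypothetical names introduced only for this example, and no network call is made.

```typescript
// Hypothetical helper that builds a chat-completions request body with one
// tool definition in the OpenAI-compatible format. `buildToolRequest` and the
// `search_docs` tool are illustrative names, not part of the Moonshot API.

interface ToolDefinition {
  type: 'function';
  function: {
    name: string;
    description: string;
    parameters: Record<string, unknown>; // JSON Schema describing the arguments
  };
}

interface ChatRequest {
  model: string;
  messages: { role: 'system' | 'user' | 'assistant'; content: string }[];
  tools: ToolDefinition[];
}

function buildToolRequest(userPrompt: string): ChatRequest {
  const searchDocs: ToolDefinition = {
    type: 'function',
    function: {
      name: 'search_docs',
      description: 'Search internal documentation for a query string.',
      parameters: {
        type: 'object',
        properties: { query: { type: 'string' } },
        required: ['query'],
      },
    },
  };
  return {
    model: 'kimi-k2-thinking',
    messages: [{ role: 'user', content: userPrompt }],
    tools: [searchDocs],
  };
}

const request = buildToolRequest('Find the latest release notes.');
console.log(JSON.stringify(request, null, 2));
```

Under these assumptions, the resulting object could be passed to `client.chat.completions.create(...)`; which fields the endpoint actually accepts should be checked against Moonshot's own API documentation.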

Community Feedback

See what the community thinks about Kimi K2 Thinking

“Kimi K2.5 is the best open model for coding, they really cooked.”

“Moonshot AI just dropped Kimi K2 Thinking. 300 sequential tool calls? That's the future of agentic AI.”

“Kimi released Kimi K2 Thinking, an open-source trillion-parameter reasoning model. This is the real deal.”

“The fact that it can handle 300 tool calls sequentially opens up entirely new agent workflows.”

“Impressive to see an open-source model hitting these numbers. The test-time scaling approach is clearly paying off.”

“Running this model locally is a challenge, but the reasoning depth is unlike anything else in the open weights space.”

Related Videos

Watch tutorials, reviews, and discussions about Kimi K2 Thinking

“Kimi K2 Thinking is the best AI model I've ever used.”

“It is the most agentic independent model ever made. Meaning, it can run for hours by itself.”

“It is able to think and reflect every single step of the way. So it never gets lost.”

“The reasoning speed is surprisingly fast despite the trillion parameters.”

“If you are building agents, this is the architecture you want to look at.”

“Kimi K2 Thinking... is a thinking upgrade to the Kimi K2 model, which truthfully seems to be very widely regarded.”

“This is of course an open-source model... coming in at a total size of around 1 trillion parameters.”

“All benchmark results are reported under int4 precision.”

“It handles complex math problems with a level of logic that rivals the top proprietary labs.”

“The installation process for the local weights is fairly straightforward if you have the VRAM.”

“Kimi K2.5 is the latest open-source model developed by a Chinese company called Moonshot AI.”

“It is capable of spinning up up to 100 sub-agents and 1,500 tool calls and run them concurrently.”

“I would certainly recommend it if you want to make a truly beautiful website.”

“The internal chain of thought allows it to self-correct code errors before providing the final answer.”

“Moonshot has really focused on long-horizon planning for this specific release.”

Pro Tips

Expert tips to help you get the most out of Kimi K2 Thinking and achieve better results.

Enable Thinking Output

Use the special tokens flag in your inference engine to see the model's internal reasoning steps.

Optimize Temperature

Set the sampling temperature to 1.0 and min_p to 0.01 for the most consistent reasoning flow.

Utilize System Prompts

Start conversations with the official Moonshot AI identity prompt to stabilize the model's behavior.

Scale Test-Time Compute

Allow the model to generate more internal tokens for harder problems to increase accuracy.
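As a rough illustration of the sampling settings in the Optimize Temperature tip, the sketch below applies temperature scaling and then min-p filtering to a small set of logits. Min-p keeps only tokens whose probability is at least `minP` times that of the most likely token. The function name `minPFilter` and the example logits are assumptions for illustration, and real inference engines may apply temperature and min-p in a different order.

```typescript
// Minimal min-p filtering sketch. `minPFilter` and the sample logits are
// illustrative; this is not taken from any specific inference engine.

function minPFilter(logits: number[], temperature: number, minP: number): number[] {
  // Temperature-scaled softmax, shifted by the max for numerical stability.
  const scaled = logits.map((l) => l / temperature);
  const max = Math.max(...scaled);
  const exps = scaled.map((s) => Math.exp(s - max));
  const sum = exps.reduce((a, b) => a + b, 0);
  const probs = exps.map((e) => e / sum);

  // Drop tokens whose probability falls below minP times the top probability.
  const threshold = minP * Math.max(...probs);
  const kept = probs.map((p) => (p >= threshold ? p : 0));

  // Renormalize the surviving tokens so they form a distribution again.
  const keptSum = kept.reduce((a, b) => a + b, 0);
  return kept.map((p) => p / keptSum);
}

// With temperature 1.0 and min_p 0.01, the two unlikely tokens are removed.
const filtered = minPFilter([5, 4, 0, -3], 1.0, 0.01);
console.log(filtered);
```

Because the threshold scales with the top token's probability, the filter is strict when the model is confident and permissive when the distribution is flat.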

Related AI Models

GPT-5.4

OpenAI

GPT-5.4 is OpenAI's frontier model featuring a 1.05M context window and Extreme Reasoning. It excels at autonomous UI interaction and long-form data analysis.

Qwen3.5-Omni

Alibaba

Qwen3.5-Omni is a natively omnimodal AI by Alibaba Cloud, offering seamless audio-visual reasoning, real-time voice chat, and 256k context for low-latency apps.

GPT-5.2

OpenAI

GPT-5.2 is OpenAI's flagship model for professional tasks, featuring a 400K context window, elite coding, and deep multi-step reasoning capabilities.

Qwen3.6-Max-Preview

Alibaba

Qwen3.6-Max-Preview is Alibaba's flagship MoE model featuring 1M context, a native thinking mode, and SOTA scores in agentic coding and reasoning.

GLM-5

Zhipu (GLM)

GLM-5 is Zhipu AI's 744B parameter open-weight powerhouse, excelling in long-horizon agentic tasks, coding, and factual accuracy with a 200k context window.

GLM-5.1

Zhipu (GLM)

GLM-5.1 is Zhipu AI's flagship reasoning model, featuring a 202K context window and an autonomous 8-hour execution loop for complex agentic engineering.

Gemini 3.1 Flash-Lite

Google

Gemini 3.1 Flash-Lite is Google's fastest, most cost-efficient model. Features 1M context, native multimodality, and 363 tokens/sec speed for scale.

Claude Opus 4.5

Anthropic

Claude Opus 4.5 is Anthropic's most powerful frontier model, delivering record-breaking 80.9% SWE-bench performance and advanced autonomous agency for coding.

Frequently Asked Questions

Find answers to common questions about Kimi K2 Thinking