GPT-5.5

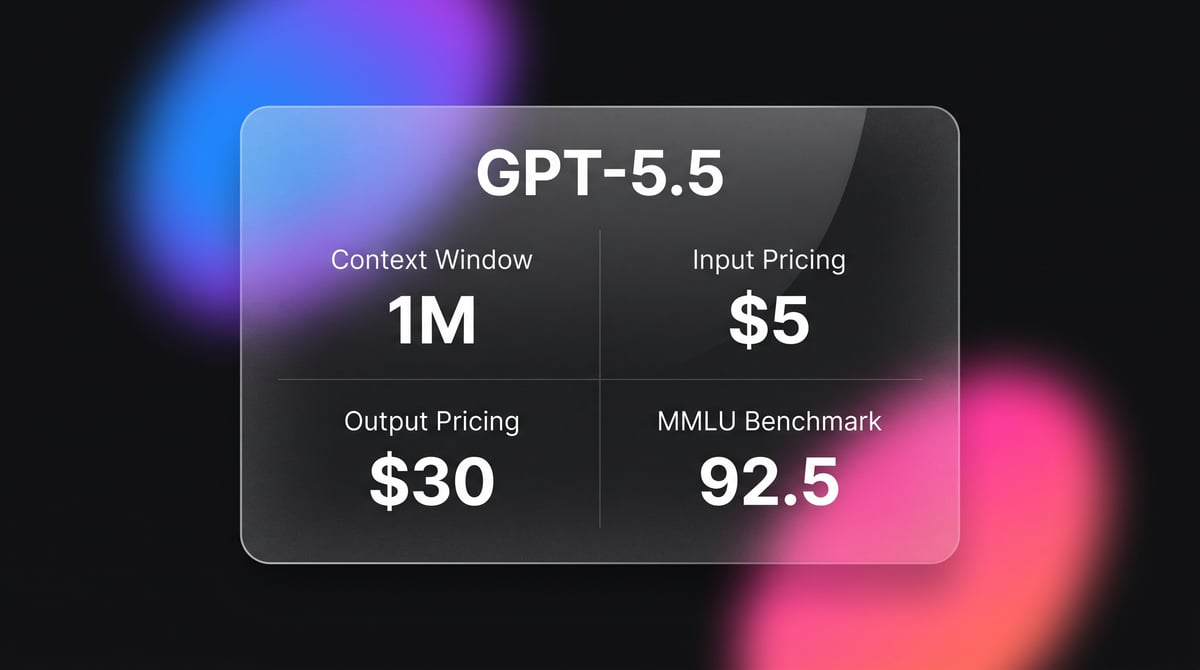

GPT-5.5 is OpenAI's flagship frontier model with a 1M context window and five reasoning effort levels, optimized for autonomous agentic workflows and coding.

About GPT-5.5

Learn about GPT-5.5's capabilities, features, and how it can help you achieve better results.

Transition to Agentic Intelligence

GPT-5.5 represents the transition from large language models to large agentic models. It is designed to function as an autonomous teammate rather than a simple chatbot: it can plan, execute, and self-verify complex workflows across digital environments. Its primary innovation is variable reasoning effort levels, which give developers granular control over the model's thinking time and associated compute costs.

Technical Efficiency and Vision

Technically, GPT-5.5 maintains the 1-million-token context window of the GPT-5 family but introduces a 40% gain in token efficiency. As a result, although per-token pricing has doubled relative to the 5.4 series, the effective cost for complex tasks is only about 20% higher: twice the price applied to roughly 60% of the tokens gives 2 × 0.6 = 1.2 times the original cost. The model's vision capabilities have also been significantly upgraded, now reaching near-human performance on technical diagrams and spatial reasoning tasks like ARC-AGI v2.
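The pricing claim can be checked with a few lines of arithmetic. The sketch below assumes that a "40% gain in token efficiency" means the same task consumes 40% fewer tokens; the helper name relativeCost is hypothetical and no real prices are used, only ratios.

```javascript
// Relative cost of a task after a price change and a change in token usage.
// priceMultiplier: new per-token price divided by the old per-token price.
// tokenReduction: fraction of tokens saved on the same task (assumed reading
// of "40% gain in token efficiency").
function relativeCost(priceMultiplier, tokenReduction) {
  if (priceMultiplier <= 0) {
    throw new RangeError("priceMultiplier must be > 0");
  }
  if (tokenReduction < 0 || tokenReduction >= 1) {
    throw new RangeError("tokenReduction must be in [0, 1)");
  }
  return priceMultiplier * (1 - tokenReduction);
}

// Doubled per-token price, 40% fewer tokens for the same task.
const ratio = relativeCost(2, 0.4);
const percentChange = Math.round((ratio - 1) * 100);
console.log(`Effective cost change: ${percentChange}%`);
```

Under that reading, the two figures in the text are consistent with each other. If the efficiency gain were smaller on a given workload, the effective cost would move closer to the full doubling.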

Optimization for Autonomy

It is particularly effective for autonomous coding, where it can manage entire repositories and verify its own bug fixes. With the new reasoning_effort parameter, users can choose among five distinct reasoning depths, making GPT-5.5 the first model to offer a sliding scale of intelligence for high-stakes problem solving.
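As a rough illustration of how an application might select a reasoning depth per task, the sketch below builds request bodies with different reasoning_effort values. The page only confirms that five levels exist and that "xhigh" is one of them, so the other four level names are assumptions, and buildRequest is a hypothetical helper rather than part of the OpenAI SDK.

```javascript
// Assumed level names: only "xhigh" appears in the source. The other four
// are placeholders and may not match the real API values.
const EFFORT_LEVELS = ["minimal", "low", "medium", "high", "xhigh"];

// Build a Chat Completions style request body for a given effort level.
// Rejects unknown levels early instead of sending them to the API.
function buildRequest(prompt, effort) {
  if (!EFFORT_LEVELS.includes(effort)) {
    throw new RangeError(`Unknown reasoning effort: ${effort}`);
  }
  return {
    model: "gpt-5.5",
    messages: [{ role: "user", content: prompt }],
    reasoning_effort: effort,
  };
}

// Cheap setting for a mechanical edit, deepest setting for a hard bug.
const quick = buildRequest("Rename this variable consistently.", "low");
const deep = buildRequest("Find the race condition in this scheduler.", "xhigh");
console.log(quick.reasoning_effort, deep.reasoning_effort);
```

Keeping the level list in one place makes it easy to correct once the actual accepted values are confirmed against the API reference.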

Use Cases

Discover the different ways you can use GPT-5.5 to achieve great results.

Autonomous Software Engineering

Managing entire code repositories, fixing bugs, and deploying updates without human oversight.

Scientific Research Analysis

Processing thousands of research papers across a 1M window to synthesize novel hypotheses.

Complex Financial Modeling

Building and auditing intricate corporate finance structures with PhD-level mathematical precision.

Multi-Step Agentic Workflows

Creating and executing recursive task lists to achieve long-term digital objectives autonomously.

Technical Visual Analysis

Interpreting complex engineering blueprints and circuit diagrams for automated quality assurance.

High-Fidelity Data Compression

Converting massive datasets into token-dense summaries that preserve deep semantic nuances.

API Quick Start

openai/gpt-5.5

```javascript
import OpenAI from "openai";

const openai = new OpenAI();

async function main() {
  const response = await openai.chat.completions.create({
    model: "gpt-5.5",
    messages: [
      { role: "system", content: "You are an autonomous coding agent." },
      { role: "user", content: "Debug this Python repository and verify the fixes." }
    ],
    reasoning_effort: "xhigh"
  });

  console.log(response.choices[0].message.content);
}

main();
```

Install the SDK and start making API calls in minutes.

Community Feedback

See what the community thinks about GPT-5.5

“The hallucination rate is wild though, 86% on facts? It's like a genius who refuses to say 'I don't know'.”

“GPT-5.5 Pro is $180/mil output. We've officially entered the luxury era of AI.”

“The proto-AGI era has arrived. It's no longer a chatbot; it's a teammate.”

“The reasoning ladder with 5 effort levels is the most useful feature release since function calling.”

“OpenAI cooked with this one. It's expensive, but it actually works for high-end agentic work.”

“Across 20 benchmarks GPT-5.5 scores slightly higher than Opus 4.7 but it is also now $5/million tokens.”

Related Videos

Watch tutorials, reviews, and discussions about GPT-5.5

“The reasoning ability on this model is just night and day compared to anything we've seen before.”

“It literally built a whole SaaS application in one go without me having to fix a single bug.”

“At $5 per million tokens, you really have to be sure you need this level of intelligence.”

“Comparing this to open models, there is still a significant gap in agentic autonomy.”

“The reasoning effort parameters are the real story here for developers.”

“The visual understanding of UI layouts is perfectly accurate now.”

“It manages its own state across multiple steps much better than GPT-5.4.”

“You can basically hand it a terminal and let it work for twenty minutes.”

“The pricing is steep, but the time saved on debugging is worth it.”

“The context window being a full million tokens is a game changer for long document analysis.”

“If you're building autonomous agents, this is currently the only model that feels truly autonomous.”

“I noticed a high hallucination rate on very specific historical facts.”

“The efficiency gains mean you use fewer tokens for the same complex task.”

“It is a specialized tool for developers more than a casual chatbot.”

Pro Tips

Expert tips to help you get the most out of GPT-5.5 and achieve better results.

Use Reasoning Effort xhigh

Set the reasoning_effort parameter to 'xhigh' for logic-heavy tasks like math and architectural design.

Leverage Large Context Window

Provide complete documentation and codebase context in the initial system prompt to take full advantage of the 1M window.
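One way to follow this tip is to concatenate project files into a single system prompt while tracking an approximate token budget. The sketch below is illustrative only: packContext and estimateTokens are hypothetical helpers, and the figure of about 4 characters per token is a rough assumption, not an exact tokenizer count.

```javascript
// Rough heuristic (assumption): about 4 characters per token for English
// text and code. A real tokenizer would give exact counts.
const CHARS_PER_TOKEN = 4;
const estimateTokens = (text) => Math.ceil(text.length / CHARS_PER_TOKEN);

// Concatenate files into one system prompt, skipping any file that would
// push the estimated total past the budget. Returns the prompt, the
// estimated token count, and the paths that did not fit.
function packContext(files, tokenBudget) {
  const parts = [];
  const skipped = [];
  let usedTokens = 0;
  for (const { path, content } of files) {
    const section = `### ${path}\n${content}\n`;
    const cost = estimateTokens(section);
    if (usedTokens + cost > tokenBudget) {
      skipped.push(path);
      continue;
    }
    parts.push(section);
    usedTokens += cost;
  }
  return { systemPrompt: parts.join(""), usedTokens, skipped };
}

const files = [
  { path: "README.md", content: "Project overview." },
  { path: "src/app.js", content: "console.log('hello');" },
];
const packed = packContext(files, 1000000);
console.log(packed.usedTokens, packed.skipped);
```

Reporting the skipped paths lets the caller decide whether to summarize those files or send them in a later turn, rather than silently truncating the context.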

Implement Self-Critique Loops

Request a recursive review where the model critiques its first output to mitigate the native hallucination rate.
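A self-critique loop can be sketched as three calls per round: draft, critique, revise. The helpers below are hypothetical and only build message arrays; the ask function is supplied by the caller (for example, a thin wrapper around a chat completion call that returns the reply text), and the prompt wording is an assumption rather than a recommended template.

```javascript
// Messages asking the model to review a draft for errors.
function critiqueMessages(task, draft) {
  return [
    { role: "system", content: "You are a strict reviewer. List factual errors and unsupported claims." },
    { role: "user", content: `Task:\n${task}\n\nDraft answer:\n${draft}` },
  ];
}

// Messages asking the model to revise the draft using the critique.
function revisionMessages(task, draft, critique) {
  return [
    { role: "system", content: "Revise the draft to address every point in the critique. Say \"I don't know\" instead of guessing." },
    { role: "user", content: `Task:\n${task}\n\nDraft answer:\n${draft}\n\nCritique:\n${critique}` },
  ];
}

// ask(messages) is provided by the caller and resolves to the reply text.
// Each round costs two extra calls: one critique and one revision.
async function selfCritique(ask, task, rounds = 1) {
  let draft = await ask([{ role: "user", content: task }]);
  for (let i = 0; i < rounds; i++) {
    const critique = await ask(critiqueMessages(task, draft));
    draft = await ask(revisionMessages(task, draft, critique));
  }
  return draft;
}
```

Because ask is injected, the loop can be exercised with a stub before any real API call is made, and the number of rounds can be tuned against the extra token cost.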

Agentic Verification

Use the xhigh effort level for agentic tasks so that the model self-verifies every step before moving to the next.

Related AI Models

Grok-3

xAI

Grok-3 is xAI's flagship reasoning model, featuring deep logic deduction, a 128k context window, and real-time integration with X for live research and coding.

Gemini 3.1 Flash Live Preview

Gemini 3.1 Flash Live Preview is Google's ultra-low-latency, audio-to-audio model featuring a 131K context window, high-fidelity multimodal reasoning, and...

GPT-5.2 Pro

OpenAI

GPT-5.2 Pro is OpenAI's 2025 flagship reasoning model featuring Extended Thinking for SOTA performance in mathematics, coding, and expert knowledge work.

Claude Opus 4.7

Anthropic

Claude Opus 4.7 is Anthropic's flagship model with a 1-million-token context, adaptive reasoning, and 3.3x vision resolution for enterprise-scale agents.

Gemini 3.1 Pro

Gemini 3.1 Pro is Google's elite multimodal model featuring the DeepThink reasoning engine, a 1M+ context window, and industry-leading ARC-AGI logic scores.

Qwen 3.7 Max

Alibaba

Qwen 3.7 Max is Alibaba’s flagship AI model for deep reasoning and autonomous agent tasks, featuring a 256k context window and top-tier coding performance.

Gemini 3 Pro

Google's Gemini 3 Pro is a multimodal powerhouse featuring a 1M token context window, native video processing, and industry-leading reasoning performance.

Claude Opus 4.6

Anthropic

Claude Opus 4.6 is Anthropic's flagship model featuring a 1M token context window, Adaptive Thinking, and world-class coding and reasoning performance.
