DeepSeek v4

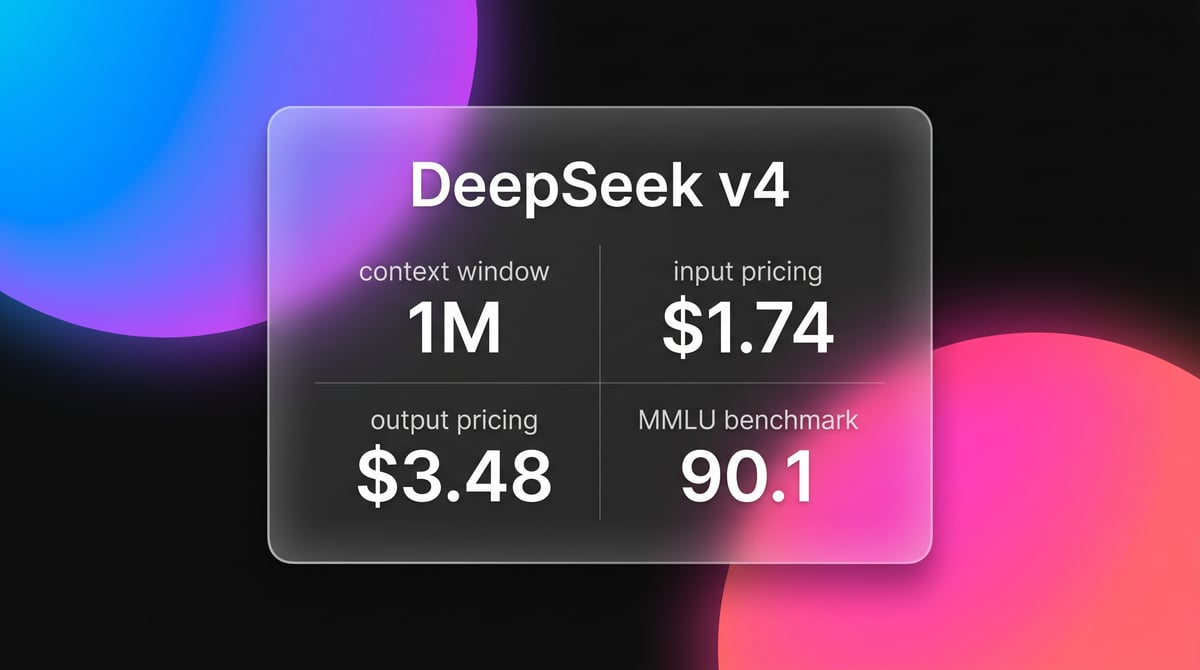

DeepSeek v4 is a 1.6T-parameter Mixture-of-Experts (MoE) model with a 1M-token context window and native multimodal support for text, vision, and video, offered at unusually low prices.

About DeepSeek v4

Learn about DeepSeek v4's capabilities, features, and how it can help you achieve better results.

High-Efficiency Trillion-Scale Architecture

DeepSeek v4 extends the Mixture-of-Experts (MoE) design to 1.6 trillion total parameters, of which 49 billion are active per token. To handle its 1-million-token context window, the model combines Compressed Sparse Attention (CSA) with Heavily Compressed Attention (HCA). These techniques reduce the KV cache memory footprint by 90% compared with standard attention architectures, which speeds up inference and lowers hardware requirements for long-context tasks.

Native Multimodal Integration

Unlike models that rely on separate vision or audio encoders, DeepSeek v4 is natively multimodal from the initial training phase: it processes text, images, audio, and video within a single unified framework. This improves cross-modal reasoning, letting the model analyze raw video files and large document archives without losing fine-grained detail.

Strategic Cost Disruption

The model is positioned as a high-performing open-source alternative to top-tier proprietary models. At $1.74 per million input tokens, it delivers frontier-level performance in coding and mathematics while significantly reducing operating costs for developers. An optional Thinking Mode enables deeper reasoning for logical proofs and competitive programming.
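The 90% KV cache figure is easier to judge with numbers attached. The sketch below estimates the memory a conventional attention KV cache would need at 1M tokens and then applies the claimed reduction. The layer count, KV head count, and head dimension are hypothetical placeholders, and CSA/HCA themselves are not modeled; only the end-to-end ratio from this page is used.

```javascript
// Back-of-envelope KV cache sizing. The dimensions passed in below are
// HYPOTHETICAL placeholders, not DeepSeek v4's published configuration;
// only the 90% reduction figure comes from this page.

// Uncompressed cache: one key vector and one value vector per KV head,
// per layer, per token.
function kvCacheBytes({ layers, kvHeads, headDim, bytesPerElement }, tokens) {
  return 2 * layers * kvHeads * headDim * bytesPerElement * tokens;
}

// Apply a fractional reduction (0.9 means "90% smaller").
function compressedBytes(bytes, reduction) {
  return Math.round(bytes * (1 - reduction));
}

const hypotheticalConfig = { layers: 60, kvHeads: 8, headDim: 128, bytesPerElement: 2 }; // bf16
const full = kvCacheBytes(hypotheticalConfig, 1_000_000);
const reduced = compressedBytes(full, 0.9);

console.log(`standard KV cache at 1M tokens: ${(full / 1e9).toFixed(1)} GB`);
console.log(`after a 90% reduction: ${(reduced / 1e9).toFixed(1)} GB`);
```

With these placeholder numbers the standard cache comes to about 246 GB and the compressed one to about 25 GB; real figures depend on the model's actual layer and head layout, which this page does not give.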

Use Cases

Discover the different ways you can use DeepSeek v4 to achieve great results.

Large-Scale Codebase Refactoring

Utilizing the 1M context window to ingest entire repositories for global bug detection and architectural improvements.
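Ingesting a whole repository mostly comes down to packing files into one prompt without overflowing the window. The following is a hypothetical helper, not part of any DeepSeek SDK; it estimates tokens with a rough 4-characters-per-token heuristic (an assumption) and greedily includes files in the order given.

```javascript
// HYPOTHETICAL helper for packing a repository into one long-context prompt.
// Token counts use a rough ~4-characters-per-token heuristic (an ASSUMPTION,
// not DeepSeek's tokenizer); use a real tokenizer for accurate budgeting.
const CHARS_PER_TOKEN = 4;

function estimateTokens(text) {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

// Greedily add files in the order given until the token budget runs out.
// Files that do not fit are reported so they can be summarized or sent separately.
function packRepository(files, maxTokens) {
  const parts = [];
  const included = [];
  const skipped = [];
  let used = 0;

  for (const { path, content } of files) {
    // Header each file with its path so the model can point to exact locations.
    const block = `=== FILE: ${path} ===\n${content}\n`;
    const cost = estimateTokens(block);
    if (used + cost > maxTokens) {
      skipped.push(path);
      continue;
    }
    parts.push(block);
    included.push(path);
    used += cost;
  }

  return { prompt: parts.join(""), included, skipped, estimatedTokens: used };
}
```

The order of `files` decides what survives when a repository exceeds the window, so putting entry points and the modules named in a bug report first is a reasonable default; leaving headroom below 1M tokens for instructions and the reply is prudent.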

Native Video Analysis

Processing raw video files directly to perform scene detection, transcript generation, and complex visual reasoning.

Autonomous Software Agents

Deploying the model in agentic workflows to resolve real-world GitHub issues with an 80.6% success rate on SWE-bench.
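Agentic workflows of this kind usually run a loop: call the model, execute any tools it requests, feed the results back, and stop when it answers without a tool call. The sketch below assumes OpenAI-compatible message shapes (`tool_calls`, `role: "tool"`); `callModel` and the `read_file` tool are hypothetical stand-ins, and this is not DeepSeek's own agent framework.

```javascript
// Minimal tool-calling agent loop using OpenAI-compatible message shapes.
// `callModel` is a HYPOTHETICAL stand-in for a real API call: it receives the
// message history and must resolve to an assistant message, optionally with
// `tool_calls`. Tool names and handlers are illustrative.
async function runAgent(callModel, tools, task, maxSteps = 10) {
  const messages = [{ role: "user", content: task }];

  for (let step = 0; step < maxSteps; step++) {
    const reply = await callModel(messages);
    messages.push(reply);

    // No tool calls: the model has produced its final answer.
    if (!reply.tool_calls || reply.tool_calls.length === 0) {
      return reply.content ?? "";
    }

    // Run each requested tool and return its output as a "tool" message
    // linked to the originating call id.
    for (const call of reply.tool_calls) {
      const handler = tools[call.function.name];
      let output;
      if (!handler) {
        output = `error: unknown tool ${call.function.name}`;
      } else {
        // Tool arguments arrive as a JSON-encoded string.
        output = await handler(JSON.parse(call.function.arguments));
      }
      messages.push({ role: "tool", tool_call_id: call.id, content: String(output) });
    }
  }
  throw new Error(`agent did not finish within ${maxSteps} steps`);
}
```

In a real deployment, `callModel` would likely wrap the `deepseek.chat.completions.create` call from the API Quick Start, passing a `tools` array and returning `choices[0].message`. The step limit keeps a confused agent from looping indefinitely.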

Multi-Modal Content Creation

Generating structured data and creative content across text, image, and audio formats using a unified model.

High-Tier Mathematical Proofs

Solving Olympiad-level math problems and formal proofs using the specialized Thinking Mode for deep reasoning.

Enterprise Knowledge Retrieval

Analyzing massive document archives in a single prompt to extract facts without the need for complex RAG pipelines.
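Skipping the RAG pipeline in practice means placing the documents directly in the prompt and asking the model to cite what it used. The sketch below is one hypothetical way to lay that out; the `<doc>` tags and the `[doc:ID]` citation format are assumptions for illustration, not a documented DeepSeek convention.

```javascript
// Sketch of the "whole archive in one prompt" pattern as an alternative to RAG.
// The <doc> tag layout and the [doc:ID] citation format are ASSUMPTIONS chosen
// for illustration, not a documented DeepSeek convention.
function buildArchivePrompt(docs, question) {
  const body = docs
    .map(({ id, title, text }) => `<doc id="${id}" title="${title.replace(/"/g, "&quot;")}">\n${text}\n</doc>`)
    .join("\n");
  return [
    "Answer using only the documents below.",
    "Cite every document you rely on as [doc:ID].",
    "If the answer is not in the documents, say so.",
    "",
    body,
    "",
    `Question: ${question}`,
  ].join("\n");
}

// Pull cited ids out of the answer (deduplicated, in order of first appearance)
// so they can be checked against the archive.
function extractCitations(answer) {
  const ids = new Set();
  for (const match of answer.matchAll(/\[doc:([^\]]+)\]/g)) {
    ids.add(match[1]);
  }
  return [...ids];
}
```

Checking that every cited id actually exists in the archive is a cheap guard against hallucinated sources.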

API Quick Start

deepseek/deepseek-v4-pro

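Install the SDK and start making API calls in minutes.

```javascript
// Uses the official OpenAI Node SDK (npm install openai). Run as an ES module,
// since the request below uses top-level await.
import OpenAI from 'openai';

// DeepSeek's API is OpenAI-compatible: point the client at DeepSeek's base URL.
const deepseek = new OpenAI({
  baseURL: 'https://api.deepseek.com',
  apiKey: process.env.DEEPSEEK_API_KEY,
});

const msg = await deepseek.chat.completions.create({
  model: 'deepseek-v4-pro',
  messages: [{ role: 'user', content: 'Optimize this Rust kernel for memory efficiency.' }],
});

// The generated reply is on the first choice.
console.log(msg.choices[0].message.content);
```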

Community Feedback

See what the community thinks about DeepSeek v4

“DeepSeek v4's reasoning mode found a concurrency bug in my Rust code that even Claude Opus missed. Truly insane.”

“The era of cost-effective 1M context is finally here. We can now run full-project refactors for pennies.”

“Seeing the model work through a 1M token codebase without losing the 'needle' is the real turning point for 2026.”

“Anthropic and OpenAI have a serious pricing problem now. DeepSeek just made frontier AI a commodity.”

“It beats GPT-5.4 in coding benchmarks while being open source. This is the biggest release of the year.”

“The memory compression is the real magic. 1T parameters on consumer-ish hardware is finally becoming real.”

Related Videos

Watch tutorials, reviews, and discussions about DeepSeek v4

“The memory efficiency is the real story here, slashing KV cache by 90% changes everything”

“Running a 1T model with this level of speed is a massive architectural win”

“The cost per million tokens makes it impossible for small startups to ignore”

“I've never seen an open source model handle 1 million tokens this cleanly”

“It feels like the gap between open and closed models has officially closed”

“DeepSeek is no longer just competing on price; they are leading in long-context reasoning”

“The native video support is surprisingly robust compared to Gemini 2.0”

“Installing this locally is surprisingly easy if you use SGLang”

“Benchmarks on HumanEval show it is essentially at parity with GPT-5”

“The context window makes RAG pipelines almost redundant for medium projects”

“Performance on coding benchmarks is currently unmatched by any other open-weight model”

“It matches or exceeds top tier closed models in massive codebase refactoring”

“The engram memory implementation is a technical marvel in this space”

“We are seeing 90% logic accuracy in Thinking Mode for Olympiad math”

“This release effectively democratizes trillion-parameter intelligence”

Supercharge your workflow with AI Automation

Automatio combines the power of AI agents, web automation, and smart integrations to help you accomplish more in less time.

Pro Tips

Expert tips to help you get the most out of DeepSeek v4 and achieve better results.

Toggle Thinking Modes

Use the standard mode for rapid chat and reserve Thinking Mode specifically for coding and logical proofs.
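This tip amounts to a per-request routing decision. The sketch below is a hypothetical keyword-based router; the keyword lists are illustrative, and it deliberately stops at returning a mode label, because this page does not say how Thinking Mode is switched on in the API (a separate model id or a request parameter are both plausible).

```javascript
// HYPOTHETICAL router for "reserve Thinking Mode for coding and proofs".
// The keyword lists are illustrative only; how Thinking Mode is actually
// enabled in a request is not shown here.
const PROOF_HINTS = /\b(prove|proof|theorem|lemma|olympiad)\b/i;
const CODE_HINTS = /```|\b(bug|compile|refactor|function|stack trace|segfault)\b/i;

// Rough keyword classification of a user prompt.
function classifyTask(prompt) {
  if (PROOF_HINTS.test(prompt)) return "proof";
  if (CODE_HINTS.test(prompt)) return "code";
  return "chat";
}

// Coding and proofs get Thinking Mode; everything else stays on the faster standard mode.
function chooseMode(prompt) {
  return classifyTask(prompt) === "chat" ? "standard" : "thinking";
}
```

A keyword router is crude but cheap; a production setup might instead let the caller choose the mode explicitly and use this only as a default.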

Leverage Context Caching

Use built-in context caching to cut input costs by up to 90% when you reuse the same long context across requests.
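A rough input-cost estimate shows why caching matters for repeated long prompts. The $1.74 per million input tokens comes from this page; billing cache hits at 10% of that rate is an assumption derived from the "up to 90%" figure, output tokens are ignored, and the session scenario is hypothetical.

```javascript
// Rough input-cost estimate for repeated long-context requests.
// $1.74 per million input tokens is the price quoted on this page. Billing cache
// hits at 10% of that rate is an ASSUMPTION derived from the "up to 90%" figure.
// Output tokens are ignored.
const INPUT_USD_PER_MTOK = 1.74;
const CACHE_HIT_DISCOUNT = 0.9;

function inputCostUSD(promptTokens, cachedTokens) {
  if (cachedTokens > promptTokens) throw new Error("cachedTokens cannot exceed promptTokens");
  const missTokens = promptTokens - cachedTokens;
  const hitPrice = INPUT_USD_PER_MTOK * (1 - CACHE_HIT_DISCOUNT);
  return (missTokens * INPUT_USD_PER_MTOK + cachedTokens * hitPrice) / 1_000_000;
}

// A session of `requests` questions against the same long prefix (e.g. a codebase).
// Assumes the first request fills the cache and every later one hits it in full.
function sessionCostUSD(prefixTokens, questionTokens, requests, useCache) {
  let total = 0;
  for (let i = 0; i < requests; i++) {
    const cached = useCache && i > 0 ? prefixTokens : 0;
    total += inputCostUSD(prefixTokens + questionTokens, cached);
  }
  return total;
}

console.log(sessionCostUSD(900_000, 1_000, 20, false).toFixed(2));
console.log(sessionCostUSD(900_000, 1_000, 20, true).toFixed(2));
```

Under these assumptions, twenty questions over a 900K-token prefix cost about $31.35 in input tokens without caching and about $4.58 with it. Caching generally rewards keeping the shared content at the start of the prompt and the changing question at the end.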

Direct Multimodal Input

Feed raw audio and video files directly into the API instead of pre-transcribing them, so the model's native multimodal architecture works with the original input.

System Prompt Optimization

Provide a clear JSON schema or explicit tool-use instructions in the system prompt to make agentic behavior more reliable.
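Concretely, that means describing each tool with a JSON Schema and stating the expected final output shape in the system prompt, then validating what comes back. The sketch assumes the OpenAI-compatible `tools` format; the `search_issues` tool, its fields, and the report shape are hypothetical examples.

```javascript
// A HYPOTHETICAL `search_issues` tool described in the OpenAI-compatible `tools`
// format (name, description, JSON Schema parameters), a system prompt that
// states when to call it, and a check on the model's final JSON reply.
const searchIssuesTool = {
  type: "function",
  function: {
    name: "search_issues",
    description: "Search the project's issue tracker and return matching issue titles.",
    parameters: {
      type: "object",
      properties: {
        query: { type: "string", description: "Free-text search terms" },
        state: { type: "string", enum: ["open", "closed"] },
      },
      required: ["query"],
      additionalProperties: false,
    },
  },
};

const systemPrompt = [
  "You are a repository maintenance agent.",
  "Call search_issues before proposing a fix; never invent issue numbers.",
  'When finished, reply with JSON only: {"summary": string, "files_changed": string[]}.',
].join("\n");

// Validate the final reply against the shape promised in the system prompt
// before passing it to downstream automation.
function parseFinalReport(raw) {
  const value = JSON.parse(raw);
  if (typeof value !== "object" || value === null || Array.isArray(value)) {
    throw new Error("reply is not a JSON object");
  }
  if (typeof value.summary !== "string") {
    throw new Error("missing string field: summary");
  }
  if (!Array.isArray(value.files_changed) || !value.files_changed.every((f) => typeof f === "string")) {
    throw new Error("files_changed must be an array of strings");
  }
  return { summary: value.summary, files_changed: value.files_changed };
}
```

`searchIssuesTool` would go in the request's `tools` array and `systemPrompt` in a `system` message; a thrown error from `parseFinalReport` is a signal to re-prompt rather than act on a malformed reply.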

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of our most-used RPA tools, both internally and externally. It saves us countless hours of work, and we realized it could do the same for other startups, so we chose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, and Automatio is the jack of all trades! It can be your scraping bot in the morning, your VA by noon, and in the evening it runs your automations. It's amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use for extracting data from any website. It allowed me to replace a developer and do the tasks myself, since they take only a few minutes to set up and then run on their own. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Related AI Models

Claude Sonnet 4.6

Anthropic

Claude Sonnet 4.6 offers frontier performance for coding and computer use with a massive 1M token context window for only $3/1M tokens.

Gemini 3 Flash

Google

Gemini 3 Flash is Google's high-speed multimodal model featuring a 1M token context window, elite 90.4% GPQA reasoning, and autonomous browser automation tools.

Kimi k2.6

Moonshot

Kimi k2.6 is Moonshot AI's 1T-parameter MoE model featuring a 256K context window, native video input, and elite performance in autonomous agentic coding.

Claude Opus 4.6

Anthropic

Claude Opus 4.6 is Anthropic's flagship model featuring a 1M token context window, Adaptive Thinking, and world-class coding and reasoning performance.

Qwen3.5-397B-A17B

Alibaba

Qwen3.5-397B-A17B is Alibaba's flagship open-weight MoE model. It features native multimodal reasoning, a 1M context window, and a 19x decoding throughput...

Gemini 3 Pro

Google

Google's Gemini 3 Pro is a multimodal powerhouse featuring a 1M token context window, native video processing, and industry-leading reasoning performance.

GPT-5.1

OpenAI

GPT-5.1 is OpenAI’s advanced reasoning flagship featuring adaptive thinking, native multimodality, and state-of-the-art performance in math and technical...

Qwen 3.7 Max

Alibaba

Qwen 3.7 Max is Alibaba’s flagship AI model for deep reasoning and autonomous agent tasks, featuring a 256K context window and top-tier coding performance.

Frequently Asked Questions

Find answers to common questions about DeepSeek v4