How to Scrape jup.ag: Jupiter DEX Web Scraper Guide

Learn how to scrape jup.ag for real-time Solana token prices, swap routes, and market volumes. Discover Jupiter's official APIs and bypass Cloudflare anti-bot.

Anti-Bot Protection Detected

- Cloudflare: Enterprise-grade WAF and bot management. Uses JavaScript challenges, CAPTCHAs, and behavioral analysis. Requires browser automation with stealth settings.

- Rate Limiting: Limits requests per IP/session over time. Can be bypassed with rotating proxies, request delays, and distributed scraping.

- Browser Fingerprinting: Identifies bots through browser characteristics: canvas, WebGL, fonts, plugins. Requires spoofing or real browser profiles.

About Jupiter

Learn what Jupiter offers and what valuable data can be extracted from it.

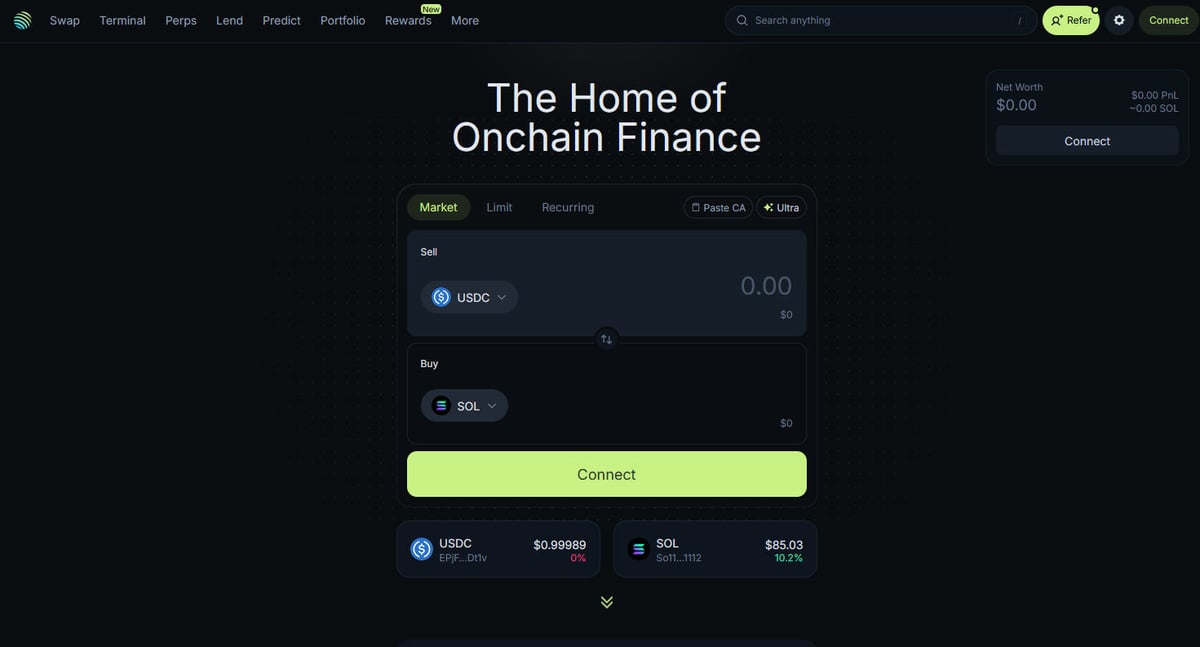

The Hub of Solana DeFi

Jupiter is the primary liquidity aggregator for the Solana blockchain, acting as a "DeFi Superapp" that optimizes trade routing across hundreds of liquidity pools to provide users with the best prices and minimal slippage. It is the central hub for Solana's on-chain finance, offering services ranging from simple token swaps to advanced features like perpetual trading with up to 250x leverage, limit orders, and dollar-cost averaging (DCA). The platform provides critical data for the ecosystem, including real-time pricing, liquidity depth, and comprehensive market metrics for thousands of assets.

Technical Architecture

The website is built on Next.js and React and behaves as a highly dynamic single-page application (SPA). Because prices and routes are calculated in real time from the current blockchain state, the frontend updates constantly via WebSockets and high-frequency API calls. For data scientists, developers, and traders, Jupiter's data is a widely used reference for tracking Solana market sentiment and liquidity shifts across the ecosystem.

Why the Data Matters

Accessing this data is essential for building trading bots, market dashboards, and conducting historical analysis on one of the fastest-growing blockchain networks. Scrapers often target Jupiter to monitor new token listings, track "whale" movements in perpetual markets, or identify price discrepancies for arbitrage. While the platform offers official APIs, direct web scraping is frequently used to capture the exact UI state and specific routing data that may not be fully exposed in public endpoints.

Why Scrape Jupiter?

Discover the business value and use cases for extracting data from Jupiter.

Real-Time Price Tracking

Monitor Solana token prices in real-time to gain an edge in the highly volatile DeFi market. Jupiter aggregates liquidity across the entire ecosystem, providing the most accurate market rates available.

Trading Bot Optimization

Feed high-frequency swap route and price impact data into automated trading bots for arbitrage or sniping. This allows for faster execution and better price discovery compared to manual trading.

Market Sentiment Analysis

Track the addition of new tokens to the Jupiter 'Strict' list to identify trending projects and community trust. Analyzing volume shifts across different assets helps in predicting broader market movements.

Liquidity Depth Monitoring

Scrape liquidity data to determine the market depth of specific SPL tokens and assess the potential for slippage. This is critical for large-scale traders and institutional liquidity providers.

Historical Performance Data

Collect daily volume and price history for tokens that may not be listed on centralized exchanges. This data is essential for backtesting trading strategies and conducting long-term market research.

Ecosystem Growth Metrics

Measure the total volume and transaction count across the Solana network by using Jupiter as a proxy. This helps developers and investors understand the adoption rate of Solana DeFi over time.

Scraping Challenges

Technical challenges you may encounter when scraping Jupiter.

JavaScript Rendering Dependency

Jupiter is built as a complex Single Page Application (SPA) using React, meaning data is not present in the initial HTML. You must use a tool that can fully render JavaScript to access prices and token lists.

Aggressive Cloudflare Protection

The site uses Cloudflare's Web Application Firewall (WAF) to block non-browser traffic and automated scripts. Getting past these challenges requires a realistic browser fingerprint and careful header management.

Dynamic Data Stream

Prices and swap routes update multiple times per second via WebSockets and high-frequency API calls. Capturing consistent snapshots of this data requires low-latency scraping infrastructure and precise timing.

Rate Limiting on Public Endpoints

Public-facing API endpoints and web routes are strictly rate-limited to prevent abuse. Exceeding these limits often results in temporary or permanent IP bans, making residential proxies a necessity.
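One way to stay under a rate limit is to space requests out on the client side. The sketch below is a minimal illustration, not Jupiter's documented policy: the `MinIntervalThrottle` class and the one-second interval are assumptions made for this example, and the real limits should be taken from Jupiter's API documentation.

```python
import time


class MinIntervalThrottle:
    """Enforce a minimum gap between calls (hypothetical helper for this guide)."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock      # injectable so the class can be tested without waiting
        self._sleep = sleep
        self._last_call = None   # time of the previous call, None before the first one

    def wait(self):
        """Block until min_interval seconds have passed since the previous call.

        Returns the number of seconds slept (0.0 if no wait was needed).
        """
        now = self._clock()
        delay = 0.0
        if self._last_call is not None:
            elapsed = now - self._last_call
            delay = max(0.0, self.min_interval - elapsed)
            if delay > 0:
                self._sleep(delay)
        self._last_call = now + delay
        return delay


# Assumed interval of one second between requests; tune to the published limits.
throttle = MinIntervalThrottle(min_interval=1.0)
```

Calling `throttle.wait()` immediately before each HTTP request keeps a single scraper process from bursting past the chosen interval.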

UI Selector Volatility

Frequent updates to the Jupiter frontend can cause CSS classes and DOM structures to change without notice. This requires scrapers to have robust, flexible selectors or frequent maintenance to avoid breakage.
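A common way to make selectors more tolerant of UI changes is to try several candidates in order and use the first one that matches. The helper below is a sketch under that assumption; `first_matching_text` is a hypothetical name, and the selectors in the usage note are placeholders, not selectors known to exist on jup.ag.

```python
def first_matching_text(page, selectors):
    """Return (selector, text) for the first selector that matches, else None.

    `page` is any object exposing a Playwright-style query_selector() method
    that returns an element with inner_text(), or None when nothing matches.
    """
    for selector in selectors:
        element = page.query_selector(selector)
        if element is not None:
            return selector, element.inner_text().strip()
    return None
```

With a Playwright page this could be called as `first_matching_text(page, ['[data-testid="token-price"]', 'div[class*="price"]'])`, where both selectors are illustrative. Logging which selector matched makes it easier to notice when the preferred one has stopped working.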

Scrape Jupiter with AI

No coding required. Extract data in minutes with AI-powered automation.

How It Works

Describe What You Need

Tell the AI what data you want to extract from Jupiter. Just type it in plain language — no coding or selectors needed.

AI Extracts the Data

Our artificial intelligence navigates Jupiter, handles dynamic content, and extracts exactly what you asked for.

Get Your Data

Receive clean, structured data ready to export as CSV, JSON, or send directly to your apps and workflows.

Why Use AI for Scraping

AI makes it easy to scrape Jupiter without writing any code: describe the data you want in plain language and the platform extracts it automatically.

- Full Headless Rendering: Automatio naturally handles the heavy JavaScript rendering of the Jupiter interface, ensuring you see the same data as a human user. You don't have to worry about missing data that only loads after the initial page view.

- Smart Proxy Rotation: Built-in residential proxy rotation allows you to bypass Cloudflare and IP-based rate limiting effortlessly. This ensures your scraping sessions remain uninterrupted even when polling data at high frequencies.

- Visual Data Selection: Easily select the specific token prices, volumes, or routes you want to scrape using a point-and-click interface. There is no need to write complex XPath or CSS selectors to target dynamic elements.

- Automated Scheduling: Set your Jupiter scrapers to run on a precise schedule, such as every minute or every hour, to maintain a fresh dataset. This is perfect for building real-time dashboards or automated price alerts.

- Direct Data Integration: Send your scraped DeFi data directly to Google Sheets, Airtable, or your own database via Webhooks. This eliminates manual data entry and streamlines your trading or research workflow.

No-Code Web Scrapers for Jupiter

Point-and-click alternatives to AI-powered scraping

Several no-code tools like Browse.ai, Octoparse, Axiom, and ParseHub can help you scrape Jupiter. These tools use visual interfaces to select elements, but they come with trade-offs compared to AI-powered solutions.

Typical Workflow with No-Code Tools

- Install browser extension or sign up for the platform

- Navigate to the target website and open the tool

- Point-and-click to select data elements you want to extract

- Configure CSS selectors for each data field

- Set up pagination rules to scrape multiple pages

- Handle CAPTCHAs (often requires manual solving)

- Configure scheduling for automated runs

- Export data to CSV, JSON, or connect via API

Common Challenges

- Learning curve: Understanding selectors and extraction logic takes time

- Selectors break: Website changes can break your entire workflow

- Dynamic content issues: JavaScript-heavy sites often require complex workarounds

- CAPTCHA limitations: Most tools require manual intervention for CAPTCHAs

- IP blocking: Aggressive scraping can get your IP banned

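Code Examples

Python + Requests

```python
import requests


def get_jupiter_price(token_address):
    # Using the official Jupiter Price API is the most reliable method.
    # The V2 path below is the one this guide was written against; confirm the
    # current version in Jupiter's developer docs before relying on it.
    url = "https://api.jup.ag/price/v2"
    headers = {
        "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",
        "Accept": "application/json",
    }

    try:
        # A timeout keeps the script from hanging on a stalled connection
        response = requests.get(url, params={"ids": token_address}, headers=headers, timeout=10)
        response.raise_for_status()
        data = response.json()

        # Guard against a missing or null "data" object in the response
        price_info = (data.get("data") or {}).get(token_address)
        if price_info:
            print(f"Token: {token_address} | Price: ${price_info['price']}")
        else:
            print(f"No price returned for {token_address}")
    except (requests.RequestException, ValueError) as e:
        print(f"An error occurred: {e}")


# Example: Fetching SOL price
get_jupiter_price("So11111111111111111111111111111111111111112")
```

When to Use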

Best for static HTML pages where content is loaded server-side. The fastest and simplest approach when JavaScript rendering isn't required.

Advantages

- Fastest execution (no browser overhead)

- Lowest resource consumption

- Easy to parallelize (thread pools with requests, or asyncio with an async HTTP client)

- Great for APIs and static pages

Limitations

- Cannot execute JavaScript

- Fails on SPAs and dynamic content

- May struggle with complex anti-bot systems
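Because `requests` is a blocking library, a thread pool is a simple way to fetch several tokens at once. The following is a minimal sketch: `fetch_many` is a hypothetical helper, and `fetch_one` stands for any function that takes a token address and returns its data (for example, a variant of the price function that returns the value instead of printing it).

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_many(fetch_one, token_addresses, max_workers=4):
    """Call fetch_one(address) concurrently.

    Returns a dict mapping each address to its result, or to the exception
    that was raised for it, so one failure does not hide the other results.
    """
    results = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {addr: pool.submit(fetch_one, addr) for addr in token_addresses}
        for addr, future in futures.items():
            try:
                results[addr] = future.result()
            except Exception as exc:
                results[addr] = exc
    return results
```

A small `max_workers` value is deliberate here: more parallelism also means hitting rate limits sooner.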

Python + Playwright

```python
from playwright.sync_api import sync_playwright


def scrape_jupiter_tokens():
    with sync_playwright() as p:
        # Launch a browser that can render the Next.js frontend
        browser = p.chromium.launch(headless=True)
        context = browser.new_context(
            user_agent="Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"
        )
        page = context.new_page()

        # The page keeps polling for live data, so "networkidle" may never be
        # reached; wait for the DOM instead and then for the rows themselves.
        page.goto("https://jup.ag/tokens", wait_until="domcontentloaded")

        # Wait for the token list items to render in the DOM
        # Note: these selectors are placeholders and must be updated based on the current UI build
        page.wait_for_selector(".token-item")
        tokens = page.query_selector_all(".token-item")

        for token in tokens[:10]:
            name = token.query_selector(".token-name")
            price = token.query_selector(".token-price")
            # Skip rows where either element is missing instead of crashing
            if name and price:
                print(f"{name.inner_text()}: {price.inner_text()}")

        browser.close()


scrape_jupiter_tokens()
```

Python + Scrapy

```python
import json

import scrapy


class JupiterTokenSpider(scrapy.Spider):
    name = 'jupiter_tokens'

    # Directly hitting the token list JSON endpoint used by the frontend
    start_urls = ['https://token.jup.ag/all']

    def parse(self, response):
        # The response is a raw JSON list. The "all" list is not limited to
        # verified tokens, so filter it before treating entries as trusted.
        tokens = json.loads(response.text)

        for token in tokens[:100]:
            yield {
                'symbol': token.get('symbol'),
                'name': token.get('name'),
                'address': token.get('address'),
                'decimals': token.get('decimals'),
                'logoURI': token.get('logoURI'),
            }
```

Node.js + Puppeteer

```javascript
const puppeteer = require('puppeteer');

(async () => {
  const browser = await puppeteer.launch({ headless: true });

  try {
    const page = await browser.newPage();

    // Set a realistic User-Agent to help bypass basic filters
    await page.setUserAgent('Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36');

    // Navigate to the main swap page
    await page.goto('https://jup.ag/', { waitUntil: 'networkidle2' });

    // Example of extracting a price element using a partial selector.
    // This matches the first div whose class contains "price"; verify that it
    // is the element you expect in the current UI build.
    const solPrice = await page.evaluate(() => {
      const element = document.querySelector('div[class*="price"]');
      return element ? element.innerText : 'Price not found';
    });

    console.log(`Live SOL Price observed in UI: ${solPrice}`);
  } finally {
    // Close the browser even if navigation or extraction fails
    await browser.close();
  }
})();
```

What You Can Do With Jupiter Data

Explore practical applications and insights from Jupiter data.

Use Automatio to extract data from Jupiter and build these applications without writing code.

- Price Arbitrage Alert System

  Identify price differences between Jupiter and other Solana DEXs to execute profitable trades.

  - Scrape real-time swap rates from Jupiter's Price API.

  - Compare rates with Orca and Raydium liquidity pools.

  - Set up automated alerts or execution hooks for arbitrage opportunities.

- Solana Market Health Dashboard

  Build a macro-level view of Solana DeFi activity for investors.

  - Aggregate 24h volume and TVL data for top tokens.

  - Categorize tokens by sectors (Meme, AI, RWA).

  - Visualize liquidity shifts across different asset classes over time.

- New Token Listing Sniper

  Detect and analyze new tokens appearing on Jupiter's verified list immediately.

  - Regularly scrape the token list endpoint.

  - Diff the new results against a local database to find new additions.

  - Analyze initial liquidity and volume to assess token potential.

- Whale and Perps Tracker

  Monitor large positions and funding rates in the Jupiter Perpetuals market.

  - Scrape open interest and funding rate data from the Perps section.

  - Track large transaction logs to identify wallet behavior.

  - Build sentiment models based on long/short ratios of major assets.

- Yield Aggregation Service

  Provide users with the best lending rates available across Jupiter Lend vaults.

  - Scrape APY data for various stablecoins and SOL pairs.

  - Calculate net yield after estimated platform fees.

  - Automate rebalancing recommendations for portfolio optimization.
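The "diff the new results against a local database" step in the New Token Listing Sniper can be sketched as a comparison keyed on the token's mint address. This is a minimal illustration: `find_new_tokens` is a hypothetical helper, in-memory lists stand in for the database, and the `address` and `symbol` keys are the ones used in the Scrapy example rather than a guaranteed schema.

```python
def find_new_tokens(previous, current):
    """Return the entries of `current` whose 'address' is absent from `previous`.

    Both arguments are lists of dicts that each carry an 'address' key.
    The order of `current` is preserved in the result.
    """
    known = {token["address"] for token in previous}
    return [token for token in current if token["address"] not in known]


# Illustrative data only; real mint addresses are base58 strings.
yesterday = [{"address": "MintA", "symbol": "AAA"}]
today = [
    {"address": "MintA", "symbol": "AAA"},
    {"address": "MintB", "symbol": "BBB"},
]
new_listings = find_new_tokens(yesterday, today)
```

Keying on the address rather than the symbol matters because symbols are not unique on Solana, while mint addresses are.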

Pro Tips

Expert advice for successfully extracting data from Jupiter.

Prioritize Official API Endpoints

Whenever possible, use Jupiter's official V6 Quote or Price APIs instead of scraping the UI. These endpoints are designed for high-performance programmatic access and are generally more stable.

Implement Exponential Backoff

When your requests hit a rate limit, use a strategy that progressively increases the wait time between retries. This helps prevent your IP from being flagged as malicious by Jupiter's security layer.
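A minimal sketch of this strategy follows. The function name `retry_with_backoff`, its default values, and the jitter factor are assumptions chosen for illustration; they are not values recommended by Jupiter.

```python
import random
import time


def retry_with_backoff(call, max_attempts=5, base_delay=1.0, max_delay=30.0,
                       jitter=0.1, should_retry=lambda exc: True, sleep=time.sleep):
    """Run call() and retry on failure, doubling the wait after each attempt.

    The wait before retry number n (counting from 0) is base_delay * 2**n,
    capped at max_delay, plus up to `jitter` times that value of random extra
    time so that parallel workers do not retry in lockstep.
    The last exception is re-raised once max_attempts is exhausted or when
    should_retry(exc) returns False.
    """
    for attempt in range(max_attempts):
        try:
            return call()
        except Exception as exc:
            is_last_attempt = attempt == max_attempts - 1
            if is_last_attempt or not should_retry(exc):
                raise
            delay = min(max_delay, base_delay * (2 ** attempt))
            sleep(delay + random.uniform(0, delay * jitter))
```

In practice `call` would wrap a single HTTP request that raises on a 429 response, and `should_retry` would return `False` for errors that retrying cannot fix, such as a 404.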

Filter via the Strict List

Scrape the Jupiter 'Strict' token list to ensure you are only monitoring verified assets. This is an effective way to filter out the low-liquidity rug pulls and scam tokens that appear in the 'All' list.

Use High-Quality Residential Proxies

Avoid using datacenter proxies as they are often pre-blacklisted by Cloudflare. Residential or mobile proxies carry more trust and are less likely to trigger CAPTCHAs or 403 Forbidden errors.

Monitor WebSocket Traffic

Use your browser's network inspector to find the WebSocket connections Jupiter uses for live updates. Emulating these connections can be more efficient than reloading the page for real-time price feeds.
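The same inspection can be done programmatically. The sketch below uses Playwright's `websocket` page event and the `framereceived` event on each socket to print incoming frames; `log_websocket_frames` and `main` are hypothetical names, the ten-second observation window is arbitrary, and whether a given page opens any WebSocket at all is something to verify rather than assume.

```python
def log_websocket_frames(page, on_frame):
    """Report every WebSocket frame the page receives to on_frame(url, payload)."""

    def handle_socket(ws):
        # Fires once per frame received on this socket; payload is str or bytes
        ws.on("framereceived", lambda payload: on_frame(ws.url, payload))

    # Fires once for each WebSocket the page opens
    page.on("websocket", handle_socket)


def main():
    # Imported here so the helper above can be reused without a browser installed
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        page = browser.new_page()
        # Register the listener before navigating so early sockets are not missed
        log_websocket_frames(page, lambda url, payload: print(url, str(payload)[:120]))
        page.goto("https://jup.ag/", wait_until="domcontentloaded")
        page.wait_for_timeout(10_000)  # observe traffic for ten seconds
        browser.close()
```

Calling `main()` prints the socket URL and the first 120 characters of each frame, which is usually enough to identify the message format before writing a dedicated client.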

Account for Token Decimals

Always extract the 'decimals' field from token metadata when scraping mint addresses. This is crucial for correctly converting raw on-chain integers into human-readable currency amounts.
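The conversion itself is a division by ten raised to the token's `decimals` value. The helper below is a small sketch (`raw_to_ui_amount` is a hypothetical name) that uses `Decimal` rather than floats to avoid rounding errors on large amounts.

```python
from decimal import Decimal


def raw_to_ui_amount(raw_amount, decimals):
    """Convert a raw on-chain integer amount into a human-readable Decimal."""
    return Decimal(raw_amount) / (Decimal(10) ** decimals)


# SOL uses 9 decimals, so 1_500_000_000 raw units (lamports) is 1.5 SOL.
sol_amount = raw_to_ui_amount(1_500_000_000, 9)

# USDC uses 6 decimals, so 2_500_000 raw units is 2.5 USDC.
usdc_amount = raw_to_ui_amount(2_500_000, 6)
```

Using the wrong `decimals` value shifts the result by whole orders of magnitude, which is why the field should come from the token's metadata rather than being hard-coded per script.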

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Related Web Scraping

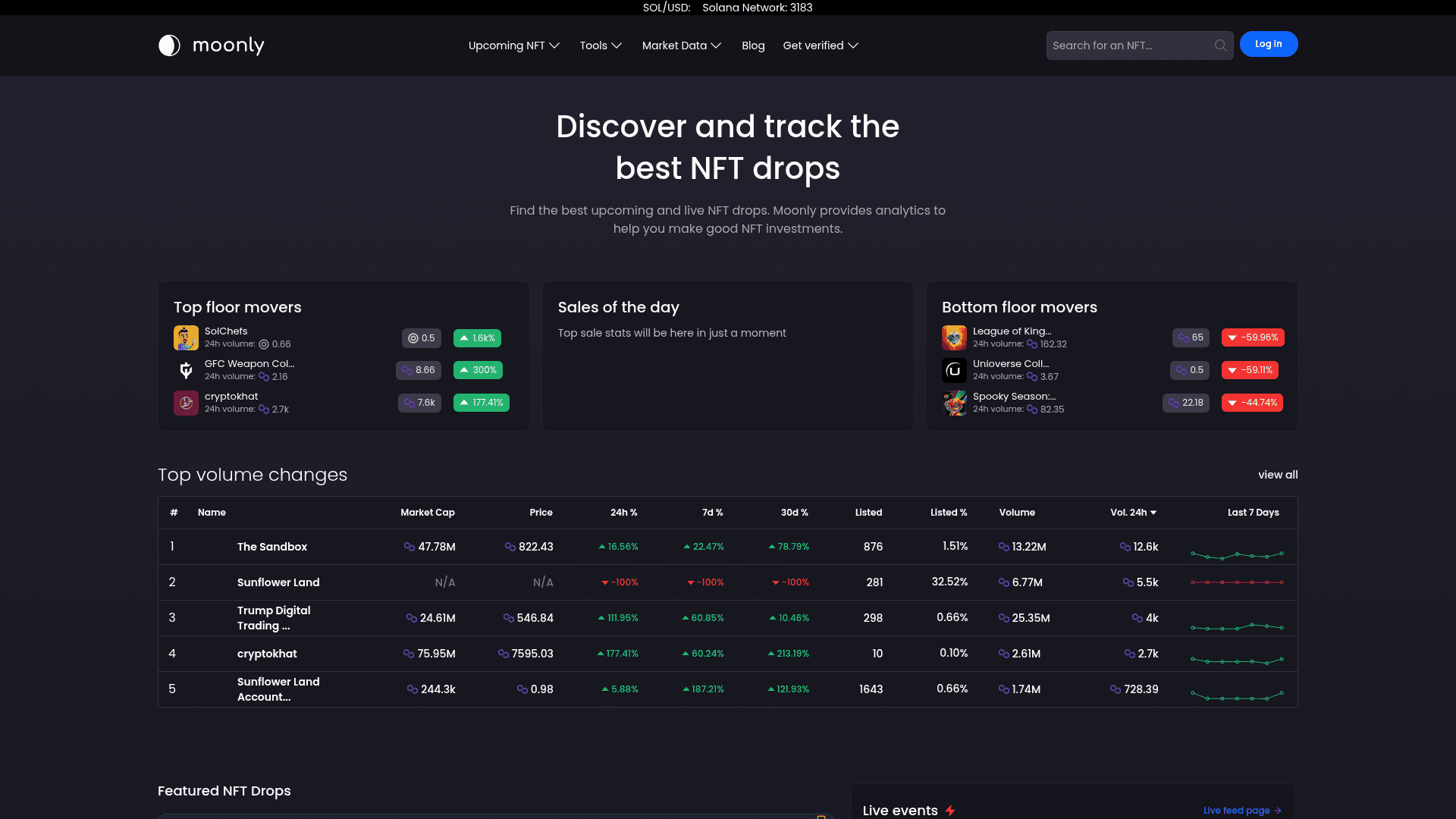

How to Scrape Moon.ly | Step-by-Step NFT Data Extraction Guide

How to Scrape Yahoo Finance: Extract Stock Market Data

How to Scrape Rocket Mortgage: A Comprehensive Guide

How to Scrape Open Collective: Financial and Contributor Data Guide

How to Scrape Indiegogo: The Ultimate Crowdfunding Data Extraction Guide

How to Scrape ICO Drops: Comprehensive Crypto Data Guide

How to Scrape Crypto.com: Comprehensive Market Data Guide

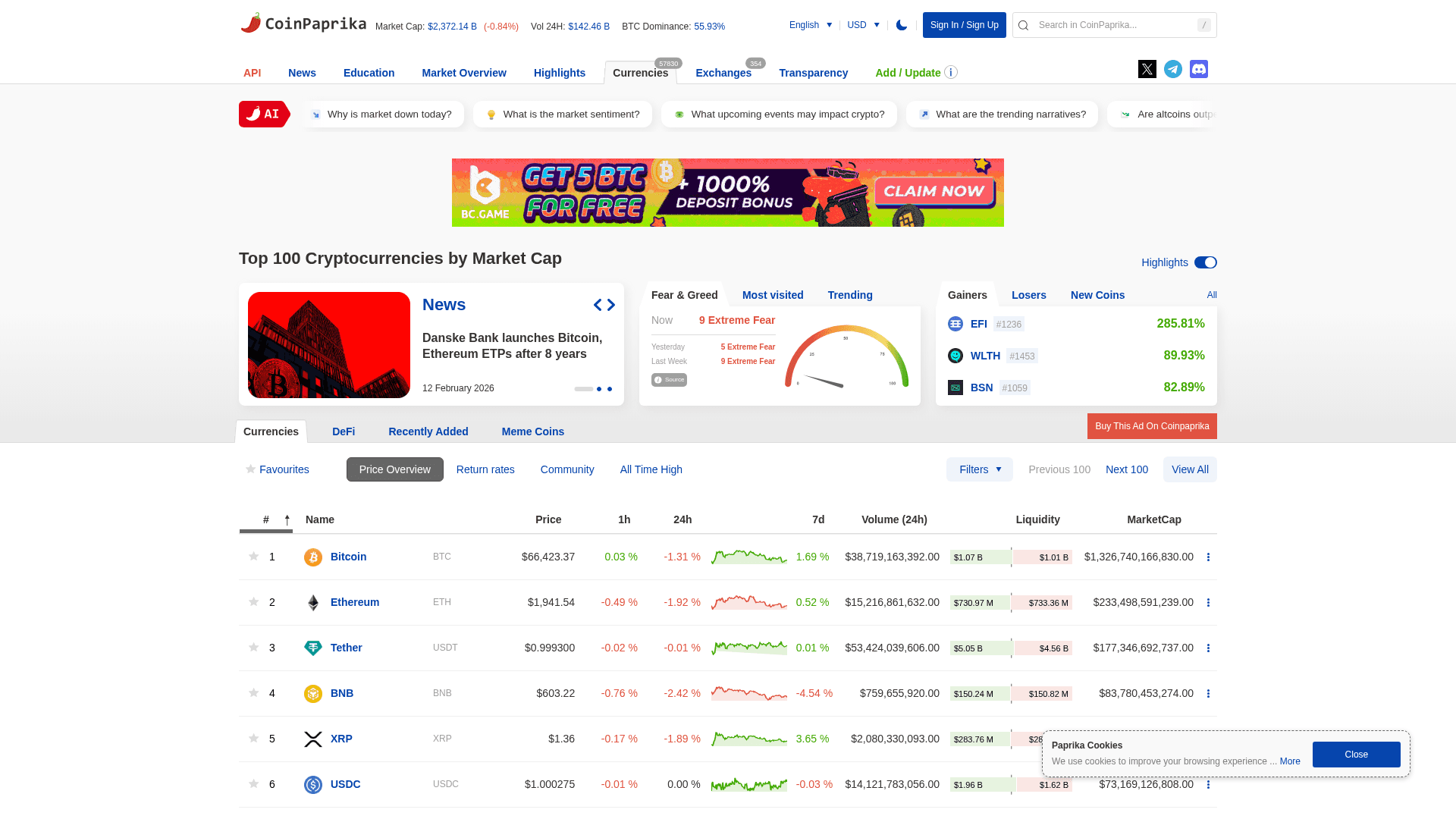

How to Scrape Coinpaprika: Crypto Market Data Extraction Guide

Frequently Asked Questions

Find answers to common questions about Jupiter