How to Scrape We Work Remotely: The Ultimate Guide

Learn how to scrape job listings from We Work Remotely. Extract job titles, companies, salaries, and more for market research or your own job aggregator.

Anti-Bot Protection Detected

- Cloudflare: Enterprise-grade WAF and bot management. Uses JavaScript challenges, CAPTCHAs, and behavioral analysis. Requires browser automation with stealth settings.

- IP Blocking: Blocks known datacenter IPs and flagged addresses. Requires residential or mobile proxies to circumvent effectively.

- Rate Limiting: Limits requests per IP/session over time. Can be bypassed with rotating proxies, request delays, and distributed scraping.

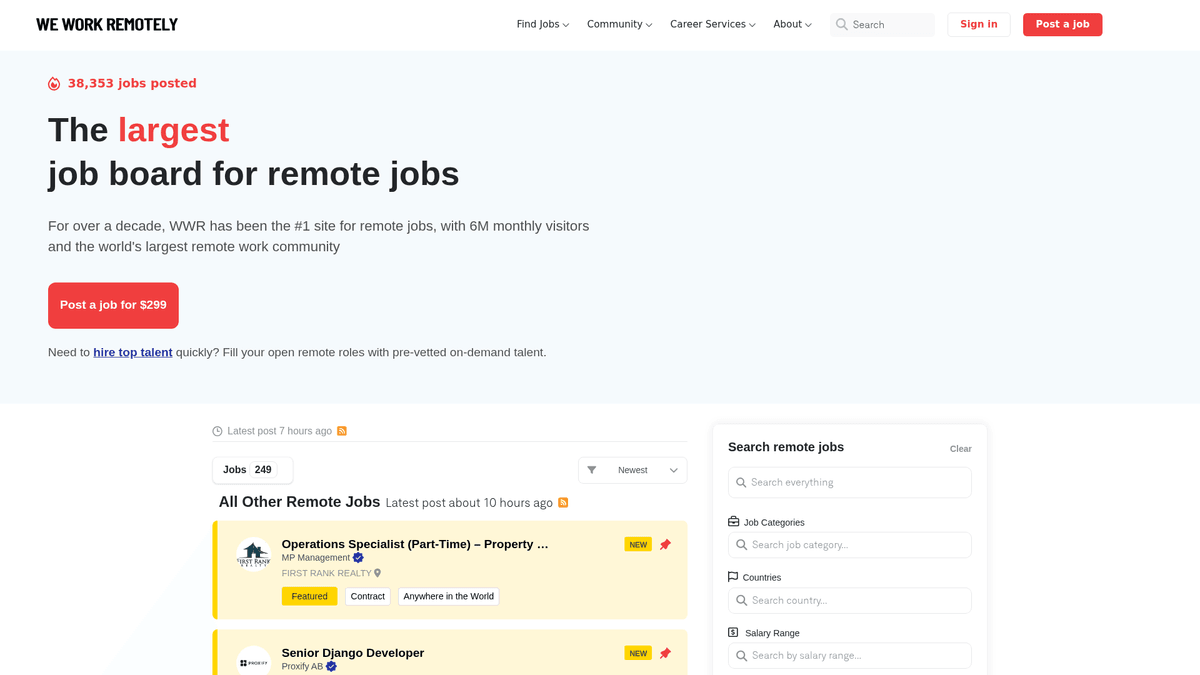

About We Work Remotely

Learn what We Work Remotely offers and what valuable data can be extracted from it.

The Hub for Global Remote Talent

We Work Remotely (WWR) is the most established remote work community globally, boasting over 6 million monthly visitors. It serves as a primary destination for companies moving away from traditional office-based models, offering a diverse array of listings in software development, design, marketing, and customer support.

High-Quality Structured Data

The platform is known for its highly structured data. Each listing typically contains specific regional requirements, salary ranges, and detailed company profiles. This structure makes it an ideal target for web scraping, as the data is consistent and easy to categorize for secondary use cases.

Strategic Value for Data Professionals

For recruiters and market researchers, WWR is a goldmine. Scraping this site allows for real-time tracking of hiring trends, salary benchmarking across different technical sectors, and lead generation for B2B services targeting remote-first companies. It provides a transparent view of the global remote labor market.

Why Scrape We Work Remotely?

Discover the business value and use cases for extracting data from We Work Remotely.

Market Demand Analysis

Track which programming languages, frameworks, and soft skills are trending across the remote work landscape to stay ahead of market shifts.

Salary Benchmarking

Extract salary data from listings to create comprehensive compensation reports for remote-first companies across various technical and creative roles.

B2B Lead Generation

Identify companies that are actively scaling their remote teams to offer specialized services like HR tech, payroll solutions, or recruitment consulting.

Niche Job Aggregation

Power a specialized job board by filtering and aggregating high-quality remote listings into specific niches like Web3, AI, or Design.

Competitive Intelligence

Monitor your competitors' hiring velocity and geographic preferences to understand their growth strategy and potential expansion areas.

Scraping Challenges

Technical challenges you may encounter when scraping We Work Remotely.

Cloudflare Bot Detection

WWR utilizes Cloudflare's security layer, which can detect and block standard automated scripts that do not properly mimic human browser behavior.

Unstructured Salary Data

Salary information is often embedded within the text descriptions in varied formats, requiring complex regex patterns to extract and normalize into numerical values.
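As a rough illustration of the regex approach described above, the sketch below pulls a salary range out of free text and normalizes it to integers. The formats it covers ("$80,000 - $120,000", "$80k-$120k", "80-120k") are assumptions for demonstration, not a list of what WWR listings actually contain, and `parse_salary_range` is a hypothetical helper name.

```python
import re
from typing import Optional, Tuple

# Matches ranges such as "$80,000 - $120,000", "$80k-$120k", or "90k to 110k".
# These formats are assumed for illustration; real listings may need more patterns.
SALARY_RANGE = re.compile(
    r"\$?\s*(\d[\d,]*(?:\.\d+)?)\s*(k)?\s*(?:[-\u2013]|to)\s*\$?\s*(\d[\d,]*(?:\.\d+)?)\s*(k)?",
    re.IGNORECASE,
)


def _to_number(digits: str, k_suffix: Optional[str]) -> int:
    """Convert '80,000' or '80' (with an optional 'k' suffix) to an integer."""
    value = float(digits.replace(",", ""))
    return int(value * 1000) if k_suffix else int(value)


def parse_salary_range(text: str) -> Optional[Tuple[int, int]]:
    """Return (low, high) for the first salary range found in text, else None."""
    match = SALARY_RANGE.search(text)
    if match is None:
        return None
    low = _to_number(match.group(1), match.group(2))
    high = _to_number(match.group(3), match.group(4))
    # "80-120k" style: the 'k' suffix appears only on the upper bound.
    if match.group(4) and not match.group(2) and low < 1000:
        low *= 1000
    return (low, high)


print(parse_salary_range("Salary: $80,000 - $120,000 per year"))
print(parse_salary_range("80-120k USD"))
```

A pattern this loose will also match unrelated ranges such as "5 to 10 years", so in practice you would likely add a plausibility filter (for example, a minimum value) before storing the result.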

Dynamic Content Loading

Loading additional listings through the 'Load More' pagination triggers asynchronous requests, so the scraper must wait for each batch to finish loading before extracting; otherwise listings can be missed or duplicated.

Varying HTML Structures

Featured listings and standard listings sometimes use slightly different CSS classes, necessitating flexible selectors to ensure no jobs are missed.
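One way to keep selectors flexible is to match a set of candidate class names instead of a single one. The sketch below does this with the standard library's `HTMLParser`; the class name `feature` comes from the code examples in this guide, while `featured` and `job` are placeholder variants you would replace after inspecting the live markup.

```python
from html.parser import HTMLParser
from typing import List

# "feature" appears in this guide's examples; the other names are hypothetical.
LISTING_CLASSES = {"feature", "featured", "job"}


class ListingFinder(HTMLParser):
    """Records the class list of every <li> that matches any listing class."""

    def __init__(self) -> None:
        super().__init__()
        self.matches: List[List[str]] = []

    def handle_starttag(self, tag, attrs):
        if tag != "li":
            return
        classes = (dict(attrs).get("class") or "").split()
        if LISTING_CLASSES.intersection(classes):
            self.matches.append(classes)


def find_listing_classes(html: str) -> List[List[str]]:
    """Return the class lists of all <li> elements that look like job listings."""
    parser = ListingFinder()
    parser.feed(html)
    return parser.matches


sample = (
    '<ul><li class="feature">A</li>'
    '<li class="featured highlight">B</li>'
    '<li class="view-all">C</li></ul>'
)
print(find_listing_classes(sample))
```

With BeautifulSoup or Scrapy, the same idea can be expressed as a comma-separated CSS selector such as `li.feature, li.featured`.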

Scrape We Work Remotely with AI

No coding required. Extract data in minutes with AI-powered automation.

How It Works

Describe What You Need

Tell the AI what data you want to extract from We Work Remotely. Just type it in plain language — no coding or selectors needed.

AI Extracts the Data

Our artificial intelligence navigates We Work Remotely, handles dynamic content, and extracts exactly what you asked for.

Get Your Data

Receive clean, structured data ready to export as CSV, JSON, or send directly to your apps and workflows.

Why Use AI for Scraping

You can scrape We Work Remotely without writing any code. Describe the data you want in plain language, and the AI-powered platform extracts it automatically.

- Automated Anti-Bot Handling: Automatio natively handles proxy rotation and browser fingerprinting to bypass Cloudflare and other security measures without manual configuration.

- Visual No-Code Selection: Easily select job titles, company names, and descriptions using a point-and-click interface rather than writing complex CSS or XPath selectors.

- Scheduled Monitoring: Set your scraper to run every hour or day to automatically detect and extract the newest remote job postings the moment they go live.

- Seamless Data Export: Directly push your scraped job listings to Google Sheets, Airtable, or a Webhook to feed your application or CRM in real-time.

No-Code Web Scrapers for We Work Remotely

Point-and-click alternatives to AI-powered scraping

Several no-code tools like Browse.ai, Octoparse, Axiom, and ParseHub can help you scrape We Work Remotely. These tools use visual interfaces to select elements, but they come with trade-offs compared to AI-powered solutions.

Typical Workflow with No-Code Tools

- Install browser extension or sign up for the platform

- Navigate to the target website and open the tool

- Point-and-click to select data elements you want to extract

- Configure CSS selectors for each data field

- Set up pagination rules to scrape multiple pages

- Handle CAPTCHAs (often requires manual solving)

- Configure scheduling for automated runs

- Export data to CSV, JSON, or connect via API

Common Challenges

- Learning curve: Understanding selectors and extraction logic takes time

- Selectors break: Website changes can break your entire workflow

- Dynamic content issues: JavaScript-heavy sites often require complex workarounds

- CAPTCHA limitations: Most tools require manual intervention for CAPTCHAs

- IP blocking: Aggressive scraping can get your IP banned

How to Scrape We Work Remotely with Code

Python + Requests

    import requests
    from bs4 import BeautifulSoup

    url = 'https://weworkremotely.com/'
    headers = {'User-Agent': 'Mozilla/5.0'}

    try:
        # Send request with custom headers; fail fast on timeouts and HTTP errors
        response = requests.get(url, headers=headers, timeout=10)
        response.raise_for_status()
        soup = BeautifulSoup(response.text, 'html.parser')

        # Target job listings
        jobs = soup.find_all('li', class_='feature')
        for job in jobs:
            title = job.find('span', class_='title')
            company = job.find('span', class_='company')
            # Skip list items that lack either field
            if title and company:
                print(f'Job: {title.text.strip()} | Company: {company.text.strip()}')
    except requests.RequestException as e:
        print(f'Error: {e}')

When to Use

Best for static HTML pages where content is loaded server-side. The fastest and simplest approach when JavaScript rendering isn't required.

Advantages

- Fastest execution (no browser overhead)

- Lowest resource consumption

- Easy to parallelize with a thread pool

- Great for APIs and static pages

Limitations

- Cannot execute JavaScript

- Fails on SPAs and dynamic content

- May struggle with complex anti-bot systems

Python + Playwright

    import asyncio
    from playwright.async_api import async_playwright

    async def run():
        async with async_playwright() as p:
            # Launch headless browser
            browser = await p.chromium.launch(headless=True)
            page = await browser.new_page()
            await page.goto('https://weworkremotely.com/')

            # Wait for the main container to load
            await page.wait_for_selector('.jobs-container')

            jobs = await page.query_selector_all('li.feature')
            for job in jobs:
                title = await job.query_selector('.title')
                if title:
                    print(await title.inner_text())

            await browser.close()

    asyncio.run(run())

Python + Scrapy

    import scrapy

    class WwrSpider(scrapy.Spider):
        name = 'wwr_spider'
        start_urls = ['https://weworkremotely.com/']

        def parse(self, response):
            # Iterate through listing items
            for job in response.css('li.feature'):
                yield {
                    'title': job.css('span.title::text').get(),
                    'company': job.css('span.company::text').get(),
                    'url': response.urljoin(job.css('a::attr(href)').get()),
                }

Node.js + Puppeteer

    const puppeteer = require('puppeteer');

    (async () => {
      const browser = await puppeteer.launch();
      const page = await browser.newPage();
      await page.goto('https://weworkremotely.com/');

      // Extract data using evaluate
      const jobs = await page.evaluate(() => {
        return Array.from(document.querySelectorAll('li.feature')).map(li => ({
          title: li.querySelector('.title')?.innerText.trim(),
          company: li.querySelector('.company')?.innerText.trim()
        }));
      });

      console.log(jobs);
      await browser.close();
    })();

What You Can Do With We Work Remotely Data

Explore practical applications and insights from We Work Remotely data.

Remote Job Aggregator

Build a specialized job search platform for specific technical niches like Rust or AI.

How to implement:

- Scrape WWR daily for new listings

- Filter by specific keywords and categories

- Store data in a searchable database

- Automate social media postings for new jobs

Salary Trend Analysis

Analyze remote salary data to determine global compensation benchmarks across roles.

How to implement:

- Extract salary fields from job descriptions

- Normalize data into a single currency

- Segment by job role and experience level

- Generate quarterly market reports

Lead Generation for HR Tech

Identify companies aggressively hiring remote teams to sell HR, payroll, and benefit software.

How to implement:

- Monitor the 'Top 100 Remote Companies' list

- Track frequency of new job postings

- Identify decision-makers at hiring companies

- Outreach with tailored B2B solutions

Historical Hiring Trends

Analyze long-term data to understand how remote work demand shifts seasonally or economically.

How to implement:

- Archive listings over 12+ months

- Calculate growth rates per category

- Visualize trends using BI tools

- Predict future skill demand

Pro Tips

Expert advice for successfully extracting data from We Work Remotely.

Parse the RSS Feeds First

Check the /remote-jobs.rss endpoints for various categories; they offer structured XML that is significantly easier and faster to parse than the main HTML.
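To show why the feed route is simpler, here is a minimal sketch that parses an RSS 2.0 document with the standard library. The sample XML is made up for illustration and its element names follow the generic RSS 2.0 layout; the fields in the real WWR feeds may differ, so inspect a feed before relying on them.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List

# A trimmed, invented RSS 2.0 document standing in for a real feed response.
SAMPLE_FEED = """<rss version="2.0">
  <channel>
    <title>Example feed</title>
    <item>
      <title>Acme Corp: Senior Backend Engineer</title>
      <link>https://example.com/jobs/1</link>
      <pubDate>Mon, 06 Jan 2025 12:00:00 +0000</pubDate>
    </item>
    <item>
      <title>Globex: Product Designer</title>
      <link>https://example.com/jobs/2</link>
      <pubDate>Tue, 07 Jan 2025 09:30:00 +0000</pubDate>
    </item>
  </channel>
</rss>"""


def parse_feed(xml_text: str) -> List[Dict[str, str]]:
    """Return one dict per <item>, with empty strings for missing fields."""
    root = ET.fromstring(xml_text)
    jobs = []
    for item in root.iter("item"):
        jobs.append({
            "title": item.findtext("title", default="").strip(),
            "link": item.findtext("link", default="").strip(),
            "published": item.findtext("pubDate", default="").strip(),
        })
    return jobs


for job in parse_feed(SAMPLE_FEED):
    print(job["title"], "->", job["link"])
```

In a real run you would fetch the feed body over HTTP and pass the response text to `parse_feed` in place of `SAMPLE_FEED`.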

Use Category-Specific URLs

Target URLs like /categories/remote-programming-jobs to get cleaner results and avoid the noise of the main landing page.

Rotate Residential Proxies

To avoid rate limiting on detail pages, use residential proxies which are less likely to be flagged as bots compared to datacenter IPs.
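Proxy rotation can be as simple as cycling through a pool in round-robin order. The sketch below builds proxy mappings in the shape that the Requests library accepts through its `proxies` argument; the proxy URLs are placeholders, and `proxy_rotation` is a hypothetical helper name.

```python
from itertools import cycle
from typing import Dict, Iterator, List

# Placeholder endpoints; substitute the ones your proxy provider gives you.
PROXIES = [
    "http://user:pass@proxy-1.example.com:8000",
    "http://user:pass@proxy-2.example.com:8000",
    "http://user:pass@proxy-3.example.com:8000",
]


def proxy_rotation(proxies: List[str]) -> Iterator[Dict[str, str]]:
    """Yield Requests-style proxy mappings in round-robin order, indefinitely."""
    for proxy in cycle(proxies):
        yield {"http": proxy, "https": proxy}


rotation = proxy_rotation(PROXIES)
for _ in range(4):
    # Each request would pick up the next mapping, e.g.
    # requests.get(url, proxies=next(rotation), timeout=10)
    print(next(rotation)["https"])
```

Round-robin is the simplest policy; a production setup would likely also drop proxies that start returning errors or challenge pages.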

Target JSON-LD Metadata

Many job pages include structured data in JSON-LD format within the HTML, which provides clean, ready-to-use data for job titles and descriptions.
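A minimal sketch of JSON-LD extraction, using only the standard library, might look like the following. The sample page and its `JobPosting` fields are invented for illustration and follow the general schema.org shape; whether a given WWR page carries these exact fields is an assumption to verify against the live HTML.

```python
import json
from html.parser import HTMLParser
from typing import Any, List


class JsonLdExtractor(HTMLParser):
    """Collects the parsed contents of <script type="application/ld+json"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self._in_json_ld = False
        self._buffer: List[str] = []
        self.documents: List[Any] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self._in_json_ld = False
            try:
                self.documents.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # Skip malformed blocks instead of aborting the page.


def extract_json_ld(html: str) -> List[Any]:
    """Return every JSON-LD document embedded in the given HTML."""
    parser = JsonLdExtractor()
    parser.feed(html)
    return parser.documents


# Invented sample page; field names follow schema.org's JobPosting type.
SAMPLE_PAGE = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "JobPosting",
 "title": "Senior Backend Engineer",
 "hiringOrganization": {"@type": "Organization", "name": "Acme Corp"}}
</script>
</head><body></body></html>"""

for doc in extract_json_ld(SAMPLE_PAGE):
    print(doc["title"], "at", doc["hiringOrganization"]["name"])
```

Because the data arrives as parsed JSON, this route avoids CSS selectors entirely and tends to survive layout changes better than HTML scraping.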

Randomize Request Intervals

Implement human-like delays between clicking on different job listings to prevent the site's security from identifying a robotic pattern.
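The idea can be sketched as a small crawl loop that sleeps for a random interval between requests. The 2 to 6 second window is an arbitrary assumption, not a threshold published by the site, and `fetch` stands in for whatever function you use to download a page.

```python
import random
import time
from typing import Callable, Iterable, List, Optional


def next_delay(min_seconds: float = 2.0, max_seconds: float = 6.0,
               rng: Optional[random.Random] = None) -> float:
    """Return a delay drawn uniformly from [min_seconds, max_seconds]."""
    if min_seconds > max_seconds:
        raise ValueError("min_seconds must not exceed max_seconds")
    source = rng if rng is not None else random
    return source.uniform(min_seconds, max_seconds)


def crawl(urls: Iterable[str], fetch: Callable[[str], str],
          sleep: Callable[[float], None] = time.sleep) -> List[str]:
    """Call fetch(url) for each URL, pausing a random interval between calls."""
    results = []
    for index, url in enumerate(urls):
        if index > 0:
            sleep(next_delay())  # No pause before the very first request.
        results.append(fetch(url))
    return results
```

Passing `sleep` as a parameter keeps the loop testable: in a dry run you can hand it a function that records the delays instead of actually waiting.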

Monitor the 'Top 100' Section

Scrape the 'Top 100 Companies' page separately to identify high-value hiring targets that consistently list on the platform.

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.
