How to Scrape Substack Newsletters and Posts

Learn how to scrape Substack newsletters and posts for market research. Extract author data, subscriber counts, and engagement metrics from the leading...

Anti-Bot Protection Detected

- Cloudflare: Enterprise-grade WAF and bot management. Uses JavaScript challenges, CAPTCHAs, and behavioral analysis. Requires browser automation with stealth settings.

- Rate Limiting: Limits requests per IP/session over time. Can be bypassed with rotating proxies, request delays, and distributed scraping.

- IP Blocking: Blocks known datacenter IPs and flagged addresses. Requires residential or mobile proxies to circumvent effectively.

- Login Walls

- CAPTCHA: Challenge-response test to verify human users. Can be image-based, text-based, or invisible. Often requires third-party solving services.

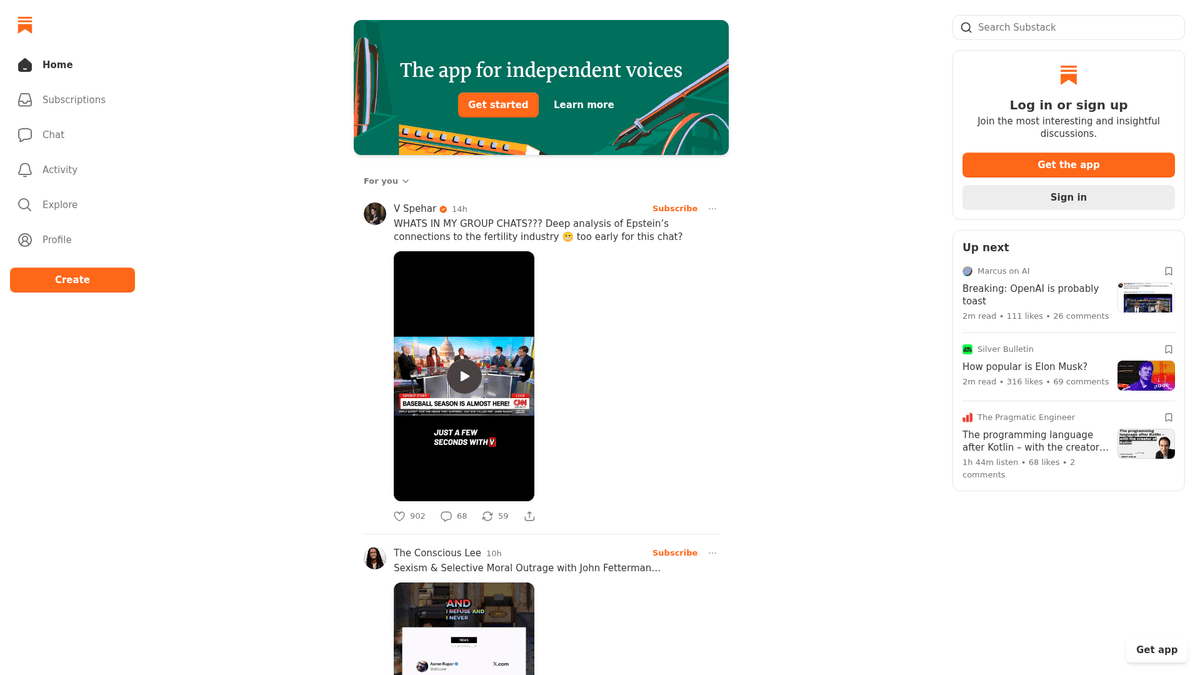

About Substack

Learn what Substack offers and what valuable data can be extracted from it.

Independent Publishing Hub

Substack is a prominent American platform that provides the infrastructure for writers to publish, monetize, and manage subscription newsletters. It has become a central hub for independent journalism, expert analysis, and niche content, allowing creators to bypass traditional media gatekeepers and build direct relationships with their audience through email and the web.

Valuable Data Insights

Each publication typically features an archive of posts, author biographies, and community engagement metrics like likes and comments. This wealth of expert-driven content is highly valuable for organizations seeking specialized insights that are often not available in mainstream news cycles. It is a goldmine for qualitative and quantitative analysis.

Market Relevance

Scraping Substack data is particularly useful for tracking market trends, performing sentiment analysis on high-intent communities, and identifying key influencers within specific industries. The platform hosts thousands of publications ranging from politics and finance to technology and creative writing.

Why Scrape Substack?

Discover the business value and use cases for extracting data from Substack.

Niche Content Aggregation

Consolidate long-form journalism and expert opinions from multiple publications into a single, searchable knowledge base for your organization.

Market Sentiment Analysis

Analyze comments and engagement metrics across specialized communities to gauge public reaction to specific news events or industry trends.

Influencer and Expert Discovery

Identify rising writers and industry thought leaders by tracking subscriber growth and engagement levels across the platform's directory.

Competitive Content Strategy

Monitor the publishing frequency, article length, and engagement patterns of rival newsletters to optimize your own editorial calendar.

Investment Intelligence

Extract financial data and market forecasts from high-tier economic newsletters to inform investment strategies and risk management.

Lead Generation

Find and reach out to authors or highly active community members who are influential within specific technical or business niches.

Scraping Challenges

Technical challenges you may encounter when scraping Substack.

Cloudflare Bot Detection

Substack employs Cloudflare's security layer, which can trigger CAPTCHAs or block automated requests that don't mimic human browser behavior.

Dynamic React Rendering

Substack's front end is built with React, and parts of a page (for example, archive entries loaded on scroll) are rendered dynamically, so a headless browser is often needed to capture the full HTML.

Infinite Scrolling Archives

Publication archives load more posts as you scroll, requiring sophisticated automation logic to capture historical data without missing entries.
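One way to avoid missing entries is to keep scrolling until the number of loaded posts stops growing. The sketch below is library-agnostic and illustrative only: `scroll_until_stable`, `count_items`, `scroll_once`, `max_rounds`, and `patience` are hypothetical names, not part of any scraping framework.

```python
def scroll_until_stable(count_items, scroll_once, max_rounds=50, patience=2):
    """Scroll until the item count stops growing for `patience` rounds in a row.

    count_items: callable returning how many posts are currently loaded.
    scroll_once: callable that scrolls the page and waits for new content.
    """
    seen = count_items()
    stalled = 0
    for _ in range(max_rounds):
        scroll_once()
        current = count_items()
        if current > seen:
            # New posts appeared, so reset the stall counter.
            seen, stalled = current, 0
        else:
            stalled += 1
            if stalled >= patience:
                break  # nothing new after several scrolls: assume the end
    return seen
```

With Playwright's sync API, `count_items` could be `lambda: page.locator('.post-preview').count()` and `scroll_once` could call `page.mouse.wheel(0, 2000)` followed by `page.wait_for_timeout(1500)`. The `.post-preview` selector is taken from the code examples in this guide and should be checked against the live page.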

Strict Rate Limiting

Rapidly requesting multiple publication pages from a single IP address can lead to temporary blocks and 429 'Too Many Requests' errors.
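A common response to 429 errors is to retry with exponential backoff and to honor a `Retry-After` header when the server sends one. The following is a minimal sketch under stated assumptions: `backoff_delay`, `fetch_with_backoff`, and the `fetch` callable (returning a status code, a headers dict, and a body) are hypothetical, and whether Substack includes `Retry-After` in its responses is not confirmed here.

```python
import random
import time


def backoff_delay(attempt, retry_after=None, base=2.0, cap=60.0):
    """Seconds to wait before retry number `attempt` (0-based)."""
    if retry_after is not None:
        try:
            # Honor the server's hint, but never wait longer than the cap.
            return min(float(retry_after), cap)
        except ValueError:
            pass  # Retry-After may be an HTTP date; fall back to backoff
    # Exponential growth plus up to 1s of jitter to avoid lockstep retries.
    return min(base * (2 ** attempt) + random.uniform(0, 1), cap)


def fetch_with_backoff(fetch, url, max_retries=4, sleep=time.sleep):
    """Call fetch(url) -> (status, headers, body), retrying on HTTP 429."""
    for attempt in range(max_retries + 1):
        status, headers, body = fetch(url)
        if status != 429:
            return status, body
        if attempt < max_retries:
            sleep(backoff_delay(attempt, headers.get("Retry-After")))
    raise RuntimeError(f"still rate limited after {max_retries} retries: {url}")
```

Passing `fetch` and `sleep` as parameters keeps the retry logic independent of any particular HTTP client and makes it easy to exercise without network access.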

Internal API Security

While data is often served via internal JSON endpoints, these frequently require specific headers and tokens that change periodically.
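If a publication exposes a JSON listing endpoint, it is usually paginated by offset. The sketch below only shows the pagination loop. The path `/api/v1/archive` and the `sort`, `offset`, and `limit` parameters are assumptions about an undocumented interface that may change or be absent, and `fetch_json` is a hypothetical callable that you supply (it is where any required headers or tokens would go).

```python
from urllib.parse import urlencode


def archive_api_url(base_url, offset=0, limit=12, path="/api/v1/archive"):
    """Build a paginated listing URL (path and parameters are assumptions)."""
    query = urlencode({"sort": "new", "offset": offset, "limit": limit})
    return f"{base_url.rstrip('/')}{path}?{query}"


def iter_archive(fetch_json, base_url, limit=12, max_pages=100):
    """Yield items page by page until a short or empty page is returned."""
    for page in range(max_pages):
        batch = fetch_json(archive_api_url(base_url, page * limit, limit))
        if not batch:
            return
        yield from batch
        if len(batch) < limit:
            return  # a short page means there is nothing further to fetch
```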

Scrape Substack with AI

No coding required. Extract data in minutes with AI-powered automation.

How It Works

Describe What You Need

Tell the AI what data you want to extract from Substack. Just type it in plain language — no coding or selectors needed.

AI Extracts the Data

Our artificial intelligence navigates Substack, handles dynamic content, and extracts exactly what you asked for.

Get Your Data

Receive clean, structured data ready to export as CSV, JSON, or send directly to your apps and workflows.

Why Use AI for Scraping

Our AI-powered platform lets you scrape Substack without writing any code: describe the data you want in plain language and the AI extracts it automatically.

- Anti-Bot Bypass: Automatio includes built-in mechanisms to handle Cloudflare challenges and sophisticated browser fingerprinting automatically.

- Visual No-Code Selection: Extract structured data from complex dynamic layouts by simply clicking on titles, dates, or authors using the point-and-click interface.

- Automated Infinite Scroll: Easily configure the scraper to scroll through long archives and load all historical posts without writing complex JavaScript code.

- Cloud-Based Scheduling: Schedule your Substack scrapers to run on a daily or weekly basis in the cloud, ensuring your database stays updated with the latest posts.

- Direct Integration: Automatically send your scraped newsletter data to Google Sheets, Webhooks, or other APIs for immediate analysis.

No-Code Web Scrapers for Substack

Point-and-click alternatives to AI-powered scraping

Several no-code tools like Browse.ai, Octoparse, Axiom, and ParseHub can help you scrape Substack. These tools use visual interfaces to select elements, but they come with trade-offs compared to AI-powered solutions.

Typical Workflow with No-Code Tools

- Install browser extension or sign up for the platform

- Navigate to the target website and open the tool

- Point-and-click to select data elements you want to extract

- Configure CSS selectors for each data field

- Set up pagination rules to scrape multiple pages

- Handle CAPTCHAs (often requires manual solving)

- Configure scheduling for automated runs

- Export data to CSV, JSON, or connect via API

Common Challenges

- Learning curve: Understanding selectors and extraction logic takes time

- Selectors break: Website changes can break your entire workflow

- Dynamic content issues: JavaScript-heavy sites often require complex workarounds

- CAPTCHA limitations: Most tools require manual intervention for CAPTCHAs

- IP blocking: Aggressive scraping can get your IP banned

Code Examples

Python + Requests

```python
import requests
from bs4 import BeautifulSoup

url = 'https://example.substack.com/archive'
headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/119.0.0.0'}

try:
    # A timeout keeps the script from hanging on a stalled connection.
    response = requests.get(url, headers=headers, timeout=30)
    response.raise_for_status()
    soup = BeautifulSoup(response.text, 'html.parser')

    # Each archive entry is expected to be a div.post-preview.
    posts = soup.find_all('div', class_='post-preview')
    for post in posts:
        link = post.find('a', class_='post-preview-title')
        if link is None:
            continue  # skip previews without a title link
        print(f'Post Found: {link.text.strip()}')
except requests.RequestException as e:
    print(f'Error: {e}')
```

When to Use

Best for static HTML pages where content is loaded server-side. The fastest and simplest approach when JavaScript rendering isn't required.

Advantages

- Fastest execution (no browser overhead)

- Lowest resource consumption

- Easy to parallelize with asyncio

- Great for APIs and static pages

Limitations

- Cannot execute JavaScript

- Fails on SPAs and dynamic content

- May struggle with complex anti-bot systems

How to Scrape Substack with Code

Python + Playwright

```python
import asyncio
from playwright.async_api import async_playwright


async def scrape_substack():
    async with async_playwright() as p:
        browser = await p.chromium.launch(headless=True)
        page = await browser.new_page()
        await page.goto('https://example.substack.com/archive')
        await page.wait_for_selector('.post-preview')

        # Scroll a few times so the archive lazy-loads more posts.
        for _ in range(3):
            await page.mouse.wheel(0, 1000)
            await asyncio.sleep(2)

        posts = await page.query_selector_all('.post-preview')
        for post in posts:
            # ElementHandle.inner_text() takes no selector, so find the title element first.
            title_el = await post.query_selector('.post-preview-title')
            if title_el is not None:
                print({'title': await title_el.inner_text()})

        await browser.close()


asyncio.run(scrape_substack())
```

Python + Scrapy

```python
import scrapy


class SubstackSpider(scrapy.Spider):
    name = 'substack'
    start_urls = ['https://example.substack.com/archive']

    def parse(self, response):
        for post in response.css('div.post-preview'):
            yield {
                'title': post.css('a.post-preview-title::text').get(),
                'url': post.css('a.post-preview-title::attr(href)').get(),
                'date': post.css('time::attr(datetime)').get(),
            }
```

Node.js + Puppeteer

```javascript
const puppeteer = require('puppeteer');

(async () => {
  const browser = await puppeteer.launch();
  const page = await browser.newPage();
  await page.goto('https://example.substack.com/archive');
  await page.waitForSelector('.post-preview');

  const posts = await page.evaluate(() => {
    return Array.from(document.querySelectorAll('.post-preview')).map(item => ({
      title: item.querySelector('.post-preview-title')?.innerText,
      link: item.querySelector('.post-preview-title')?.href
    }));
  });

  console.log(posts);
  await browser.close();
})();
```

What You Can Do With Substack Data

Explore practical applications and insights from Substack data.

Niche Trend Analysis

Marketers can track a collection of top Substacks in specific industries like AI or Crypto to identify emerging topics and public sentiment.

How to implement:

1. Select 15-20 top-tier Substack publications in a target industry.

2. Scrape all post titles, content, and category tags weekly.

3. Run keyword frequency analysis to identify rising topics.

4. Generate a market momentum report for internal stakeholders.
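The keyword frequency step can be sketched with the standard library alone. This is a simplified illustration: the stopword list is deliberately tiny, and `tokenize`, `rising_keywords`, and `min_gain` are hypothetical names chosen for the example.

```python
import re
from collections import Counter

# A deliberately small stopword list; a real analysis would use a fuller one.
STOPWORDS = {"a", "an", "the", "and", "or", "of", "to", "in", "for", "on",
             "is", "are", "with", "why", "how", "what"}


def tokenize(title):
    """Lowercase a title and split it into words, dropping stopwords."""
    return [w for w in re.findall(r"[a-z0-9]+", title.lower()) if w not in STOPWORDS]


def rising_keywords(last_week, this_week, min_gain=2):
    """Return (word, gain) pairs whose count grew by at least `min_gain`."""
    before = Counter(w for t in last_week for w in tokenize(t))
    after = Counter(w for t in this_week for w in tokenize(t))
    gains = {w: after[w] - before[w] for w in after if after[w] - before[w] >= min_gain}
    # Largest gain first; ties are broken alphabetically for stable output.
    return sorted(gains.items(), key=lambda kv: (-kv[1], kv[0]))
```

Comparing two weekly snapshots rather than counting a single week highlights topics that are gaining ground instead of ones that are simply always popular.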

Influencer Outreach & Recruitment

Brand partnership teams can identify rising writers in the newsletter space to offer sponsorship or collaborative deals.

How to implement:

1. Search Substack's directory for specific niche keywords.

2. Scrape author names, bios, and approximate subscriber counts.

3. Extract social media links from author profile pages.

4. Filter candidates by engagement metrics and initiate contact.

Competitive Content Strategy

Digital publishers can analyze which content formats perform best for their direct competitors.

How to implement:

1. Scrape the full archive of a direct competitor's Substack publication.

2. Correlate 'Likes' and 'Comments' counts with post length.

3. Identify 'outlier' posts that received significantly higher engagement.

4. Adjust internal content calendars based on the formats shown to perform well.
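Steps 2 and 3 can be sketched with a Pearson correlation and a simple z-score test. The field names (`title`, `likes`, `comments`) and the threshold of 2.0 are assumptions made for this example, not values taken from Substack.

```python
from statistics import mean, pstdev


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    return cov / (sx * sy)


def engagement_outliers(posts, threshold=2.0):
    """Titles of posts whose likes + comments sit far above the average."""
    scores = [p["likes"] + p["comments"] for p in posts]
    mu, sigma = mean(scores), pstdev(scores)
    if sigma == 0:
        return []  # every post has identical engagement
    return [p["title"] for p, s in zip(posts, scores) if (s - mu) / sigma > threshold]
```

With only a handful of posts a z-score can never exceed the threshold (for n posts its maximum is the square root of n - 1), so this test is only meaningful on a reasonably large archive.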

Sentiment Monitoring

Researchers can analyze comment sections to understand how specialized communities react to specific news or product launches.

How to implement:

1. Scrape comments from high-engagement posts related to a specific brand.

2. Apply NLP sentiment analysis to categorize audience reactions.

3. Track sentiment shifts over time relative to major industry announcements.

4. Deliver insights to PR teams for rapid response planning.
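To make the categorize-then-track idea concrete, here is a toy sketch that scores comments against a tiny word list and averages the scores per month. The word lists and the function names are hypothetical, and a real project would substitute a proper sentiment model for `score_comment`.

```python
from collections import defaultdict
from statistics import mean

# Toy lexicons for illustration only.
POSITIVE = {"love", "great", "excellent", "helpful", "impressive"}
NEGATIVE = {"hate", "terrible", "broken", "disappointing", "overpriced"}


def score_comment(text):
    """Return 1 (positive), -1 (negative), or 0 (neutral or mixed)."""
    words = [w.strip(".,!?;:\"'()").lower() for w in text.split()]
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    if pos == neg:
        return 0
    return 1 if pos > neg else -1


def monthly_sentiment(comments):
    """Map (iso_date, text) pairs to {'YYYY-MM': mean sentiment score}."""
    buckets = defaultdict(list)
    for iso_date, text in comments:
        buckets[iso_date[:7]].append(score_comment(text))
    return {month: mean(scores) for month, scores in sorted(buckets.items())}
```

Grouping by month gives a coarse time series that can be lined up against announcement dates.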

Pro Tips

Expert advice for successfully extracting data from Substack.

Target Archive Pages

For historical data, always navigate to the publication's /archive page as it provides the most consistent structure for listing past posts.

Use Residential Proxies

To get past strict Cloudflare checks, use high-quality residential proxies so that your traffic appears to come from legitimate home users.

Leverage Embedded JSON

Look for the window._substackData variable in the HTML source code, which often contains structured JSON for the entire page's content.
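A minimal sketch of pulling such an embedded object out of raw HTML is shown below. The variable name is taken from the tip above and should be confirmed against the live page source, since it can differ or change. `extract_embedded_json` is a hypothetical helper, and it assumes the value is assigned as a plain JSON literal.

```python
import json
import re


def extract_embedded_json(html, var_name="window._substackData"):
    """Return the JSON value assigned to `var_name` in an HTML page, or None."""
    match = re.search(re.escape(var_name) + r"\s*=\s*", html)
    if match is None:
        return None
    decoder = json.JSONDecoder()
    try:
        # raw_decode parses one JSON value and ignores whatever follows it,
        # so nested braces and a trailing ";</script>" are not a problem.
        value, _ = decoder.raw_decode(html, match.end())
    except json.JSONDecodeError:
        return None
    return value
```

Using `raw_decode` instead of a brace-matching regular expression avoids breaking on nested objects or braces inside strings.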

Implement Random Delays

Avoid pattern detection by introducing randomized wait times of 5-15 seconds between page loads or scrolling actions.
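A randomized pause in the suggested 5-15 second range could look like the sketch below. `polite_pause` is a hypothetical helper name, and the `sleep` parameter exists only so the function can be exercised without actually waiting.

```python
import random
import time


def polite_pause(min_seconds=5.0, max_seconds=15.0, sleep=time.sleep):
    """Sleep for a random duration in [min_seconds, max_seconds] and return it."""
    delay = random.uniform(min_seconds, max_seconds)
    sleep(delay)
    return delay
```

Calling `polite_pause()` between page loads or scroll actions spreads requests out unevenly, which is harder to fingerprint than a fixed interval.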

Monitor for Pop-ups

Substack frequently shows subscription or app-download overlays; ensure your automation is configured to close these before scraping.

Rotate User Agents

Rotate your User-Agent string across current browser and operating system combinations so that your requests do not all share one signature.
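As an illustration, headers can be built per request from a small pool of User-Agent strings. The strings below are examples only and will go stale, and `USER_AGENTS` and `random_headers` are hypothetical names for this sketch.

```python
import random

# Example User-Agent strings for illustration; keep a real pool up to date.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.0 Safari/605.1.15",
    "Mozilla/5.0 (X11; Linux x86_64; rv:120.0) Gecko/20100101 Firefox/120.0",
]


def random_headers(rng=random):
    """Build request headers with a User-Agent picked at random from the pool."""
    return {
        "User-Agent": rng.choice(USER_AGENTS),
        "Accept-Language": "en-US,en;q=0.9",
    }
```

The returned dictionary can be passed as the `headers` argument of whichever HTTP client you use.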

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Frequently Asked Questions

Find answers to common questions about Substack