GLM-4.7

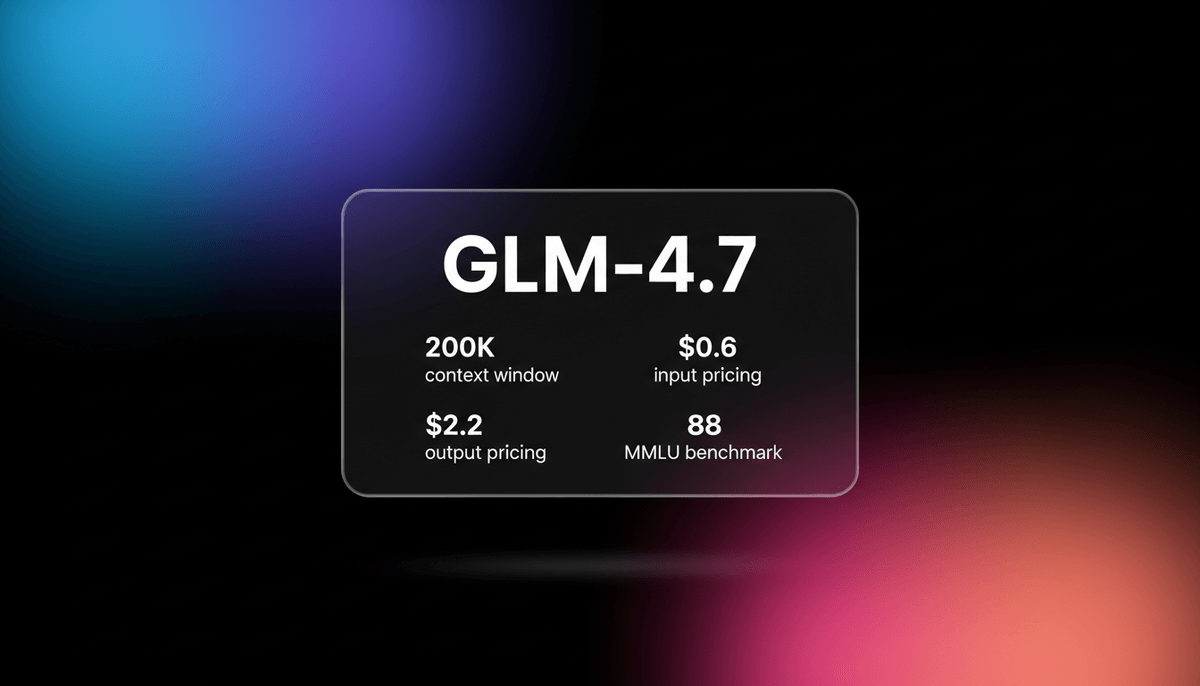

GLM-4.7 by Zhipu AI is a flagship 358B MoE model featuring a 200K context window, elite 73.8% SWE-bench performance, and native Deep Thinking for agentic...

About GLM-4.7

Learn about GLM-4.7's capabilities, features, and how it can help you achieve better results.

Model Overview

GLM-4.7 is a flagship large language model developed by Zhipu AI. It utilizes a Mixture-of-Experts (MoE) architecture with 358 billion total parameters. The model is specifically designed to handle complex agentic tasks and long-context reasoning through its unique Preserved Thinking and Interleaved Thinking capabilities. These features allow the model to maintain stable logic and intermediate reasoning states across multi-turn sessions, addressing the context decay common in autonomous workflows.
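In practice, keeping reasoning across turns means the client has to hold on to it. Here is a minimal sketch of a conversation history that does that. It rests on two assumptions: replies expose their reasoning trace in a reasoning_content field, and sending that field back with the assistant turn lets GLM-4.7 reuse it. Check Z.ai's documentation for the exact field name and for whether Preserved Thinking also needs a request flag. The helpers appendUserTurn and appendAssistantTurn are hypothetical.

```javascript
// Hypothetical helpers for a multi-turn conversation that keeps the model's reasoning.
// Assumes replies expose the trace as `reasoning_content` (field name is an assumption)
// and that sending it back with the assistant turn lets GLM-4.7 reuse it.
function appendUserTurn(history, text) {
  return [...history, { role: 'user', content: text }];
}

function appendAssistantTurn(history, reply) {
  const turn = { role: 'assistant', content: reply.content ?? '' };
  if (reply.reasoning_content) {
    // Pass the reasoning back unchanged; an edited trace is no longer the model's own state.
    turn.reasoning_content = reply.reasoning_content;
  }
  return [...history, turn];
}

// Example with a mocked first reply (a real one would come from response.choices[0].message).
let convo = appendUserTurn([], 'Plan a refactor of the auth module.');
convo = appendAssistantTurn(convo, {
  content: 'Step 1: move token validation into its own service.',
  reasoning_content: 'Session handling and token checks are tangled together...',
});
convo = appendUserTurn(convo, 'Go ahead with step 1.');
console.log(convo.map((m) => m.role).join(' -> ')); // user -> assistant -> user
```

The helpers return new arrays instead of mutating, so an earlier state of the conversation can be replayed or branched if an agent step goes wrong.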

Performance and Architecture

The model offers a 200,000-token context window and a 131,072-token (128K) output limit. That is enough to take in extensive documentation as input, or to generate a complete multi-file application in a single response. It is released as an open-weight model under the MIT license and delivers strong coding and reasoning performance at a fraction of the cost of proprietary alternatives.
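The two limits interact: a long prompt leaves less room for output. Here is a small sketch of that arithmetic. It assumes the prompt and the generated output share the 200,000-token window, so confirm how the API actually counts this. The constant and function names are illustrative.

```javascript
// Limits quoted on this page.
const CONTEXT_WINDOW = 200_000; // total tokens (assumed to include the output)
const MAX_OUTPUT = 131_072;     // 128 * 1024

// Largest output budget that still fits, assuming prompt + output <= context window.
function maxOutputTokens(promptTokens) {
  if (promptTokens >= CONTEXT_WINDOW) return 0;
  return Math.min(MAX_OUTPUT, CONTEXT_WINDOW - promptTokens);
}

console.log(maxOutputTokens(20_000));  // 131072 (the output cap is the binding limit)
console.log(maxOutputTokens(120_000)); // 80000 (the remaining context is the binding limit)
```

Under this assumption, the crossover sits at a prompt of 68,928 tokens (200,000 - 131,072). Below that, the full 131K output is available.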

Integration and Use

It is compatible with the OpenAI API format, so software that already uses an OpenAI client can switch to it with little change. Developers use it for demanding software engineering work, where it scores 73.8% on SWE-bench Verified. It also processes large volumes of technical documentation in both English and Chinese with native-level linguistic nuance, which makes it a versatile tool for international development teams.
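Because the API follows the OpenAI chat-completions format, a call is an ordinary JSON POST, and the SDK is optional. Here is a sketch of building such a request by hand. It makes three assumptions: the chat/completions path sits under the base URL from the API Quick Start, authentication uses a Bearer token, and "enabled"/"disabled" are the values of Z.ai's thinking.type field. buildChatRequest is a hypothetical helper.

```javascript
// Builds (but does not send) an OpenAI-style chat-completions request for GLM-4.7.
// Endpoint path, Bearer auth and the `thinking` values are assumptions to verify
// against Z.ai's API reference.
function buildChatRequest(apiKey, messages, { thinking = true } = {}) {
  const baseURL = 'https://api.z.ai/api/paas/v4/';
  return {
    url: new URL('chat/completions', baseURL).toString(),
    init: {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
        Authorization: `Bearer ${apiKey}`,
      },
      body: JSON.stringify({
        model: 'glm-4.7',
        messages,
        thinking: { type: thinking ? 'enabled' : 'disabled' },
      }),
    },
  };
}

// Usage with the built-in fetch (Node 18+), inside an async function:
// const { url, init } = buildChatRequest(process.env.ZAI_API_KEY, [{ role: 'user', content: 'Hi' }]);
// const data = await (await fetch(url, init)).json();
// console.log(data.choices[0].message.content);
```

Separating request construction from sending keeps the helper testable without network access.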

Use Cases

Discover the different ways you can use GLM-4.7 to achieve great results.

Autonomous Software Engineering

Using the coding ability behind its 73.8% SWE-bench Verified score to debug, refactor, and implement new features autonomously across complex repositories.

High-Capacity Document Synthesis

Leveraging the 131k output limit to generate comprehensive technical manuals or entire book chapters from large datasets.

Long-Horizon Agentic Workflows

Deploying agents that use Preserved Thinking to maintain consistency and logic over hundreds of sequential tasks without losing context.
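Both the autonomous software engineering and long-horizon agent use cases come down to a loop: call the model, run any tools it asks for, feed the results back, and repeat. Here is a minimal sketch of that loop. It assumes the endpoint accepts OpenAI-style tools and returns tool_calls, as OpenAI-compatible APIs generally do. The run_tests tool, its stubbed handler, and the injected callModel function are hypothetical, which lets the loop run offline.

```javascript
// Sketch of an agent loop for repository tasks. Assumes OpenAI-style `tools` / `tool_calls`.
// The `run_tests` tool and its stubbed handler are hypothetical.
const tools = [
  {
    type: 'function',
    function: {
      name: 'run_tests',
      description: 'Run the test suite for a path in the repository and return the output.',
      parameters: {
        type: 'object',
        properties: { path: { type: 'string', description: 'Directory or test file to run' } },
        required: ['path'],
      },
    },
  },
];

// Local tool implementations keyed by name (stubbed for illustration).
const handlers = {
  run_tests: async ({ path }) => `ran tests in ${path} (stub result)`,
};

async function runAgent(callModel, messages, maxSteps = 50) {
  const history = [...messages];
  for (let step = 1; step <= maxSteps; step++) {
    // callModel(history, tools) returns an assistant message, e.g. with the SDK:
    // (await client.chat.completions.create({ model: 'glm-4.7', messages: history, tools })).choices[0].message
    const reply = await callModel(history, tools);
    // Keep the whole assistant message (including any reasoning fields the API returns)
    // so later steps see earlier decisions.
    history.push(reply);
    if (!reply.tool_calls || reply.tool_calls.length === 0) {
      return { answer: reply.content, steps: step, history };
    }
    for (const call of reply.tool_calls) {
      const handler = handlers[call.function.name];
      const args = JSON.parse(call.function.arguments || '{}');
      const content = handler ? await handler(args) : `unknown tool: ${call.function.name}`;
      history.push({ role: 'tool', tool_call_id: call.id, content });
    }
  }
  throw new Error(`agent did not finish within ${maxSteps} steps`);
}

// Offline example: a fake model that requests one test run, then answers.
let turn = 0;
const fakeModel = async () =>
  ++turn === 1
    ? { role: 'assistant', content: null, tool_calls: [{ id: 'call_1', type: 'function',
        function: { name: 'run_tests', arguments: '{"path":"src/auth"}' } }] }
    : { role: 'assistant', content: 'All auth tests pass.' };

runAgent(fakeModel, [{ role: 'user', content: 'Fix the failing auth tests.' }])
  .then((r) => console.log(r.steps, r.answer)); // prints: 2 All auth tests pass.
```

The step cap is a deliberate safety valve. Long-running agents can loop on a failing tool, and an explicit error is easier to handle than an unbounded bill.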

Bilingual Business Intelligence

Processing and analyzing large volumes of technical documentation in English and Chinese with native-level linguistic nuance.

Automated UI/UX Code Generation

Generating complete React or Next.js front-end architectures with advanced animations and production-ready styling in a single shot.

Competition-Level Mathematical Solving

Solving complex Olympiad-level math problems and symbolic logic puzzles using the dedicated reasoning-heavy thinking mode.


API Quick Start

zai/glm-4.7

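Install the SDK (npm install openai) and start making API calls in minutes.

```javascript
import OpenAI from 'openai';

// Z.ai exposes an OpenAI-compatible endpoint, so the official OpenAI SDK works
// once baseURL points at it.
const client = new OpenAI({
  apiKey: process.env.ZAI_API_KEY, // or paste your key: 'YOUR_ZAI_API_KEY'
  baseURL: 'https://api.z.ai/api/paas/v4/',
});

async function main() {
  const response = await client.chat.completions.create({
    model: 'glm-4.7',
    messages: [{ role: 'user', content: 'Design a scalable React architecture.' }],
    // Z.ai-specific field (not part of the OpenAI spec); the SDK sends it in the request body.
    thinking: { type: 'enabled' },
  });

  console.log(response.choices[0].message.content);
}

main().catch(console.error);
```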
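With thinking enabled, you can stream the reasoning separately from the final answer. Here is a sketch of merging streamed chunks into two strings. It assumes each chunk's delta carries reasoning text in reasoning_content (a Z.ai extension whose name you should confirm) and answer text in content. collectStream is a hypothetical helper that accepts either the SDK's stream or a plain array.

```javascript
// Merges OpenAI-style streaming chunks into separate reasoning and answer strings.
// Assumes reasoning text arrives in delta.reasoning_content (a Z.ai extension, not
// part of the OpenAI spec) and the final answer in delta.content.
async function collectStream(stream) {
  let reasoning = '';
  let answer = '';
  // for await works on the SDK's async-iterable stream and on plain arrays alike.
  for await (const chunk of stream) {
    const delta = chunk.choices?.[0]?.delta ?? {};
    if (delta.reasoning_content) reasoning += delta.reasoning_content;
    if (delta.content) answer += delta.content;
  }
  return { reasoning, answer };
}

// Usage with the client from the quick start (inside an async function):
// const stream = await client.chat.completions.create({
//   model: 'glm-4.7',
//   messages: [{ role: 'user', content: 'Design a scalable React architecture.' }],
//   thinking: { type: 'enabled' },
//   stream: true,
// });
// const { reasoning, answer } = await collectStream(stream);
```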

Community Feedback

See what the community thinks about GLM-4.7

“GLM-4.7 handles large codebases reliably with its 128k context. It's been surprisingly useful for subagent tasks to save on primary API costs.”

“Zhipu AI's GLM-4.7 matches proprietary frontier models like GPT-5.1 High in coding. The Preserved Thinking feature is a huge win for autonomous agents.”

“GLM-4.7 continues to be the most intelligent open weights model in the Intelligence Index v4.0, placing ahead of DeepSeek V3.2.”

“Chinese models are closing the gap fast in coding utility. This 73% SWE-bench score is no joke for an open weight release.”

“The reasoning speed is actually quite decent for a model of this size. It handles the complex logic much better than previous iterations.”

“GLM-4.7 lands #6 on the AI Index, surpassing Kimi K2. Discover why this $2 model is replacing GPT-5.2 in coding workflows.”

Related Videos

Watch tutorials, reviews, and discussions about GLM-4.7

“The context length here is 200k and the maximum output tokens is 128k which is quite beefy actually.”

“All right, that is really quite impressive. None of them put in a special feature with that level of complexity.”

“The reasoning speed is actually quite decent for a model of this size.”

“It handles the complex logic much better than previous iterations.”

“This model is a significant step up in terms of logical consistency.”

“The GLM model actually implemented a better architecture by placing all the mock data in one file.”

“This one is definitely a huge leap. Those benchmarks are justified by the testing I've done.”

“It understood the context of the entire project without me needing to remind it.”

“The coding capability is arguably on par with the best models out there.”

“You are getting high-end reasoning at a fraction of the cost.”

“It scored 73.8 percent on SWE-bench Verified, which is absolutely incredible for an open-source model.”

“You can actually see that it functions and actually works. Whereas the Gemini 3 Pro generation doesn't work at all.”

“The speed of generation for this level of intelligence is remarkable.”

“It is clearly designed for developers who need reliable code output.”

“Zhipu AI has really outdone themselves with the MoE architecture tuning here.”

Supercharge your workflow with AI Automation

Automatio combines the power of AI agents, web automation, and smart integrations to help you accomplish more in less time.

Pro Tips

Expert tips to help you get the most out of GLM-4.7 and achieve better results.

Enable Thinking Mode for Logic

Set the thinking parameter to { type: 'enabled' } for coding or math tasks so the model works through internal reasoning traces before answering, which tends to improve accuracy.
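One way to apply this tip is to choose the thinking mode per request based on the kind of task. Here is a sketch of that policy. The task categories are illustrative. "enabled" comes from the quick-start example, while "disabled" is assumed to be the matching off value. chatParams is a hypothetical helper.

```javascript
// Hypothetical policy: turn thinking on for task types that benefit from step-by-step
// reasoning and off for quick lookups, where it mainly adds latency and tokens.
const REASONING_TASKS = new Set(['coding', 'math', 'debugging', 'planning']);

function chatParams(taskType, prompt) {
  return {
    model: 'glm-4.7',
    messages: [{ role: 'user', content: prompt }],
    // 'enabled' matches the quick-start example; 'disabled' is assumed to be the off value.
    thinking: { type: REASONING_TASKS.has(taskType) ? 'enabled' : 'disabled' },
  };
}

// e.g. await client.chat.completions.create(chatParams('math', 'Prove that sqrt(2) is irrational.'));
console.log(chatParams('math', 'Prove that sqrt(2) is irrational.').thinking.type); // enabled
console.log(chatParams('chat', 'What time zone is Beijing in?').thinking.type);   // disabled
```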

Use OpenAI-Compatible SDKs

Integrate GLM-4.7 into existing workflows by using the OpenAI SDK and changing the base URL to the Z.ai endpoint.

Maximize the 131K Output

When generating long-form content, provide a detailed outline first so the model keeps a coherent structure across outputs that can run up to 131K tokens.
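Here is a sketch of the outline-first approach: the outline is folded into a single prompt, so the model has a fixed skeleton to fill in. buildOutlinePrompt and its wording are hypothetical. The resulting string would be sent as the user message, optionally with a max_tokens value sized for the expected length.

```javascript
// Hypothetical helper: fold a section outline into one prompt so a very long
// generation has a fixed structure to follow.
function buildOutlinePrompt(title, sections) {
  const outline = sections.map((s, i) => `${i + 1}. ${s.heading}: ${s.notes}`).join('\n');
  return [
    `Write "${title}" following this outline exactly, one section per item, in order.`,
    'Use each heading verbatim and do not add or skip sections.',
    '',
    outline,
  ].join('\n');
}

const prompt = buildOutlinePrompt('Deployment Manual', [
  { heading: 'Overview', notes: 'purpose and audience' },
  { heading: 'Installation', notes: 'prerequisites and steps' },
  { heading: 'Troubleshooting', notes: 'common errors and fixes' },
]);
console.log(prompt);
```

Numbering the sections gives the model, and you, an easy way to check that none were skipped in a long response.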

Optimize System Prompts for Agents

Define the Preserved Thinking requirements in the system message to help the model reuse reasoning states across multi-turn sessions.

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Related AI Models

Qwen3-Coder-Next

alibaba

Qwen3-Coder-Next is Alibaba Cloud's elite Apache 2.0 coding model, featuring an 80B MoE architecture and 256k context window for advanced local development.

GPT-4o mini

OpenAI

OpenAI's most cost-efficient small model, GPT-4o mini offers multimodal intelligence and high-speed performance at a significantly lower price point.

MiniMax M2.5

minimax

MiniMax M2.5 is a SOTA MoE model featuring a 1M context window and elite agentic coding capabilities at disruptive pricing for autonomous agents.

MiMo V2.5 Pro

Other

MiMo V2.5 Pro is Xiaomi's open-source 1.02T parameter MoE model featuring a 1M context window, native multimodality, and elite agentic coding performance.

Gemini 3.5 Flash

Gemini 3.5 Flash is Google's high-speed multimodal model with a 1M context window, optimized for sub-second agentic loops and complex coding tasks.

Qwen 3.7 Max

alibaba

Qwen 3.7 Max is Alibaba’s flagship AI model for deep reasoning and autonomous agent tasks, featuring a 256k context window and top-tier coding performance.

Qwen3.5-Omni

alibaba

Qwen3.5-Omni is a natively omnimodal AI by Alibaba Cloud, offering seamless audio-visual reasoning, real-time voice chat, and 256k context for low-latency apps.

DeepSeek v4

DeepSeek

DeepSeek v4 is a 1.6T parameter MoE model featuring a 1M token context window and native multimodal support for text, vision, and video at disruptive prices.
