Claude 3.7 Sonnet

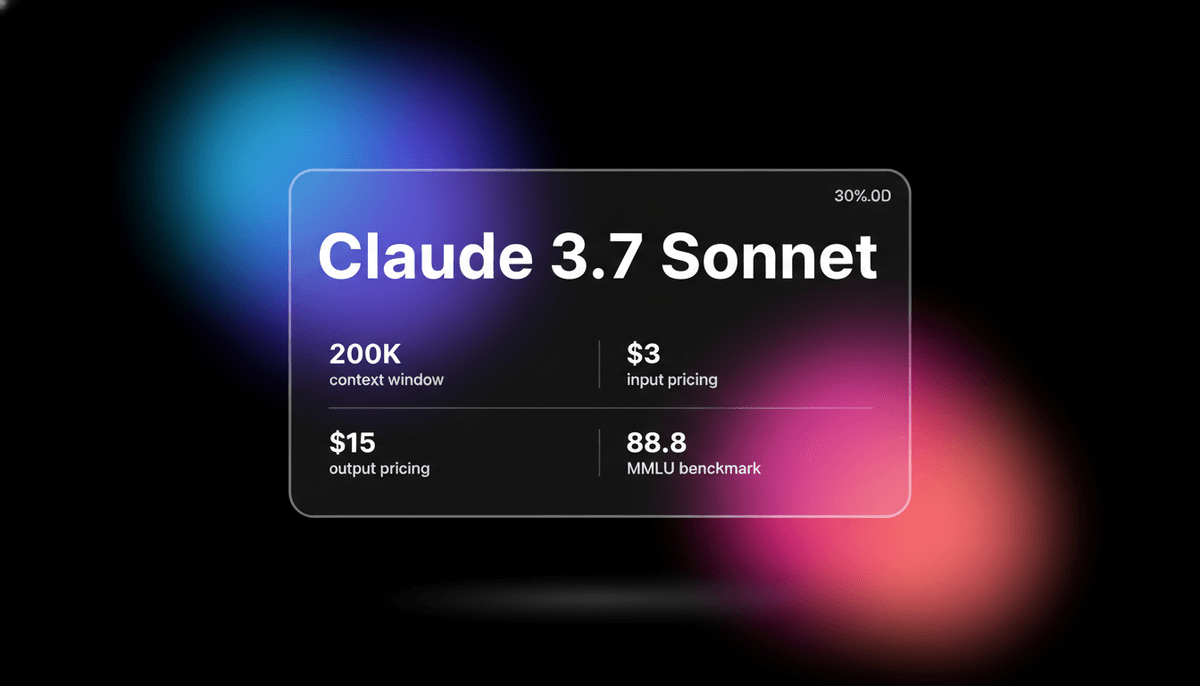

Claude 3.7 Sonnet is Anthropic's first hybrid reasoning model, delivering state-of-the-art coding capabilities, a 200k context window, and visible thinking.

About Claude 3.7 Sonnet

Learn about Claude 3.7 Sonnet's capabilities, features, and how it can help you achieve better results.

Hybrid Reasoning Design

Claude 3.7 Sonnet uses a hybrid design that lets users choose between speed and depth within a single model. It is Anthropic's first model to offer a toggle for extended thinking, which lets it work through complex logic before providing an answer. Because the thinking is visible, developers can inspect how the model reached a conclusion, which makes errors in technical work easier to catch.
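A minimal sketch of what the toggle might look like from the caller's side. It only builds the request parameters and does not call the API. The helper name buildParams and the two prompts are hypothetical; the model ID, max_tokens, and thinking fields mirror the API Quick Start on this page.

```typescript
// Hypothetical helper: builds request parameters with or without extended thinking.
type Message = { role: "user" | "assistant"; content: string };

interface RequestParams {
  model: string;
  max_tokens: number;
  messages: Message[];
  thinking?: { type: "enabled"; budget_tokens: number };
}

function buildParams(prompt: string, deepThinking: boolean): RequestParams {
  const params: RequestParams = {
    model: "claude-3-7-sonnet-20250219",
    max_tokens: 4096,
    messages: [{ role: "user", content: prompt }],
  };
  // Only attach the thinking block when depth is wanted; omit it for fast replies.
  if (deepThinking) {
    params.thinking = { type: "enabled", budget_tokens: 2048 };
  }
  return params;
}

// Illustrative prompts (not from the original page).
const fast = buildParams("Rename this variable.", false);
const deep = buildParams("Find the race condition in this scheduler.", true);
```

The resulting object could then be passed to the SDK's messages.create call, so one code path serves both quick answers and deeper reasoning.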

Technical Problem Solving

This model is built for high-level software engineering. It scores 62.3% on SWE-bench Verified, a benchmark built from real GitHub issues. When used with tools like Claude Code, it can edit files and run commands across large repositories. On math and coding tasks, it matches or exceeds the precision of other top-tier reasoning models of its generation.

Massive Context Capacity

With a 200,000-token context window, the model can process large documentation sets or codebases in a single request. It supports up to 128,000 tokens of output when extended thinking is active, which makes it useful for generating long scripts or detailed reports. The model is also multimodal: it can interpret charts and diagrams alongside text.
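Before sending a whole codebase in one request, it can help to estimate whether it will fit. The sketch below is a rough pre-flight check, not a tokenizer: the 4-characters-per-token ratio is an assumed heuristic, the function names are hypothetical, and it assumes that the space reserved for output counts against the same 200,000-token window.

```typescript
const CONTEXT_WINDOW_TOKENS = 200_000; // window size stated above
const ASSUMED_CHARS_PER_TOKEN = 4; // rough heuristic (assumption), not a real tokenizer

// Hypothetical helper: approximate token count from character length.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / ASSUMED_CHARS_PER_TOKEN);
}

// Hypothetical helper: true if the files plus reserved output fit in the window.
function fitsInContext(files: string[], reservedForOutput: number): boolean {
  const inputTokens = files.reduce((sum, file) => sum + estimateTokens(file), 0);
  return inputTokens + reservedForOutput <= CONTEXT_WINDOW_TOKENS;
}
```

For real budgeting, an exact token count from the provider is preferable; the heuristic only flags inputs that are clearly too large.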

Use Cases

Discover the different ways you can use Claude 3.7 Sonnet to achieve great results.

Agentic Software Engineering

Using Claude Code in the terminal to fix bugs and refactor code across large file structures.

Math Proof Verification

Solving difficult math problems by letting the model think through logical steps.

Repository Analysis

Extracting data and identifying patterns from entire technical codebases in one prompt.

Visual Data Parsing

Converting complex charts, flowcharts, and technical diagrams into structured JSON data.

System Architecture Planning

Designing software systems with detailed logic checks using the extended thinking mode.

Automated Git Workflows

Managing commit messages, code reviews, and test execution through agentic tool use.

API Quick Start

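Install the SDK and start making API calls in minutes.

anthropic/claude-3-7-sonnet

    import Anthropic from '@anthropic-ai/sdk';

    // By default the client reads the API key from the ANTHROPIC_API_KEY environment variable.
    const anthropic = new Anthropic();

    const message = await anthropic.messages.create({
      model: "claude-3-7-sonnet-20250219",
      max_tokens: 4096,
      // Extended thinking: budget_tokens must be smaller than max_tokens.
      thinking: {
        type: "enabled",
        budget_tokens: 2048
      },
      messages: [{ role: "user", content: "Analyze this architectural flaw..." }],
    });

    // content is an array of blocks (thinking blocks followed by text blocks).
    console.log(message.content);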

Community Feedback

See what the community thinks about Claude 3.7 Sonnet

“Claude Code plus 3.7 Sonnet is basically a junior developer on steroids in my terminal. It's the first time agentic AI felt real.”

“The hybrid reasoning is a major update. I don't always need it to think for 30 seconds, but when I'm debugging, it's incredible.”

“Anthropic managed to make a model that competes with o1 on math while staying useful for everyday chat.”

“Claude delivers comprehensive, beautifully formatted reports with citations in under five minutes.”

“The 128k output limit is a sleeper feature. Finally a model that doesn't cut off halfway through a long script.”

“Claude 3.7 + MCP is the closest thing to Jarvis right now. It actually uses my local tools correctly.”

Related Videos

Watch tutorials, reviews, and discussions about Claude 3.7 Sonnet

“Claude 3.7 is straight gas. The new base model beat itself to become even better at programming.”

“The new 3.7 model absolutely crushed all other models including OpenAI o3 mini.”

“It is capable of solving 70% of GitHub issues.”

“Extended thinking allows the model to ponder a problem before outputting code.”

“This is a massive win for the developer experience.”

“Chat bots give you advice, but Claude Code takes actions. It can create files, build websites, and install packages.”

“Extended thinking is Claude reasoning before it actually takes any actions.”

“The tool is optimized for the terminal environment.”

“MCP connectivity is what really separates this from standard ChatGPT.”

“The model understands intent behind vague terminal commands.”

“The integration with the terminal via Claude Code is a level of agency we haven't seen yet.”

“Claude 3.7 Sonnet's ability to show its thought process is far more transparent than competitors.”

“On SWE-bench Verified, it hits a remarkable 62%.”

“Hybrid reasoning means you don't pay the latency penalty when you don't need it.”

“It maintains the high-quality writing style of previous Claude models.”

Supercharge your workflow with AI Automation

Automatio combines the power of AI agents, web automation, and smart integrations to help you accomplish more in less time.

Pro Tips

Expert tips to help you get the most out of Claude 3.7 Sonnet and achieve better results.

Set Reasoning Budgets

Set budget_tokens in the API's thinking parameter to cap the number of reasoning tokens and keep costs under control.
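One way to keep a requested budget inside valid bounds is to clamp it before building the request. This is a sketch under two assumptions taken from my understanding of the API rather than from this page: the minimum thinking budget is 1,024 tokens, and the budget must stay below max_tokens. The function name is hypothetical.

```typescript
const MIN_THINKING_BUDGET = 1024; // assumed API minimum, verify against current docs

// Hypothetical helper: clamps a requested budget to [MIN_THINKING_BUDGET, maxTokens - 1].
function clampThinkingBudget(requested: number, maxTokens: number): number {
  if (maxTokens <= MIN_THINKING_BUDGET) {
    throw new Error("max_tokens is too small to leave room for a thinking budget");
  }
  const upper = maxTokens - 1; // budget must be strictly less than max_tokens
  return Math.min(Math.max(Math.floor(requested), MIN_THINKING_BUDGET), upper);
}
```

With max_tokens set to 4096 as in the Quick Start, a request for 500 tokens would be raised to 1,024 and a request for 10,000 would be lowered to 4,095.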

Review Thought Blocks

Check the internal chain-of-thought in responses to verify the logic of complex answers.
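A small sketch of separating the reasoning from the final answer so each can be reviewed on its own. It assumes the response content is an array of blocks tagged with type "thinking" or "text", as in the Messages API; the helper name and the sample data are made up for illustration.

```typescript
// Simplified block shape (assumption): real responses may carry extra fields.
interface Block {
  type: string;
  thinking?: string;
  text?: string;
}

// Hypothetical helper: collects thinking text and answer text separately.
function splitResponse(content: Block[]): { reasoning: string; answer: string } {
  const reasoning = content
    .filter((b) => b.type === "thinking")
    .map((b) => b.thinking ?? "")
    .join("\n");
  const answer = content
    .filter((b) => b.type === "text")
    .map((b) => b.text ?? "")
    .join("\n");
  return { reasoning, answer };
}

// Made-up sample content, shaped like a response with thinking enabled.
const sampleContent: Block[] = [
  { type: "thinking", thinking: "The cache is shared across requests." },
  { type: "text", text: "The flaw is a shared mutable cache." },
];
const reviewed = splitResponse(sampleContent);
```

Logging reviewed.reasoning next to reviewed.answer makes it easier to spot cases where the conclusion does not follow from the steps.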

Use MCP Connectors

Connect the model to local databases and cloud storage for real-time project context.

Context Refreshing

Use summary commands in long agentic loops to keep the context window focused on relevant data.
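When driving the model through the API rather than a tool with a built-in summary command, a similar effect can be approximated by hand. The sketch below replaces older turns with a single summary message and keeps only the most recent ones. The function name, the summary prefix, and the idea of supplying the summary as a user turn are all assumptions for illustration; producing the summary text itself is left to the caller.

```typescript
type Turn = { role: "user" | "assistant"; content: string };

// Hypothetical helper: keeps the last `keepRecent` turns and prepends a summary of the rest.
function refreshContext(history: Turn[], keepRecent: number, summary: string): Turn[] {
  if (history.length <= keepRecent) {
    return history.slice(); // nothing to compact, return a copy
  }
  const recent = history.slice(history.length - keepRecent);
  const summaryTurn: Turn = {
    role: "user",
    content: `Summary of earlier work: ${summary}`,
  };
  return [summaryTurn, ...recent];
}
```

Choosing keepRecent is a trade-off: a larger value preserves more detail, while a smaller one frees more of the context window for new tool output.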

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Related AI Models

Gemini 3.5 Flash

Gemini 3.5 Flash is Google's high-speed multimodal model with a 1M context window, optimized for sub-second agentic loops and complex coding tasks.

Claude 4.5 Sonnet

Anthropic

Anthropic's Claude Sonnet 4.5 delivers world-leading coding (77.2% SWE-bench) and a 200K context window, optimized for the next generation of autonomous agents.

GPT-5.3 Codex

OpenAI

GPT-5.3 Codex is OpenAI's 2026 frontier coding agent, featuring a 400K context window, 77.3% Terminal-Bench score, and superior logic for complex software...

DeepSeek-V3.2-Speciale

DeepSeek

DeepSeek-V3.2-Speciale is a reasoning-first LLM featuring gold-medal math performance, DeepSeek Sparse Attention, and a 131K context window. Rivaling GPT-5...

Qwen3.5-Omni

Alibaba

Qwen3.5-Omni is a natively omnimodal AI by Alibaba Cloud, offering seamless audio-visual reasoning, real-time voice chat, and 256k context for low-latency apps.

GPT-5.4

OpenAI

GPT-5.4 is OpenAI's frontier model featuring a 1.05M context window and Extreme Reasoning. It excels at autonomous UI interaction and long-form data analysis.

Kimi K2 Thinking

Moonshot

Kimi K2 Thinking is Moonshot AI's trillion-parameter reasoning model. It outperforms GPT-5 on HLE and supports 300 sequential tool calls autonomously for...

MiMo V2.5 Pro

Other

MiMo V2.5 Pro is Xiaomi's open-source 1.02T parameter MoE model featuring a 1M context window, native multimodality, and elite agentic coding performance.
