Claude 4.5 Sonnet

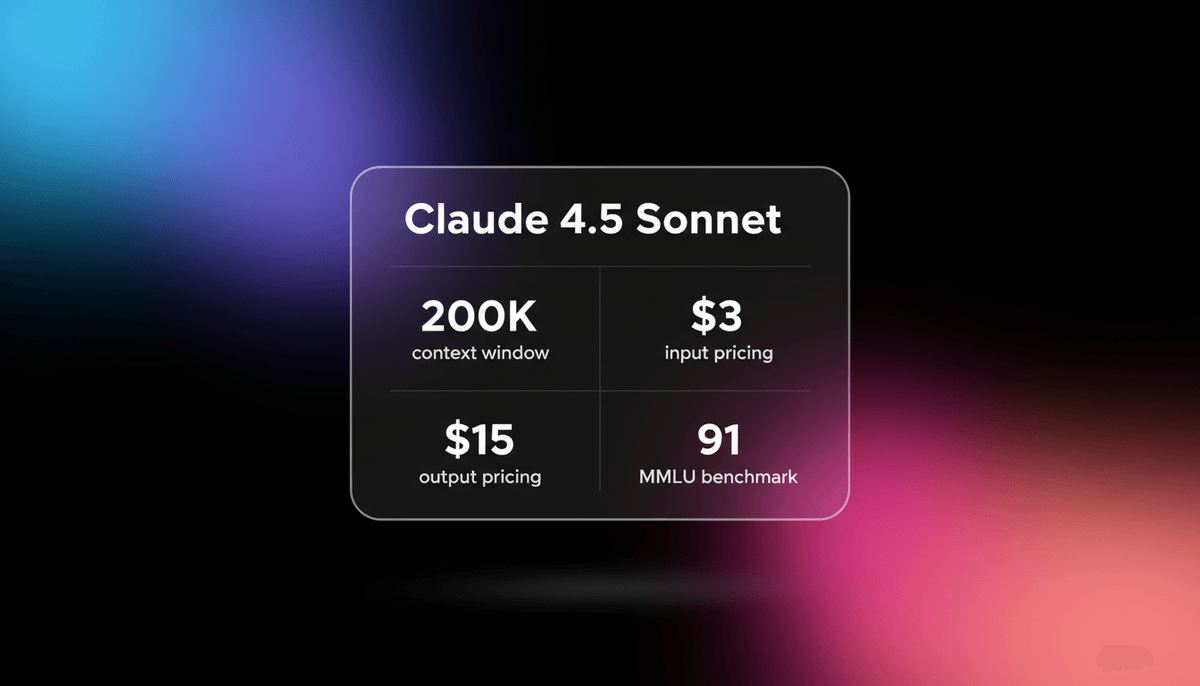

Anthropic's Claude Sonnet 4.5 delivers world-leading coding performance (77.2% on SWE-bench Verified) and a 200K-token context window, optimized for the next generation of autonomous agents.

About Claude 4.5 Sonnet

Learn about Claude 4.5 Sonnet's capabilities, features, and how it can help you achieve better results.

**The Frontier of Agentic Intelligence**

Claude 4.5 Sonnet is a major advance in frontier intelligence, optimized for the era of autonomous AI agents. Released on September 29, 2025, it is a hybrid reasoning model: developers can toggle between fast responses for routine tasks and extended thinking for complex logical problems. At launch it led benchmarks in computer use and tool orchestration, making it a preferred engine for terminal-based agents and multi-file software engineering.
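The toggle described above maps to the `thinking` parameter of the Messages API. The following is a minimal sketch that only builds the request body and does not call the API. The helper name `buildRequest`, the prompts, and the 8,000-token thinking budget are illustrative assumptions, not values from this page.

```typescript
// Subset of the Messages API request body used in this sketch.
interface ThinkingConfig {
  type: "enabled";
  budget_tokens: number;
}

interface MessageRequest {
  model: string;
  max_tokens: number;
  messages: { role: "user" | "assistant"; content: string }[];
  thinking?: ThinkingConfig;
}

// Hypothetical helper: builds a request with or without extended thinking.
function buildRequest(prompt: string, extendedThinking: boolean): MessageRequest {
  const request: MessageRequest = {
    model: "claude-sonnet-4-5-20250929",
    max_tokens: 16000,
    messages: [{ role: "user", content: prompt }],
  };
  if (extendedThinking) {
    // budget_tokens must stay below max_tokens; 8000 is an illustrative value.
    request.thinking = { type: "enabled", budget_tokens: 8000 };
  }
  return request;
}

// Routine task: no thinking block. Hard task: thinking enabled.
const fast = buildRequest("Rename this variable.", false);
const deep = buildRequest("Find the race condition in this scheduler.", true);
console.log(fast.thinking === undefined, deep.thinking?.budget_tokens);
```

Keeping the switch in one helper lets an agent decide per request whether the extra latency of extended thinking is worth it.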

**Precision and Reduced Hallucinations**

The model prioritizes logic and precision, reducing the sycophancy and hallucinations observed in earlier Claude models. With a 64,000-token output limit and a 200,000-token input window, it can process entire repositories while generating full application files in a single pass. The release also introduced checkpoints for agentic workflows in Claude Code, so a session can roll back to an earlier state and correct mistakes.
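To make the two limits concrete, here is a rough sketch of a pre-flight check for whether a set of files fits in a single request. It assumes about 4 characters per token, which is a coarse heuristic and not the model's tokenizer. It also takes the conservative view that requested output shares the 200,000-token window. The function names are hypothetical.

```typescript
// Limits quoted in the text above.
const INPUT_WINDOW_TOKENS = 200000;
const MAX_OUTPUT_TOKENS = 64000;

// Assumption: roughly 4 characters per token (heuristic only).
const CHARS_PER_TOKEN = 4;

function estimateTokens(text: string): number {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

// True when the estimated prompt plus the requested output fits in the window
// and the requested output does not exceed the output limit.
function fitsInWindow(files: string[], requestedOutputTokens: number): boolean {
  if (requestedOutputTokens > MAX_OUTPUT_TOKENS) return false;
  const promptTokens = files.reduce((sum, file) => sum + estimateTokens(file), 0);
  return promptTokens + requestedOutputTokens <= INPUT_WINDOW_TOKENS;
}

// 400,000 characters is about 100,000 tokens, leaving room for a full 64,000-token reply.
console.log(fitsInWindow(["a".repeat(400000)], 64000));
// 600,000 characters is about 150,000 tokens, which does not.
console.log(fitsInWindow(["a".repeat(600000)], 64000));
```

For real decisions, replace the heuristic with an actual token count, since code and non-English text often tokenize differently from plain English prose.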

**Multimodal and Reasoning Prowess**

Beyond software development, Sonnet 4.5 excels in multimodal document analysis and financial modeling. Its internal logic prioritizes architectural context, enabling it to map out large-scale systems more effectively than predecessors. Whether processing handwritten notes or implementing API integrations, the model maintains high factual accuracy and strict instruction following across long-horizon tasks.

Use Cases

Discover the different ways you can use Claude 4.5 Sonnet to achieve great results.

Autonomous Software Engineering

Managing end-to-end development from initial requirements to automated commits using terminal interfaces.

GUI-Based Automation

Automating web browsing and data entry into legacy applications using native computer use capabilities.

Multi-Agent Orchestration

Delegating specialized tasks to sub-agents like reviewers and builders within a central planning loop.

Complex Code Refactoring

Re-architecting multi-file codebases while maintaining consistency across 200,000 tokens of active context.

Nuanced Financial Analysis

Analyzing quarterly reports and spreadsheets with vision to identify discrepancies and investment insights.

Interactive Data Visualization

Generating dynamic charts from complex datasets using embedded code execution and real-time building.
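The Multi-Agent Orchestration use case above can be sketched as a planning loop that hands each task to a builder and then to a reviewer. This is a structural illustration only. The sub-agents are stubs standing in for model calls, and `SubAgent`, `builder`, `reviewer`, and `orchestrate` are hypothetical names.

```typescript
// A sub-agent is modeled as an async function from an input string to a result.
// In a real system each would wrap a model call; here they are stubs.
type SubAgent = (input: string) => Promise<string>;

// Stub builder: produces a draft for the task.
const builder: SubAgent = async (task) => `draft for: ${task}`;

// Stub reviewer: approves anything that looks like a builder draft.
const reviewer: SubAgent = async (draft) =>
  draft.startsWith("draft for:") ? "approved" : "rejected";

// Central planning loop: build each task, then send the draft to review.
async function orchestrate(tasks: string[]): Promise<Map<string, string>> {
  const verdicts = new Map<string, string>();
  for (const task of tasks) {
    const draft = await builder(task);
    verdicts.set(task, await reviewer(draft));
  }
  return verdicts;
}

orchestrate(["add login route", "write migration"]).then((verdicts) => {
  verdicts.forEach((verdict, task) => console.log(`${task}: ${verdict}`));
});
```

Tasks run sequentially here for clarity. Independent tasks could be dispatched with `Promise.all` instead.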

API Quick Start

anthropic/claude-4-5-sonnet

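Install the SDK and start making API calls in minutes.

    // Requires: npm install @anthropic-ai/sdk
    import Anthropic from '@anthropic-ai/sdk';

    // The API key is read from the ANTHROPIC_API_KEY environment variable.
    const anthropic = new Anthropic({
      apiKey: process.env.ANTHROPIC_API_KEY,
    });

    const response = await anthropic.messages.create({
      model: "claude-sonnet-4-5-20250929",
      max_tokens: 1024,
      messages: [
        { role: "user", content: "Analyze this codebase for security flaws." }
      ],
    });

    // Content is a list of blocks; print the first one if it is text.
    const block = response.content[0];
    if (block.type === "text") console.log(block.text);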

Community Feedback

See what the community thinks about Claude 4.5 Sonnet

“Claude 4.5 Sonnet is available everywhere today, the best coding model in the world.”

“This fixes one of the most painful scaling issues with MCP setups. Was watching context evaporate before any actual work began.”

“Claude Code-Sonnet 4.5 is way ahead of Gemini 3.0 Pro for complex Dockerized refactoring tasks.”

“The pattern: Mistakes become documentation. You add a rule to CLAUDE.md and it never happens again.”

“The hybrid reasoning mode is a lifesaver for debugging complex async logic where regular models just loop.”

“Pricing parity with 3.5 Sonnet makes this an easy upgrade for all our production agent pipelines.”

Related Videos

Watch tutorials, reviews, and discussions about Claude 4.5 Sonnet

“This new 4.5 Sonnet model is outperforming even Opus 4.1 on the SWE-bench Verified test”

“It was able to maintain focus for over 30 hours on complex multi-step tasks”

“It leads on the OSWorld computer use benchmark with a score of 61.4 percent”

“The internal reasoning engine handles Python environments with far more stability than 3.5”

“Terminal integration feels much tighter with almost zero hallucinated shell commands”

“Sonnet 4.5 is now leading in agentic tool use... a 20 percent jump, which is really exciting”

“Claude Code with Sonnet 4.5 finished the entire Stripe implementation in 15 minutes”

“Claude Sonnet 4.5 was faster by a lot and better by a decent amount”

“The thinking toggle allows you to throw more compute at specific blocks of code”

“It retains context perfectly even when you're 150,000 tokens deep into a massive project”

“It is the best performing model ever when it controls your computer”

“Drop in error rates for coding from 9 percent to basically zero”

“Claude imagine might be the coolest feature... a real time app building experience”

“The MCP integration allows it to search tools without eating up your prompt context”

“Vision latency is significantly reduced when analyzing complex UI layouts”

Pro Tips

Expert tips to help you get the most out of Claude 4.5 Sonnet and achieve better results.

Enable MCP Tool Search

Use Model Context Protocol Tool Search to reduce context usage by 85 percent and leave room for active files.

Leverage Agentic Checkpoints

In Claude Code, checkpoints are saved automatically as the agent edits files. Use the /rewind command (or press Esc twice) to roll back instantly if a major refactor goes wrong.

Context Budgeting

Clear the conversation history between unrelated tasks so that stale context does not crowd the window or degrade reasoning accuracy.

System Prompt Hierarchy

Define the model persona and strict output constraints in a dedicated configuration file for cross-agent consistency.
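The Context Budgeting and System Prompt Hierarchy tips can be combined in one small session wrapper. In this minimal sketch, `AgentConfig` stands for the parsed contents of a shared configuration file. That shape, the class name, and the method names are all hypothetical.

```typescript
type Role = "user" | "assistant";

interface Turn {
  role: Role;
  content: string;
}

// Hypothetical shape of a shared agent configuration file (for example, parsed JSON).
interface AgentConfig {
  persona: string;
  outputRules: string[];
}

class AgentSession {
  private history: Turn[] = [];
  private readonly config: AgentConfig;

  constructor(config: AgentConfig) {
    this.config = config;
  }

  // Derived only from the config, so every agent built from the same file
  // receives identical instructions.
  systemPrompt(): string {
    const rules = this.config.outputRules.map((rule) => `- ${rule}`);
    return [this.config.persona, ...rules].join("\n");
  }

  addTurn(role: Role, content: string): void {
    this.history.push({ role, content });
  }

  // Call between unrelated tasks: drops prior turns, keeps the system prompt.
  startNewTask(): void {
    this.history = [];
  }

  turnCount(): number {
    return this.history.length;
  }
}

const session = new AgentSession({
  persona: "You are a careful code reviewer.",
  outputRules: ["Reply in plain text.", "Cite file paths."],
});
session.addTurn("user", "Review auth.ts");
session.addTurn("assistant", "Looks fine.");
session.startNewTask();
console.log(session.turnCount(), session.systemPrompt().split("\n").length);
```

Because the system prompt lives outside the turn history, resetting between tasks never loses the persona or the output constraints.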

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Related AI Models

GPT-5.3 Codex

OpenAI

GPT-5.3 Codex is OpenAI's 2026 frontier coding agent, featuring a 400K context window, 77.3% Terminal-Bench score, and superior logic for complex software...

Qwen3.5-Omni

alibaba

Qwen3.5-Omni is a natively omnimodal AI by Alibaba Cloud, offering seamless audio-visual reasoning, real-time voice chat, and 256k context for low-latency apps.

GPT-5.4

OpenAI

GPT-5.4 is OpenAI's frontier model featuring a 1.05M context window and Extreme Reasoning. It excels at autonomous UI interaction and long-form data analysis.

Kimi K2 Thinking

Moonshot

Kimi K2 Thinking is Moonshot AI's trillion-parameter reasoning model. It outperforms GPT-5 on HLE and supports 300 sequential tool calls autonomously for...

GPT-5.2

OpenAI

GPT-5.2 is OpenAI's flagship model for professional tasks, featuring a 400K context window, elite coding, and deep multi-step reasoning capabilities.

Claude 3.7 Sonnet

Anthropic

Claude 3.7 Sonnet is Anthropic's first hybrid reasoning model, delivering state-of-the-art coding capabilities, a 200k context window, and visible thinking.

Qwen3.6-Max-Preview

alibaba

Qwen3.6-Max-Preview is Alibaba's flagship MoE model featuring 1M context, a native thinking mode, and SOTA scores in agentic coding and reasoning.

GLM-5

Zhipu (GLM)

GLM-5 is Zhipu AI's 744B parameter open-weight powerhouse, excelling in long-horizon agentic tasks, coding, and factual accuracy with a 200k context window.
