GPT-4o mini

OpenAI's most cost-efficient small model, GPT-4o mini offers multimodal intelligence and high-speed performance at a significantly lower price point.

About GPT-4o mini

Learn about GPT-4o mini's capabilities, features, and how it can help you achieve better results.

A New Standard for Small Models

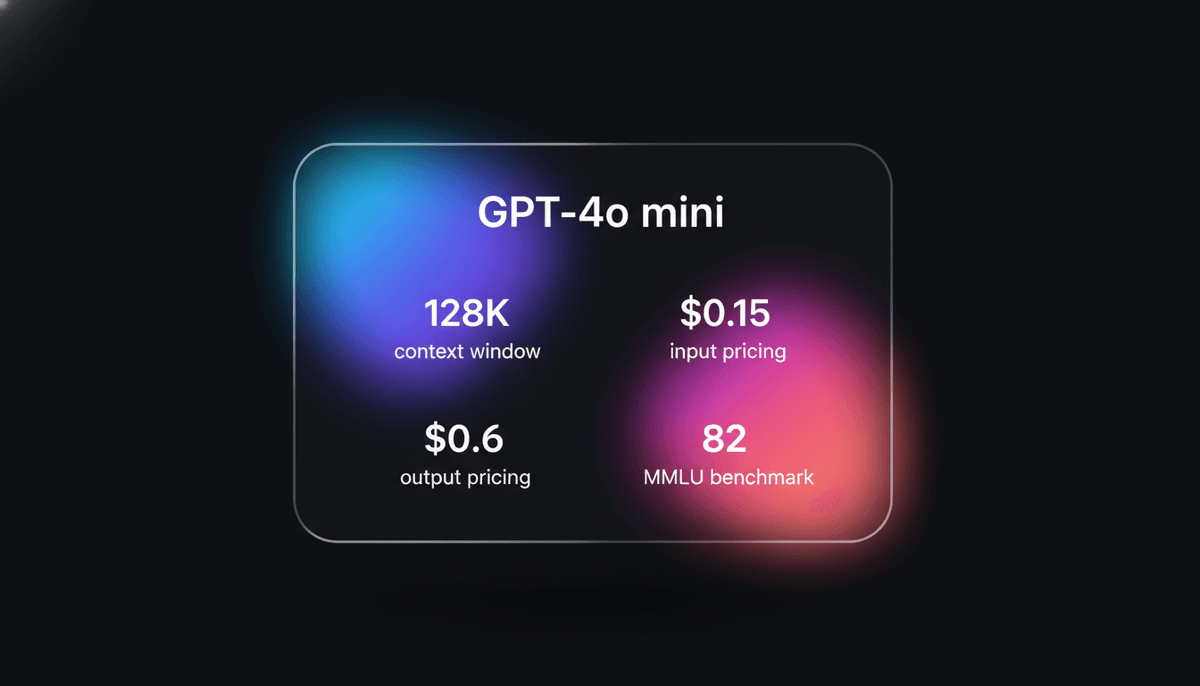

GPT-4o mini represents a significant leap in AI efficiency, designed to replace GPT-3.5 Turbo as the go-to model for developers. Built with a native multimodal architecture, it delivers GPT-4-class performance at a fraction of the cost and latency. It has a 128,000-token context window and supports outputs of up to 16,384 tokens per request, making it well suited to processing long-form documents and high-volume data streams.
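As a rough illustration of the limits above, the sketch below checks whether a prompt leaves room for the reply inside the context window. The `estimateTokens` heuristic (about four characters per token) and both function names are assumptions made for illustration; an actual tokenizer would be needed for exact counts.

```javascript
// Limits quoted in the text above.
const CONTEXT_WINDOW = 128000;
const MAX_OUTPUT_TOKENS = 16384;

// Hypothetical heuristic: roughly 4 characters per token for English text.
// This is an approximation, not the model's real tokenizer.
function estimateTokens(text) {
  return Math.ceil(text.length / 4);
}

// Returns true when the estimated prompt size plus the reserved reply
// budget fits inside the context window.
function fitsContext(promptText, reservedOutputTokens = MAX_OUTPUT_TOKENS) {
  const reserved = Math.min(reservedOutputTokens, MAX_OUTPUT_TOKENS);
  return estimateTokens(promptText) + reserved <= CONTEXT_WINDOW;
}
```

Reserving a smaller reply budget, for example `fitsContext(longText, 1000)`, leaves more of the window for the prompt when only a short summary is expected.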

Intelligence Meets Affordability

Unlike previous small models that sacrificed intelligence for speed, GPT-4o mini maintains strong reasoning capabilities across text and vision tasks. It is more than 60% cheaper than GPT-3.5 Turbo and significantly more capable, scoring 82% on the MMLU benchmark. The model is optimized for applications where low latency and high reliability are paramount, such as real-time customer assistants and large-scale data classification engines.

Use Cases

Discover the different ways you can use GPT-4o mini to achieve great results.

Customer Support Automation

Handling high volumes of customer inquiries with low latency and high accuracy at a fraction of the cost.

Content Summarization

Processing large documents or long-form content into concise summaries within the 128k context window.

Data Extraction

Converting unstructured text or images into structured data formats like JSON for database ingestion.

Multilingual Translation

Providing real-time translation across dozens of languages for chat applications and global communication.

Educational Tutoring

Serving as an interactive study assistant for students needing help with math, science, and language arts.

Basic Vision Tasks

Analyzing images to identify objects, extract text via OCR, or provide descriptions for accessibility.
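For the vision use case, a request pairs a text instruction with an image. The sketch below builds such a message using the `image_url` content part of the Chat Completions API; the helper name, the prompt, and the example URL are placeholders chosen for illustration.

```javascript
// Builds a user message that combines a text instruction with an image URL.
function buildVisionMessage(instruction, imageUrl) {
  return {
    role: "user",
    content: [
      { type: "text", text: instruction },
      { type: "image_url", image_url: { url: imageUrl } },
    ],
  };
}

// Example request body; the URL is a placeholder, not a real image.
const visionRequest = {
  model: "gpt-4o-mini",
  messages: [
    buildVisionMessage(
      "Transcribe the text in this receipt.",
      "https://example.com/receipt.png"
    ),
  ],
};
```

The resulting object could be passed to `openai.chat.completions.create(visionRequest)` in the same way as the text-only request in the quick start.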

API Quick Start

openai/gpt-4o-mini

    import OpenAI from "openai";

    // The client reads the API key from the OPENAI_API_KEY environment variable.
    const openai = new OpenAI();

    async function main() {
      // Send a single user message to GPT-4o mini.
      const completion = await openai.chat.completions.create({
        messages: [{ role: "user", content: "Explain quantum physics." }],
        model: "gpt-4o-mini",
      });

      // Print the text of the first returned choice.
      console.log(completion.choices[0].message.content);
    }

    main();

Install the SDK and start making API calls in minutes.

Community Feedback

See what the community thinks about GPT-4o mini

“GPT-4o mini has basically killed the market for fine-tuning older models for basic RAG. The costs are too low to ignore.”

“The speed is just insane. I'm getting tokens back almost instantly for my translation agent.”

“OpenAI really forced the hands of Anthropic and Google with this pricing. $0.15 for 1M tokens is a new floor.”

“I swapped out 3.5 for mini and the logic improvement was visible in the first five minutes of testing.”

“It is finally cheap enough to use LLMs for basic data cleaning at scale without a massive cloud bill.”

“The vision performance for OCR is actually better than some specialized models that cost 10x more.”

Related Videos

Watch tutorials, reviews, and discussions about GPT-4o mini

“It is faster and cheaper than GPT-3.5 Turbo across the board.”

“The vision capabilities for a model this small are genuinely surprising.”

“Pricing is basically a race to zero now with this release.”

“It manages to keep the context window massive while being tiny.”

“Benchmarks show it beating Claude Haiku in almost every category.”

“GPT-4o mini is a lightweight model, so it's much faster than GPT-4o.”

“It's way way faster than GPT 4.”

“For daily tasks, most users won't even notice the reasoning difference.”

“The image recognition is very consistent for basic objects.”

“It handles complex instructions much better than the old 3.5 model.”

“It currently outperforms GPT-4 on chat preferences in the LMSYS leaderboard.”

“Everything looks perfect and you know this particular receipt looks like a typical receipt.”

“The response time is practically sub-second for short prompts.”

“It is very effective at summarizing long PDFs through the API.”

“You can run millions of tokens for just a few dollars.”

Pro Tips

Expert tips to help you get the most out of GPT-4o mini and achieve better results.

Use for RAG

Utilize the low input cost to perform extensive Retrieval Augmented Generation without high expenses.
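One way to picture the RAG tip: retrieved passages are placed in the prompt so the model answers from them. The sketch below only assembles the messages; retrieval itself is out of scope, and the function name and prompt wording are illustrative assumptions.

```javascript
// Assembles retrieved passages into a grounded chat prompt.
// `passages` is an array of strings returned by whatever retriever is in use.
function buildRagMessages(question, passages) {
  // Number each passage so the answer can refer back to its source.
  const context = passages.map((p, i) => `[${i + 1}] ${p}`).join("\n");
  return [
    {
      role: "system",
      content:
        "Answer using only the numbered context passages. " +
        "Say so if the answer is not in them.",
    },
    { role: "user", content: `Context:\n${context}\n\nQuestion: ${question}` },
  ];
}
```

Because input tokens are the cheap side of the bill, including several passages per question is usually affordable, as long as the total stays within the 128k context window.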

Structure with JSON Mode

Use the JSON mode or function calling parameters to ensure consistent data structures for backend workflows.
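A minimal sketch of the JSON mode tip, assuming the Chat Completions `response_format: { type: "json_object" }` parameter. JSON mode expects the word "JSON" to appear in the messages, which the system prompt covers here. The field names `name` and `total` and both helper names are hypothetical.

```javascript
// Builds a request body that asks for a JSON object in the reply.
function buildExtractionRequest(text) {
  return {
    model: "gpt-4o-mini",
    response_format: { type: "json_object" },
    messages: [
      {
        role: "system",
        content:
          'Extract the sender name and total amount. Reply with a JSON object with keys "name" and "total".',
      },
      { role: "user", content: text },
    ],
  };
}

// Parses the reply text; returns null instead of throwing when the reply
// is not valid JSON or is not a plain object.
function parseExtraction(content) {
  try {
    const data = JSON.parse(content);
    const isObject =
      typeof data === "object" && data !== null && !Array.isArray(data);
    return isObject ? data : null;
  } catch {
    return null;
  }
}
```

Validating the parsed object before it reaches the backend is still worthwhile, since JSON mode constrains the syntax of the reply but not which keys it contains.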

Batch Processing

Employ OpenAI's Batch API with this model to reduce costs by 50% for non-urgent tasks.
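The Batch API takes a JSONL input file with one request per line. The sketch below builds those lines for a list of prompts, assuming the documented line shape (`custom_id`, `method`, `url`, `body`). The `task-N` id scheme and the function name are illustrative, and uploading the file and creating the batch are left out.

```javascript
// Converts a list of prompts into JSONL text for a batch input file.
function toBatchLines(prompts) {
  return prompts
    .map((prompt, i) =>
      JSON.stringify({
        custom_id: `task-${i + 1}`, // hypothetical id scheme to match results later
        method: "POST",
        url: "/v1/chat/completions",
        body: {
          model: "gpt-4o-mini",
          messages: [{ role: "user", content: prompt }],
        },
      })
    )
    .join("\n");
}
```

Results come back asynchronously and are keyed by `custom_id`, so the ids need to be unique within a file.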

Temperature Tuning

Set a lower temperature between 0.1 and 0.3 for factual extraction tasks to maximize accuracy.
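The temperature tip can be applied as a small wrapper around a request body. This is a sketch only: the helper name is hypothetical, and the 0.1 to 0.3 bounds simply mirror the range suggested above.

```javascript
// Returns a copy of the request with a temperature clamped to the
// 0.1-0.3 range suggested for factual extraction. The input is not mutated.
function withFactualTemperature(request, temperature = 0.2) {
  const clamped = Math.min(0.3, Math.max(0.1, temperature));
  return { ...request, temperature: clamped };
}
```

For example, `withFactualTemperature({ model: "gpt-4o-mini", messages })` yields a request with `temperature: 0.2` that can be passed to `openai.chat.completions.create`.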

Related AI Models

Qwen3-Coder-Next

alibaba

Qwen3-Coder-Next is Alibaba Cloud's elite Apache 2.0 coding model, featuring an 80B MoE architecture and 256k context window for advanced local development.

GLM-4.7

Zhipu (GLM)

GLM-4.7 by Zhipu AI is a flagship 358B MoE model featuring a 200K context window, elite 73.8% SWE-bench performance, and native Deep Thinking for agentic...

MiniMax M2.5

minimax

MiniMax M2.5 is a SOTA MoE model featuring a 1M context window and elite agentic coding capabilities at disruptive pricing for autonomous agents.

MiMo V2.5 Pro

Other

MiMo V2.5 Pro is Xiaomi's open-source 1.02T parameter MoE model featuring a 1M context window, native multimodality, and elite agentic coding performance.

Gemini 3.5 Flash

Gemini 3.5 Flash is Google's high-speed multimodal model with a 1M context window, optimized for sub-second agentic loops and complex coding tasks.

Qwen 3.7 Max

alibaba

Qwen 3.7 Max is Alibaba’s flagship AI model for deep reasoning and autonomous agent tasks, featuring a 256k context window and top-tier coding performance.

Qwen3.5-Omni

alibaba

Qwen3.5-Omni is a natively omnimodal AI by Alibaba Cloud, offering seamless audio-visual reasoning, real-time voice chat, and 256k context for low-latency apps.

DeepSeek v4

DeepSeek

DeepSeek v4 is a 1.6T parameter MoE model featuring a 1M token context window and native multimodal support for text, vision, and video at disruptive prices.

Frequently Asked Questions

Find answers to common questions about GPT-4o mini