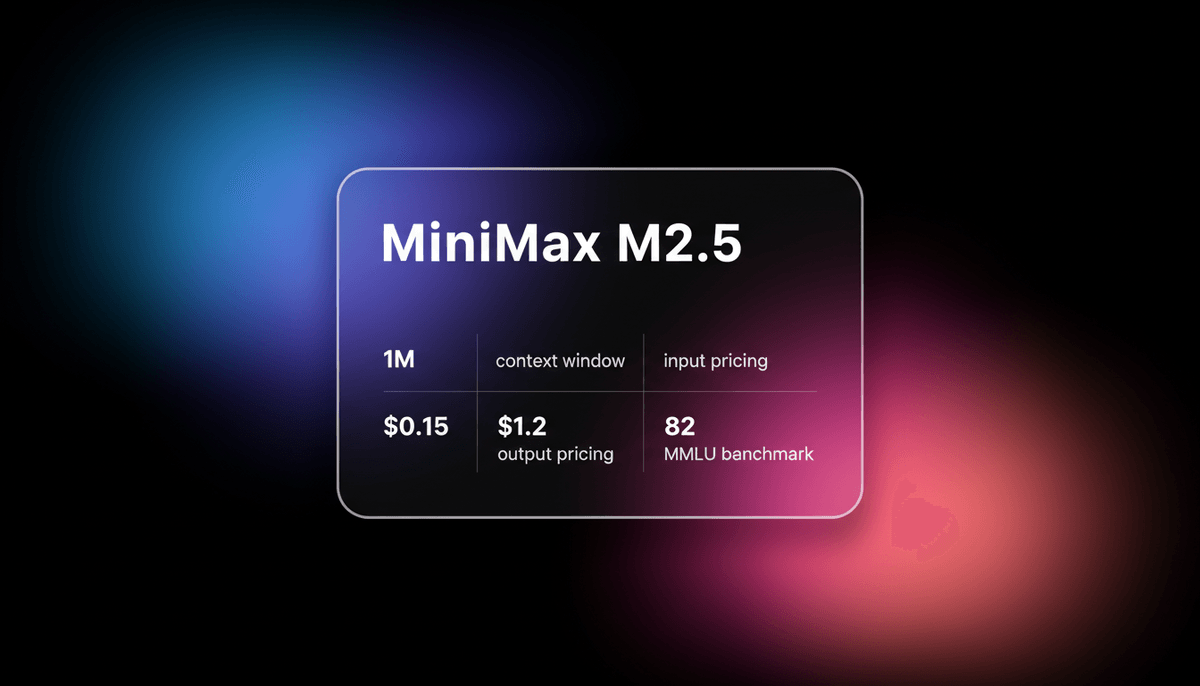

MiniMax M2.5

MiniMax M2.5 is a SOTA MoE model featuring a 1M context window and elite agentic coding capabilities at disruptive pricing for autonomous agents.

About MiniMax M2.5

Learn about MiniMax M2.5's capabilities, features, and how it can help you achieve better results.

Efficient Frontier Architecture

MiniMax M2.5 is a high-efficiency frontier model built on a 230B Mixture-of-Experts (MoE) architecture. By activating only 10 billion parameters per forward pass, it achieves inference speeds and pricing structures that are nearly 20 times more efficient than proprietary giants. It is engineered specifically for agentic intelligence, prioritizing structured logic and multi-step planning over simple chat completions. This sparse design enables the model to maintain high intelligence without the massive compute overhead of traditional dense models.

Advanced Coding Intelligence

The model's standout feature is its Architect Mindset, which allows it to visualize logic structures and project hierarchies before generating code. This makes it particularly effective for autonomous software engineering, where it matches the state-of-the-art with an 80.2% score on SWE-Bench Verified. With a 1-million-token context window, it can ingest entire codebases, enabling deep repository audits and complex system refactoring that were previously cost-prohibitive.

Enterprise and Local Deployment

MiniMax M2.5 supports over 10 programming languages and native throughput of up to 100 tokens per second on its lightning variant. Because it is available as an open-weight model, developers can deploy it locally for full data privacy while retaining access to the same logic-heavy reasoning found in the hosted API. This versatility makes it a practical choice for both cloud-based agent pipelines and on-premise development tools.

Use Cases

Discover the different ways you can use MiniMax M2.5 to achieve great results.

Autonomous Software Engineering

Resolving real-world GitHub issues and performing multi-file debugging using agent harnesses.

Enterprise Agent Pipelines

Powering always-on background agents for research and data synthesis at low API costs.

Legacy Code Modernization

Refactoring massive outdated repositories into modern frameworks while maintaining logic standards.

Architectural Code Reviews

Analyzing project hierarchies to provide logic feedback and structural optimization suggestions.

High-Volume Document Editing

Processing large office files with high fidelity for financial and legal modeling.

Low-Latency Developer Tools

Driving IDE extensions and CLI tools that require sub-second response times for assistance.

Strengths

Limitations

API Quick Start

minimax/minimax-m2.5

import OpenAI from 'openai';

const client = new OpenAI({

apiKey: process.env.MINIMAX_API_KEY,

baseURL: 'https://api.minimax.io/v1',

});

async function main() {

const response = await client.chat.completions.create({

model: 'minimax-m2.5',

messages: [{ role: 'user', content: 'Design a microservices architecture for a fintech app.' }],

temperature: 0.1,

});

console.log(response.choices[0].message.content);

}

main();Install the SDK and start making API calls in minutes.

Community Feedback

See what the community thinks about MiniMax M2.5

“MiniMax M2.5 pricing is the real story, cheap enough to change architecture, not just budgets.”

“M2.5 is hitting SOTA numbers and it's a 10B active parameter model, meaning it's fast and cheap.”

“The model reduces the heavy lifting users had to do just to keep things moving.”

“M2.5 is matching Claude Opus 4.6 throughput at a fraction of the cost.”

“Running M2.5 locally on a Mac Studio is snappy. The 10B active params really make a difference.”

“The architectural planning step catches logic errors before it even writes a single line of code.”

Related Videos

Watch tutorials, reviews, and discussions about MiniMax M2.5

“It's almost 20 times cheaper than the top proprietary options.”

“This is a top tier coding and agentic model that's much faster and drastically cheaper.”

“The performance on SWE-bench verified really puts it in the elite category.”

“You're getting frontier intelligence at open-source hardware requirements.”

“The MoE architecture here is tuned perfectly for low-latency coding tasks.”

“MiniMax is serving the model at 3% of the cost of Opus 4.6 in output tokens.”

“The cost of intelligence is actually nearing the cost of electricity at this point.”

“It handles large repo context windows without the typical mid-doc forgetting.”

“For developer tools, the speed of the lightning variant is a massive UX win.”

“It's the first time I've seen a model this cheap actually solve complex logic bugs.”

“It costs just $1 to run the model continuously for an hour at 100 tokens per second.”

“The inner thinking really shines here because it can course correct immediately.”

“Testing it against GPT-4o, it consistently provides better multi-file refactors.”

“The agentic capabilities are built-in, not just an afterthought in the prompt.”

“It's essentially free for small developers given the input pricing tiers.”

Supercharge your workflow with AI Automation

Automatio combines the power of AI agents, web automation, and smart integrations to help you accomplish more in less time.

Pro Tips

Expert tips to help you get the most out of MiniMax M2.5 and achieve better results.

Adopt the Architect Mindset

Ask the model to generate a project structure before requesting the actual implementation code.

Utilize the 1M Context

Provide complete documentation or entire modules to ensure global awareness of your codebase.

Use the HighSpeed Plan

Select the M2.5-HighSpeed endpoint to achieve a steady 100 tokens per second for interactive agents.

Iterative Refinement

Ask the model to review its initial output for logic gaps or security vulnerabilities.

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Related AI Models

GLM-4.7

Zhipu (GLM)

GLM-4.7 by Zhipu AI is a flagship 358B MoE model featuring a 200K context window, elite 73.8% SWE-bench performance, and native Deep Thinking for agentic...

Qwen3-Coder-Next

alibaba

Qwen3-Coder-Next is Alibaba Cloud's elite Apache 2.0 coding model, featuring an 80B MoE architecture and 256k context window for advanced local development.

GPT-4o mini

OpenAI

OpenAI's most cost-efficient small model, GPT-4o mini offers multimodal intelligence and high-speed performance at a significantly lower price point.

MiMo V2.5 Pro

Other

MiMo V2.5 Pro is Xiaomi's open-source 1.02T parameter MoE model featuring a 1M context window, native multimodality, and elite agentic coding performance.

DeepSeek-V3.2-Speciale

DeepSeek

DeepSeek-V3.2-Speciale is a reasoning-first LLM featuring gold-medal math performance, DeepSeek Sparse Attention, and a 131K context window. Rivaling GPT-5...

Gemini 3.5 Flash

Gemini 3.5 Flash is Google's high-speed multimodal model with a 1M context window, optimized for sub-second agentic loops and complex coding tasks.

Qwen 3.7 Max

alibaba

Qwen 3.7 Max is Alibaba’s flagship AI model for deep reasoning and autonomous agent tasks, featuring a 256k context window and top-tier coding performance.

Qwen3.5-Omni

alibaba

Qwen3.5-Omni is a natively omnimodal AI by Alibaba Cloud, offering seamless audio-visual reasoning, real-time voice chat, and 256k context for low-latency apps.

Frequently Asked Questions

Find answers to common questions about MiniMax M2.5