How to Scrape Arc.dev: The Complete Guide to Remote Job Data

Learn how to scrape remote developer jobs, salary data, and tech stacks from Arc.dev. Extract high-quality tech listings for market research and lead...

Anti-Bot Protection Detected

- Cloudflare: Enterprise-grade WAF and bot management. Uses JavaScript challenges, CAPTCHAs, and behavioral analysis. Requires browser automation with stealth settings.

- DataDome: Real-time bot detection with ML models. Analyzes device fingerprint, network signals, and behavioral patterns. Common on e-commerce sites.

- Rate Limiting: Limits requests per IP/session over time. Can be bypassed with rotating proxies, request delays, and distributed scraping.

- Browser Fingerprinting: Identifies bots through browser characteristics: canvas, WebGL, fonts, plugins. Requires spoofing or real browser profiles.

- Behavioral Analysis

About Arc

Learn what Arc offers and what valuable data can be extracted from it.

The Premier Remote Talent Marketplace

Arc (formerly CodementorX) is a leading global marketplace for vetted remote software engineers and tech professionals. Unlike generic job boards, Arc operates a highly curated platform that connects top-tier developers with companies ranging from fast-growing startups to established tech giants. The platform is particularly known for its rigorous vetting process and its focus on long-term remote roles rather than short-term gigs.

Rich Tech-Centric Data

The website is a massive repository of structured data, including detailed job descriptions, salary benchmarks across different regions, and specific technical requirements. Each listing typically contains a rich set of attributes such as required tech stacks, timezone overlap needs, and remote work policies (e.g., 'Work from Anywhere' vs. 'Specific Country').

Strategic Value of Arc Data

For recruiters and market analysts, scraping Arc.dev provides high-signal data on compensation trends and emerging technology adoption. Because the listings are vetted and updated frequently, the data is far more accurate than what is found on uncurated aggregators, making it a goldmine for competitive intelligence and specialized recruitment pipelines.

Why Scrape Arc?

Discover the business value and use cases for extracting data from Arc.

Access Vetted Talent Data

Arc filters out low-quality listings, ensuring you only extract high-value roles from verified tech companies and startups.

Real-Time Salary Benchmarking

Collect accurate remote salary and hourly rate data to create competitive compensation models for the global tech market.

Track Tech Stack Adoption

Analyze the demand for specific frameworks and programming languages like Rust, Go, or AI tools by monitoring job requirements over time.

Generate High-Quality Leads

Identify fast-growing companies that are actively scaling their engineering teams, making them prime leads for HR software or recruiting services.

Competitive Hiring Insights

Monitor which technical roles your competitors are prioritizing to gain insights into their product roadmap and expansion strategy.

Build Niche Job Portals

Aggregate premium data from Arc to populate specialized job boards focusing on specific technologies or geographic remote constraints.

Scraping Challenges

Technical challenges you may encounter when scraping Arc.

Aggressive Anti-Bot Protection

The site uses Cloudflare and DataDome, which can detect and block scrapers based on IP reputation and advanced browser fingerprints.

SPA Architecture

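Arc is built with Next.js, so much of the page content is hydrated on the client side. A headless browser is usually needed to see the rendered listings, unless the data can be read directly from the embedded __NEXT_DATA__ JSON script.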

Strict Rate Limiting

Frequent requests to job detail pages often trigger 429 errors or CAPTCHAs if not managed with human-like browsing patterns.

Dynamic Selectors

CSS classes are often obfuscated or change during site deployments, which can break traditional scrapers relying on static HTML attributes.
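One common way to cope with the 429 responses described above is to retry with exponential backoff and jitter. The sketch below is a minimal illustration, not Arc-specific behavior: the function names, the default delays, and the assumption that the response object exposes a `status_code` attribute (as `requests` responses do) are all choices made for this example.

```python
import random
import time
from typing import Any, Callable, Optional


def backoff_delay(attempt: int, base: float = 2.0, cap: float = 60.0) -> float:
    """Exponential backoff with full jitter: a random delay in [0, min(cap, base * 2**attempt)]."""
    return random.uniform(0, min(cap, base * (2 ** attempt)))


def fetch_with_retries(
    fetch: Callable[[], Any],
    max_attempts: int = 5,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[Any]:
    """Call fetch() until the response is not a 429, or give up after max_attempts.

    `fetch` is any zero-argument callable returning an object with a
    `status_code` attribute. Returns None if every attempt was rate limited.
    """
    for attempt in range(max_attempts):
        response = fetch()
        if response.status_code != 429:
            return response
        # Rate limited: wait longer (on average) after each failed attempt
        sleep(backoff_delay(attempt))
    return None
```

In practice `fetch` could be something like `lambda: requests.get(url, headers=headers, timeout=30)`. Passing `sleep` as a parameter keeps the function easy to test without real waiting.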

Scrape Arc with AI

No coding required. Extract data in minutes with AI-powered automation.

How It Works

Describe What You Need

Tell the AI what data you want to extract from Arc. Just type it in plain language — no coding or selectors needed.

AI Extracts the Data

Our artificial intelligence navigates Arc, handles dynamic content, and extracts exactly what you asked for.

Get Your Data

Receive clean, structured data ready to export as CSV, JSON, or send directly to your apps and workflows.

Why Use AI for Scraping

Automatio's AI-powered platform lets you scrape Arc without writing any code: describe the data you want in plain language and the AI extracts it automatically.


- Bypass Advanced Shielding: Automatio automatically handles browser fingerprinting and uses residential proxies to bypass Cloudflare and DataDome detection effortlessly.

- No-Code Visual Mapping: Extract job data by simply clicking on elements, avoiding the need to write and maintain complex XPaths or custom scripts.

- Dynamic Content Execution: Automatio natively renders JavaScript and waits for elements to appear, ensuring all dynamic Next.js data is captured correctly.

- Scheduled Data Extraction: Easily set your scraper to run daily to capture new job posts and changes in hiring status without any manual intervention.

No-Code Web Scrapers for Arc

Point-and-click alternatives to AI-powered scraping

Several no-code tools like Browse.ai, Octoparse, Axiom, and ParseHub can help you scrape Arc. These tools use visual interfaces to select elements, but they come with trade-offs compared to AI-powered solutions.


Typical Workflow with No-Code Tools

- Install browser extension or sign up for the platform

- Navigate to the target website and open the tool

- Point-and-click to select data elements you want to extract

- Configure CSS selectors for each data field

- Set up pagination rules to scrape multiple pages

- Handle CAPTCHAs (often requires manual solving)

- Configure scheduling for automated runs

- Export data to CSV, JSON, or connect via API

Common Challenges

- Learning curve: Understanding selectors and extraction logic takes time

- Selectors break: Website changes can break your entire workflow

- Dynamic content issues: JavaScript-heavy sites often require complex workarounds

- CAPTCHA limitations: Most tools require manual intervention for CAPTCHAs

- IP blocking: Aggressive scraping can get your IP banned

Code Examples

Python + Requests

import json

import requests
from bs4 import BeautifulSoup

# Note: plain requests are often blocked by Arc's Cloudflare setup.
# A realistic User-Agent helps, and a proxy may also be needed.
url = 'https://arc.dev/remote-jobs'
headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36'
}

try:
    response = requests.get(url, headers=headers, timeout=30)
    if response.status_code == 200:
        soup = BeautifulSoup(response.text, 'html.parser')
        # Read the embedded Next.js JSON script, which is usually more
        # stable than parsing the rendered HTML.
        data_script = soup.find('script', id='__NEXT_DATA__')
        if data_script and data_script.string:
            page_data = json.loads(data_script.string)
            print(f'Retrieved page data with top-level keys: {list(page_data.keys())}')
        else:
            print('Page loaded, but no __NEXT_DATA__ script was found.')
    else:
        # A 403 typically indicates a Cloudflare block; a 429 indicates rate limiting.
        print(f'Blocked by Anti-Bot. Status code: {response.status_code}')
except (requests.RequestException, ValueError) as e:
    print(f'Error: {e}')

When to Use

Best for static HTML pages where content is loaded server-side. The fastest and simplest approach when JavaScript rendering isn't required.

Advantages

- Fastest execution (no browser overhead)

- Lowest resource consumption

- Easy to parallelize (for example with threads, or with asyncio through an async HTTP client)

- Great for APIs and static pages

Limitations

- Cannot execute JavaScript

- Fails on SPAs and dynamic content

- May struggle with complex anti-bot systems

How to Scrape Arc with Code

Python + Playwright

from playwright.sync_api import sync_playwright

def scrape_arc():
    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        # Use a real user profile or stealth settings
        context = browser.new_context(
            user_agent='Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36'
        )
        page = context.new_page()
        # Navigate and wait for content to hydrate
        page.goto('https://arc.dev/remote-jobs', wait_until='networkidle')
        # Wait for the job card elements (class names may change between deployments)
        page.wait_for_selector('div[class*="JobCard_container"]')
        jobs = page.query_selector_all('div[class*="JobCard_container"]')
        for job in jobs:
            title_el = job.query_selector('h2')
            company_el = job.query_selector('div[class*="JobCard_company"]')
            # Skip cards that are missing either field instead of crashing
            if title_el is None or company_el is None:
                continue
            print(f'Scraped: {title_el.inner_text()} @ {company_el.inner_text()}')
        browser.close()

if __name__ == '__main__':
    scrape_arc()

Python + Scrapy

import scrapy

class ArcSpider(scrapy.Spider):
    name = 'arc_jobs'
    start_urls = ['https://arc.dev/remote-jobs']

    def parse(self, response):
        # Scrapy needs a JS middleware (like scrapy-playwright) for Arc.dev
        for job in response.css('div[class*="JobCard_container"]'):
            yield {
                'title': job.css('h2::text').get(),
                'company': job.css('div[class*="JobCard_company"]::text').get(),
                'salary': job.css('div[class*="JobCard_salary"]::text').get(),
                'tags': job.css('div[class*="JobCard_tags"] span::text').getall()
            }

        next_page = response.css('a[class*="Pagination_next"]::attr(href)').get()
        if next_page:
            yield response.follow(next_page, self.parse)

Node.js + Puppeteer

const puppeteer = require('puppeteer');

(async () => {
  const browser = await puppeteer.launch({ headless: true });
  const page = await browser.newPage();
  await page.setUserAgent('Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36');
  await page.goto('https://arc.dev/remote-jobs', { waitUntil: 'networkidle2' });

  const jobData = await page.evaluate(() => {
    const cards = Array.from(document.querySelectorAll('div[class*="JobCard_container"]'));
    return cards.map(card => ({
      title: card.querySelector('h2')?.innerText,
      company: card.querySelector('div[class*="JobCard_company"]')?.innerText,
      location: card.querySelector('div[class*="JobCard_location"]')?.innerText
    }));
  });

  console.log(jobData);
  await browser.close();
})();

What You Can Do With Arc Data

Explore practical applications and insights from Arc data.


- Remote Salary Index

Human Resources departments use this to build competitive compensation packages for remote-first technical roles.

- Scrape all listings that include salary ranges for senior developers.

- Normalize currency to USD and calculate median pay per tech stack.

- Update the index monthly to track inflation and market demand shifts.

- Recruitment Pipeline Generator

Tech staffing agencies can identify companies that are aggressively scaling their engineering departments.

- Monitor Arc for companies posting multiple high-priority roles simultaneously.

- Extract company details and growth signals (e.g., 'Exclusive' badges).

- Contact hiring managers at these firms with specialized talent leads.

- Niche Tech Aggregator Board

Developers can create specialized job boards (e.g., 'Rust Remote Only') by filtering and re-publishing Arc's vetted listings.

- Scrape listings filtered by specific tags like 'Rust' or 'Go'.

- Clean the descriptions and remove duplicate entries from other boards.

- Post to a niche site or automated Telegram channel for followers.

- Tech Stack Adoption Analysis

Investors and CTOs use this data to determine which frameworks are gaining dominance in the professional market.

- Extract the 'Primary Stack' and 'Tags' fields from all active listings.

- Aggregate the frequency of frameworks like Next.js vs. React vs. Vue.

- Compare quarterly data to identify year-over-year growth trends.

- Timezone Compatibility Tool

Startups in Europe or LATAM can use this to find companies with compatible overlap requirements.

- Scrape 'Timezone Overlap' requirements from global listings.

- Filter by regions (e.g., 'Europe Overlap' or 'EST Compatibility').

- Analyze which tech hubs are most flexible with remote work hours.
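As an illustration of the Remote Salary Index steps above (normalize currency to USD, then take the median per tech stack), here is a minimal sketch. The record fields (`currency`, `salary_min`, `salary_max`, `tags`) and the exchange rates are hypothetical placeholders, not Arc's actual schema or live rates.

```python
from collections import defaultdict
from statistics import median

# Hypothetical fixed exchange rates to USD; a real index would use current rates
USD_RATES = {"USD": 1.0, "EUR": 1.08, "GBP": 1.27}


def median_salary_by_stack(listings, rates=USD_RATES):
    """Return {tag: median salary midpoint in USD} for the given listings.

    Each listing is a dict with assumed keys: currency, salary_min,
    salary_max, tags. Listings in an unknown currency are skipped.
    """
    by_stack = defaultdict(list)
    for job in listings:
        rate = rates.get(job["currency"])
        if rate is None:
            continue
        # Use the midpoint of the advertised range, converted to USD
        midpoint_usd = (job["salary_min"] + job["salary_max"]) / 2 * rate
        for tag in job["tags"]:
            by_stack[tag].append(midpoint_usd)
    return {tag: round(median(values), 2) for tag, values in by_stack.items()}


sample_listings = [
    {"currency": "USD", "salary_min": 100000, "salary_max": 140000, "tags": ["Rust", "Go"]},
    {"currency": "EUR", "salary_min": 80000, "salary_max": 100000, "tags": ["Rust"]},
    {"currency": "USD", "salary_min": 90000, "salary_max": 110000, "tags": ["Go"]},
]
salary_index = median_salary_by_stack(sample_listings)
```

Re-running the same function on each month's scrape and storing the results would give the time series the index needs.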


Pro Tips

Expert advice for successfully extracting data from Arc.

Target the __NEXT_DATA__ tag

Instead of parsing messy HTML, look for the script tag with id='__NEXT_DATA__' which contains the full JSON state of the listings.
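A minimal sketch of this tip, using only the standard library: it pulls the `__NEXT_DATA__` script out of an HTML string and parses it as JSON. The function name is made up for this example, and the path to the listings inside the JSON (`props.pageProps.jobs` in the sample) is an assumption; inspect the real payload to find where Arc stores them.

```python
import json
import re
from typing import Optional

# Matches <script ... id="__NEXT_DATA__" ...>JSON</script>, whatever the attribute order
NEXT_DATA_RE = re.compile(
    r'<script[^>]*\bid=["\']__NEXT_DATA__["\'][^>]*>(.*?)</script>',
    re.DOTALL,
)


def extract_next_data(html: str) -> Optional[dict]:
    """Return the parsed __NEXT_DATA__ JSON, or None if the tag is missing or invalid."""
    match = NEXT_DATA_RE.search(html)
    if match is None:
        return None
    try:
        return json.loads(match.group(1))
    except json.JSONDecodeError:
        return None


# Hypothetical page fragment for illustration only
sample_html = (
    '<html><body><script id="__NEXT_DATA__" type="application/json">'
    '{"props": {"pageProps": {"jobs": [{"title": "Rust Engineer"}]}}}'
    '</script></body></html>'
)
next_data = extract_next_data(sample_html)
```

Because the JSON layout can change between deployments, it is safer to look keys up with `.get()` and log when an expected key is absent.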

Use Residential Proxies

To avoid being flagged by Arc's security, use residential proxies that mimic real user traffic rather than datacenter IPs.
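For reference, the `requests` library accepts proxies as a mapping from URL scheme to proxy URL. The sketch below builds that mapping; the host, port, and credentials are placeholders for whatever your proxy provider issues, and the helper name is invented for this example.

```python
from urllib.parse import quote


def build_proxies(host: str, port: int, username: str, password: str) -> dict:
    """Return a proxies mapping in the shape the requests library accepts."""
    # Percent-encode credentials so characters like '@' or ':' do not break the URL
    auth = f"{quote(username, safe='')}:{quote(password, safe='')}"
    proxy_url = f"http://{auth}@{host}:{port}"
    return {"http": proxy_url, "https": proxy_url}


# Hypothetical provider details; replace with your own
proxies = build_proxies("proxy.example.com", 8000, "user", "p@ss:word")

# Usage with requests (not executed here):
#   requests.get("https://arc.dev/remote-jobs", proxies=proxies, timeout=30)
```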

Monitor XHR Requests

Check the browser's Network tab for internal API calls that Arc uses to load more jobs; these often provide cleaner data than the HTML.

Rotate Browser Fingerprints

Ensure your scraper alternates between different User-Agents and browser configurations so that repeated requests do not all share one identifiable fingerprint.

Implement Random Delays

Mimic human behavior by adding randomized wait times between page navigations to stay under the radar of rate-limiting systems.
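The two tips above can be sketched together: a randomized pause between navigations and a simple rotation of request headers. The delay bounds and the User-Agent strings are illustrative assumptions. Rotating the User-Agent header alone does not change deeper fingerprint signals such as canvas or WebGL, so treat this as a starting point only.

```python
import itertools
import random
import time
from typing import Callable, Dict, Iterator, List

# Example User-Agent strings; keep your own list current
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36",
]


def polite_pause(
    min_seconds: float = 2.0,
    max_seconds: float = 6.0,
    sleep: Callable[[float], None] = time.sleep,
) -> float:
    """Sleep for a random duration in [min_seconds, max_seconds] and return it."""
    delay = random.uniform(min_seconds, max_seconds)
    sleep(delay)
    return delay


def header_cycle(user_agents: List[str]) -> Iterator[Dict[str, str]]:
    """Yield request headers forever, rotating through the given User-Agents in order."""
    for user_agent in itertools.cycle(user_agents):
        yield {"User-Agent": user_agent}
```

A scraping loop would call `next(headers)` before each request and `polite_pause()` after it, where `headers = header_cycle(USER_AGENTS)`.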

Filter by Categories

Scraping specific sub-sections like '/remote-jobs/ai' is often more efficient and less likely to trigger site-wide protection than broad searches.



Related Web Scraping

How to Scrape Guru.com: A Comprehensive Web Scraping Guide

How to Scrape Upwork

How to Scrape Toptal | Toptal Web Scraper Guide

How to Scrape Freelancer.com: A Complete Technical Guide

How to Scrape Fiverr | Fiverr Web Scraper Guide

How to Scrape Indeed: 2025 Guide for Job Market Data

How to Scrape Charter Global | IT Services & Job Board Scraper

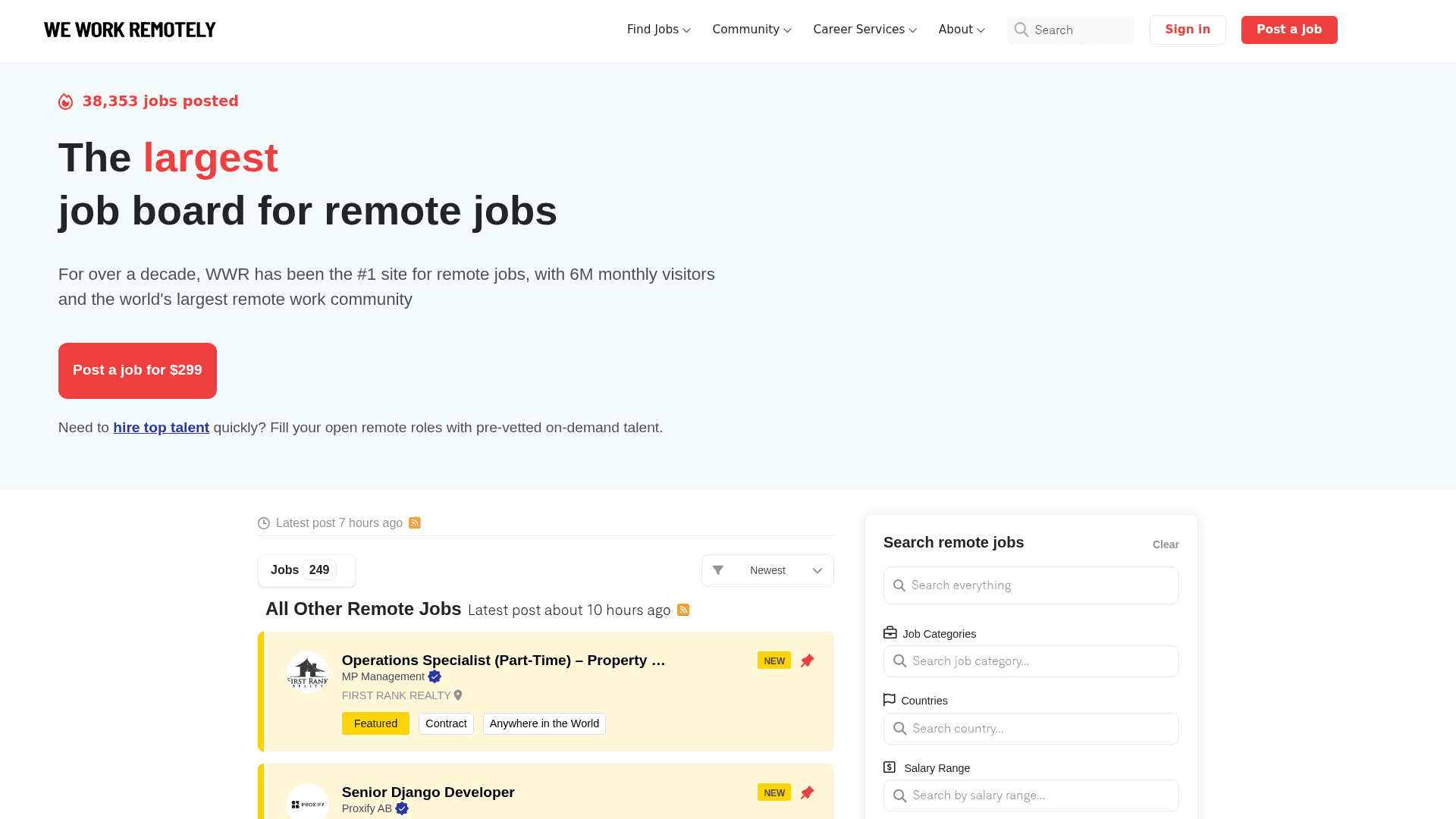

How to Scrape We Work Remotely: The Ultimate Guide
