How to Scrape Locations Hawaii | Locations Hawaii Web Scraper

Learn how to scrape rental listings, prices, unit specs, and availability from Locations Hawaii. Get real-time data for Honolulu real estate market analysis.

Anti-Bot Protection Detected

- Cloudflare: Enterprise-grade WAF and bot management. Uses JavaScript challenges, CAPTCHAs, and behavioral analysis. Requires browser automation with stealth settings.

- Rate Limiting: Limits requests per IP/session over time. Can be bypassed with rotating proxies, request delays, and distributed scraping.

- IP Blocking: Blocks known datacenter IPs and flagged addresses. Requires residential or mobile proxies to circumvent effectively.

- JavaScript Rendering Detection

About Locations Hawaii

Learn what Locations Hawaii offers and what valuable data can be extracted from it.

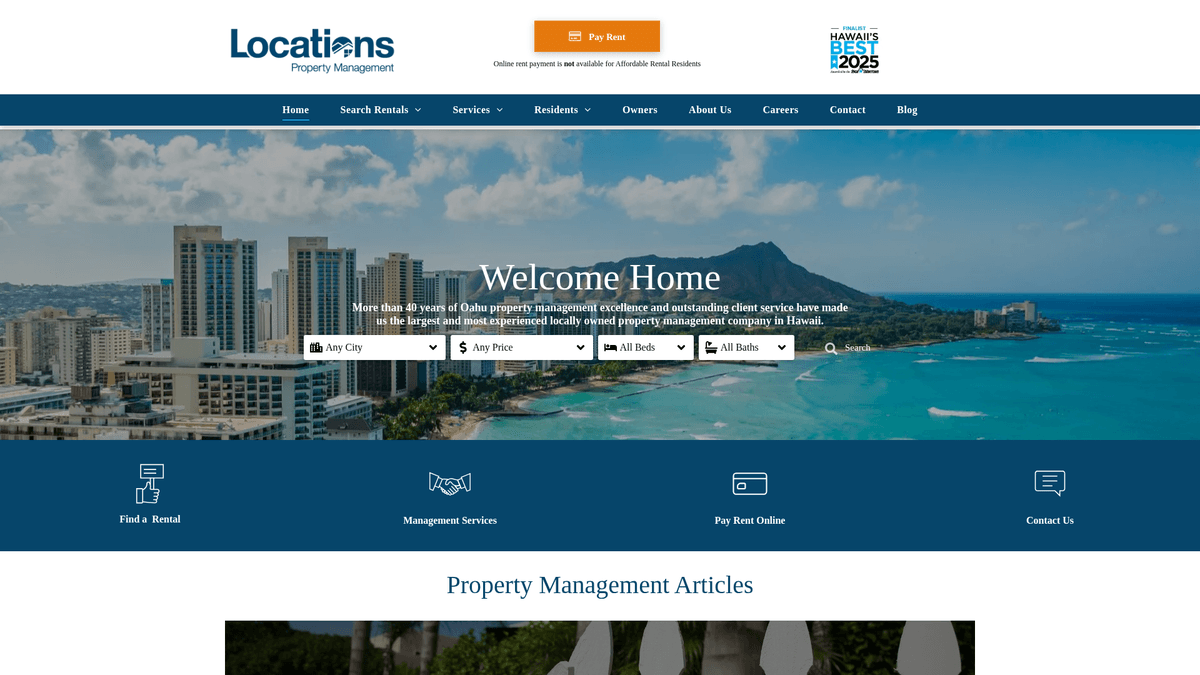

Premier Real Estate and Property Management in Honolulu

Locations Hawaii is one of Hawaii's largest and most trusted real estate firms, established in 1969. Based in Honolulu, they manage an extensive portfolio of residential, commercial, and affordable rental properties throughout the island of Oahu. Their platform serves as a primary hub for local renters to find vacancies in neighborhoods ranging from Waikiki to Kapolei.

AppFolio-Powered Data Structure

The website hosts highly structured data via the AppFolio Property Manager platform. Listings typically include granular details such as street addresses, monthly rent, security deposits, unit dimensions, and specific amenities. Because Locations Hawaii handles a significant share of the local market, their listings are often the first to go live, appearing here before hitting major national aggregators like Zillow.

Strategic Value for Data Collection

Scraping Locations Hawaii is particularly valuable for real estate investors and market analysts. The data allows for monitoring rental price trends, calculating vacancy rates in specific Honolulu zip codes, and performing automated rent benchmarking. By accessing this primary source directly, scrapers can maintain a more accurate view of the Hawaiian rental market than is possible through secondary data providers.

Why Scrape Locations Hawaii?

Discover the business value and use cases for extracting data from Locations Hawaii.

Real-Time Vacancy Tracking

The Hawaii rental market is extremely fast-paced; scraping allows you to monitor new vacancies the moment they are posted to the AppFolio backend.

Localized Market Intelligence

Gather granular data on rent per square foot across specific Honolulu neighborhoods to identify undervalued investment opportunities or competitive pricing.
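As a rough illustration of the rent-per-square-foot comparison, the sketch below groups scraped rows by neighborhood and takes the median ratio. The field names (`neighborhood`, `rent`, `sqft`) and the sample values are assumptions made for the example, not the site's actual schema.

```python
from collections import defaultdict
from statistics import median

# Hypothetical sample rows; real values would come from your scraper.
listings = [
    {"neighborhood": "Waikiki", "rent": 2400, "sqft": 600},
    {"neighborhood": "Waikiki", "rent": 3000, "sqft": 1000},
    {"neighborhood": "Kapolei", "rent": 2800, "sqft": 1400},
]

def median_rent_per_sqft(rows):
    """Return {neighborhood: median monthly rent per square foot}."""
    by_area = defaultdict(list)
    for row in rows:
        # Skip rows with missing or zero rent/square footage to avoid bad ratios.
        if row.get("rent") and row.get("sqft"):
            by_area[row["neighborhood"]].append(row["rent"] / row["sqft"])
    return {area: round(median(vals), 2) for area, vals in by_area.items()}

result = median_rent_per_sqft(listings)
print(result)
```

The median is used here rather than the mean so that a single unusually priced unit does not skew a small neighborhood sample.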

Affordable Housing Analysis

Locations Hawaii manages a significant portion of the island's affordable rental stock, making this the primary source for tracking subsidized housing availability.

Property Management Benchmarking

Analyze how one of Hawaii's largest property managers structures its leases, pet policies, and amenities to improve your own management strategies.

Lead Generation for Contractors

Identify upcoming vacancies to offer professional cleaning, staging, or maintenance services to property managers exactly when they need turnover support.

Scraping Challenges

Technical challenges you may encounter when scraping Locations Hawaii.

Dynamic AppFolio Rendering

Listings are injected via JavaScript from the AppFolio platform, meaning standard HTML parsers will return empty containers without browser rendering.

Cloudflare Bot Mitigation

The site uses Cloudflare to filter out automated traffic, so maintaining access requires realistic request headers and a consistent, browser-like fingerprint.

Inconsistent Data Schemas

Data fields like 'Pet Policy' or 'Utilities' are often contained within unstructured description blocks, requiring regex or AI to extract clean values.
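A minimal sketch of the regex route for one such field is shown below. The phrasings it matches are assumptions about how descriptions might be worded; real listings will likely need a broader pattern set, and ambiguous text is deliberately returned as `unknown` rather than guessed.

```python
import re

# Assumed phrasings; real descriptions vary and will need more patterns.
NO_PETS = re.compile(r"\bno\s+pets?\b|\bpets?\s+not\s+allowed\b", re.I)
PETS_OK = re.compile(
    r"\bpets?\s+(?:ok|okay|allowed|welcome|negotiable)\b|\bpet[-\s]friendly\b",
    re.I,
)

def pet_policy(description):
    """Classify a free-text description as 'no', 'yes', or 'unknown'."""
    # Check the negative pattern first so "no pets allowed" is not
    # misread as "pets allowed".
    if NO_PETS.search(description):
        return "no"
    if PETS_OK.search(description):
        return "yes"
    return "unknown"

print(pet_policy("Sorry, no pets allowed."))
print(pet_policy("Pet friendly building, water included."))
```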

IP Rate Limiting

Aggressive scraping of property detail pages can trigger temporary IP bans if requests are not properly distributed across rotating residential proxies.
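One common way to space out requests is exponential backoff with jitter, sketched below. The retry statuses, delay values, and the `fetch` callable (anything returning a `(status, body)` tuple) are assumptions for illustration; proxy rotation is not covered here.

```python
import random
import time

def backoff_delays(base=2.0, factor=2.0, max_delay=60.0, retries=5):
    """Yield randomized delays whose upper bound grows exponentially."""
    ceiling = base
    for _ in range(retries):
        # Full jitter: wait a random time between 0 and the current ceiling.
        yield random.uniform(0, min(ceiling, max_delay))
        ceiling *= factor

def fetch_with_backoff(fetch, url, retry_statuses=(429, 503), sleep=time.sleep):
    """Call fetch(url) -> (status, body), retrying on throttling statuses."""
    status, body = fetch(url)
    for wait in backoff_delays():
        if status not in retry_statuses:
            break
        sleep(wait)
        status, body = fetch(url)
    return status, body
```

The `sleep` parameter is injectable so the retry logic can be exercised in tests without actually waiting.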

Scrape Locations Hawaii with AI

No coding required. Extract data in minutes with AI-powered automation.

How It Works

Describe What You Need

Tell the AI what data you want to extract from Locations Hawaii. Just type it in plain language — no coding or selectors needed.

AI Extracts the Data

Our artificial intelligence navigates Locations Hawaii, handles dynamic content, and extracts exactly what you asked for.

Get Your Data

Receive clean, structured data ready to export as CSV, JSON, or send directly to your apps and workflows.

Why Use AI for Scraping

AI makes it easy to scrape Locations Hawaii without writing any code: describe the data you want in plain language, and the platform extracts it automatically.

- Headless Browser Automation: Automatio natively renders the JavaScript-heavy listing cards exactly as a human sees them, ensuring no data is missed by the AppFolio scripts.

- Visual Data Mapping: You can point and click to select complex data points like bathroom counts and availability dates without writing a single line of code.

- Smart Pagination Handling: Automatio can be configured to handle 'Load More' buttons and infinite scrolling to capture the entire property catalog in one automated session.

- Direct CRM Integration: Automatically send your scraped rental data to Google Sheets, or via webhooks to your internal real estate analysis tools, in real time.

No-Code Web Scrapers for Locations Hawaii

Point-and-click alternatives to AI-powered scraping

Several no-code tools like Browse.ai, Octoparse, Axiom, and ParseHub can help you scrape Locations Hawaii. These tools use visual interfaces to select elements, but they come with trade-offs compared to AI-powered solutions.

Typical Workflow with No-Code Tools

- Install browser extension or sign up for the platform

- Navigate to the target website and open the tool

- Point-and-click to select data elements you want to extract

- Configure CSS selectors for each data field

- Set up pagination rules to scrape multiple pages

- Handle CAPTCHAs (often requires manual solving)

- Configure scheduling for automated runs

- Export data to CSV, JSON, or connect via API

Common Challenges

- Learning curve: Understanding selectors and extraction logic takes time

- Selectors break: Website changes can break your entire workflow

- Dynamic content issues: JavaScript-heavy sites often require complex workarounds

- CAPTCHA limitations: Most tools require manual intervention for CAPTCHAs

- IP blocking: Aggressive scraping can get your IP banned

Code Examples

Python + Requests

    import requests
    from bs4 import BeautifulSoup

    # Locations Hawaii renders listings with JavaScript, so a plain request
    # may return empty templates. This example shows the parsing structure
    # once the listing markup is present in the HTML.
    url = 'https://www.locationsrentals.com/listings'
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36'
    }

    try:
        response = requests.get(url, headers=headers, timeout=30)
        response.raise_for_status()
        soup = BeautifulSoup(response.text, 'html.parser')

        # Target listing containers based on common AppFolio classes
        # (verify these selectors against the live page)
        listings = soup.select('.listing-item')
        for item in listings:
            title = item.select_one('.listing-title')
            rent = item.select_one('.listing-rent')
            # Skip cards that are missing either field instead of crashing
            if title is None or rent is None:
                continue
            print(f'Listing: {title.get_text(strip=True)} | Price: {rent.get_text(strip=True)}')
    except requests.RequestException as e:
        print(f'Error occurred: {e}')

When to Use

Best for static HTML pages where content is loaded server-side. The fastest and simplest approach when JavaScript rendering isn't required.

Advantages

- Fastest execution (no browser overhead)

- Lowest resource consumption

- Easy to parallelize with asyncio

- Great for APIs and static pages

Limitations

- Cannot execute JavaScript

- Fails on SPAs and dynamic content

- May struggle with complex anti-bot systems
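To illustrate the asyncio point, here is a small sketch that runs a blocking fetch function for several URLs with a cap on concurrency. The `fake_fetch` stand-in and the `?page=` paths are hypothetical; in practice the callable would wrap something like `requests.get`.

```python
import asyncio

async def fetch_all(urls, fetch, max_concurrency=3):
    """Run the blocking fetch(url) for each URL, a few at a time."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def worker(url):
        async with semaphore:
            # to_thread keeps a blocking call off the event loop (Python 3.9+).
            return await asyncio.to_thread(fetch, url)

    # gather returns results in the same order as the input URLs.
    return await asyncio.gather(*(worker(u) for u in urls))

def fake_fetch(url):
    # Stand-in for a real HTTP call so the sketch runs offline.
    return f"fetched {url}"

pages = asyncio.run(
    fetch_all(["/listings?page=1", "/listings?page=2"], fake_fetch)
)
print(pages)
```

Keeping `max_concurrency` low is a deliberate choice here: parallelism speeds up collection, but it also makes rate limits easier to trip.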

Python + Playwright

    from playwright.sync_api import sync_playwright

    def scrape_listings():
        with sync_playwright() as p:
            # Launch headless Chromium so the page's JavaScript runs
            browser = p.chromium.launch(headless=True)
            page = browser.new_page()
            page.goto('https://www.locationsrentals.com/listings')

            # Wait for the dynamic content to load via AppFolio components
            page.wait_for_selector('.listing-item')

            # Extract data from the rendered DOM
            listings = page.query_selector_all('.listing-item')
            for listing in listings:
                name = listing.query_selector('.listing-title')
                price = listing.query_selector('.listing-rent')
                # Skip cards that are missing either field
                if name is None or price is None:
                    continue
                print(f'Property: {name.inner_text()}, Rent: {price.inner_text()}')

            browser.close()

    scrape_listings()

Python + Scrapy

    import scrapy

    class LocationsHawaiiSpider(scrapy.Spider):
        name = 'locations_spider'
        start_urls = ['https://www.locationsrentals.com/listings']

        def parse(self, response):
            # Note: Scrapy requires a middleware like Splash or Selenium to render this JS site.
            for listing in response.css('.listing-item'):
                yield {
                    'title': listing.css('.listing-title::text').get(),
                    'price': listing.css('.listing-rent::text').get(),
                    'address': listing.css('.listing-address::text').get(),
                    'url': response.urljoin(listing.css('a::attr(href)').get())
                }

            # Follow the next-page link, if the page exposes one
            next_page = response.css('a.next_page::attr(href)').get()
            if next_page:
                yield response.follow(next_page, self.parse)

Node.js + Puppeteer

    const puppeteer = require('puppeteer');

    (async () => {
      const browser = await puppeteer.launch();
      const page = await browser.newPage();

      // Wait for network activity to settle so the dynamically injected listings have loaded
      await page.goto('https://www.locationsrentals.com/listings', { waitUntil: 'networkidle2' });

      const results = await page.evaluate(() => {
        const items = Array.from(document.querySelectorAll('.listing-item'));
        return items.map(item => ({
          title: item.querySelector('.listing-title')?.innerText,
          price: item.querySelector('.listing-rent')?.innerText,
          specs: item.querySelector('.listing-info')?.innerText
        }));
      });

      console.log(results);
      await browser.close();
    })();

What You Can Do With Locations Hawaii Data

Explore practical applications and insights from Locations Hawaii data.

Use Automatio to extract data from Locations Hawaii and build these applications without writing code.

- Rental Yield Calculator: Investors can use the data to calculate potential returns on similar properties by neighborhood.
  1. Scrape monthly rent and square footage for all Honolulu listings.
  2. Compare data against current property sale prices in the same area.
  3. Generate a neighborhood-by-neighborhood yield heatmap.

- B2B Cleaning Service Leads: Cleaning companies can identify properties that have just been listed as 'Available' to offer move-in/move-out services.
  1. Scrape the 'Available Date' field for all new listings daily.
  2. Filter for properties that are currently vacant or transitioning.
  3. Extract the assigned property manager's contact details for service outreach.

- Dynamic Price Monitoring: Local landlords can adjust their own rental prices based on real-time competitor data.
  1. Scrape active listings for 2-bedroom units in specific areas like Waikiki.
  2. Calculate the daily median price for these units.
  3. Set up alerts for when the market average moves by more than 5%.

- Historical Rent Analysis: Researchers can track how rent prices on Oahu have changed over the years for legislative or academic study.
  1. Set up a recurring scrape to capture data every month.
  2. Store data in a time-series database to track individual property price changes.
  3. Analyze the impact of local tourism fluctuations on residential rent prices.

- Relocation Portal Aggregator: Create a niche website specifically for military members or digital nomads moving to Oahu.
  1. Aggregate listings from Locations Hawaii and other local firms.
  2. Tag listings based on proximity to bases or coworking hubs.
  3. Provide a unified search interface with unique local metadata.
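The Dynamic Price Monitoring steps above could be sketched roughly as follows. The rent figures are invented sample data, and the sketch compares medians for the alert (the text mentions both a median and a market average), so treat the choice of statistic as an assumption.

```python
from statistics import median

def pct_change(previous, current):
    """Percentage change from previous to current."""
    return (current - previous) / previous * 100

def should_alert(previous_median, current_median, threshold_pct=5.0):
    """True when the median moved by more than threshold_pct in either direction."""
    return abs(pct_change(previous_median, current_median)) > threshold_pct

# Hypothetical daily snapshots of 2-bedroom rents in one area.
yesterday = [2500, 2600, 2700]
today = [2650, 2800, 2900]

prev_med, curr_med = median(yesterday), median(today)
print(prev_med, curr_med, should_alert(prev_med, curr_med))
```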

Pro Tips

Expert advice for successfully extracting data from Locations Hawaii.

Monitor Network XHR Requests

Check the browser's Network tab for JSON responses from the AppFolio API, which often contain cleaner, structured data than the visible HTML.
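The same inspection can be automated. The sketch below uses Playwright's `response` event to collect any JSON bodies a page loads; it makes no assumption about which endpoints exist, so you would still need to review the captured URLs to see whether listing data is among them.

```python
def is_json_response(content_type):
    """True when a Content-Type header value indicates a JSON body."""
    return "json" in (content_type or "").lower()

def capture_json(url, timeout_ms=30000):
    """Load url in headless Chromium and collect parsed JSON response bodies."""
    # Imported lazily so the helper above works without Playwright installed.
    from playwright.sync_api import sync_playwright

    captured = []

    def on_response(response):
        if is_json_response(response.headers.get("content-type")):
            try:
                captured.append({"url": response.url, "data": response.json()})
            except Exception:
                pass  # Body was not valid JSON or was no longer available.

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        page = browser.new_page()
        page.on("response", on_response)
        page.goto(url, wait_until="networkidle", timeout=timeout_ms)
        browser.close()
    return captured
```

Calling `capture_json('https://www.locationsrentals.com/listings')` would return a list of `{"url", "data"}` dictionaries, possibly empty if the page loads no JSON.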

Use Residential US Proxies

Using Hawaii-based or general US residential proxies can reduce the risk of being flagged by Cloudflare's geo-fencing filters.

Scrape Meta Tags for Summary Data

Listing pages often include structured metadata (Schema.org or OpenGraph) that provides price and location data in a highly reliable format.
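For the Schema.org case, structured data is usually embedded as JSON-LD in a `script` tag. The sketch below pulls those blocks out with the standard library only; the sample markup is invented for the example, and whether a given listing page actually includes JSON-LD needs to be checked on the live site.

```python
import json
from html.parser import HTMLParser

class JsonLdParser(HTMLParser):
    """Collect parsed contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self.in_jsonld = False
        self.buffer = []
        self.items = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self.in_jsonld = True
            self.buffer = []

    def handle_data(self, data):
        if self.in_jsonld:
            self.buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self.in_jsonld:
            self.in_jsonld = False
            try:
                self.items.append(json.loads("".join(self.buffer)))
            except json.JSONDecodeError:
                pass  # Skip malformed blocks rather than failing the page.

def extract_jsonld(html):
    parser = JsonLdParser()
    parser.feed(html)
    return parser.items

# Invented sample markup, not copied from the site.
sample = """<html><head>
<script type="application/ld+json">
{"@type": "Apartment", "name": "Sample Unit",
 "address": {"addressLocality": "Honolulu"}}
</script></head><body></body></html>"""

items = extract_jsonld(sample)
print(items)
```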

Implement Staggered Scheduling

Instead of one massive daily scrape, run smaller, frequent updates every few hours to catch new listings without putting excessive load on the server.
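A staggered schedule can be as simple as evenly spaced run times with a little random offset. The three-hour interval and ten-minute jitter below are illustrative assumptions (the jitter is an addition, not something the tip prescribes).

```python
import random
from datetime import datetime, timedelta

def staggered_runs(start, interval_hours=3, runs=8, jitter_minutes=10, seed=None):
    """Return run times spaced interval_hours apart, each nudged by random jitter."""
    rng = random.Random(seed)
    times = []
    for i in range(runs):
        base = start + timedelta(hours=interval_hours * i)
        # Shift each run by up to +/- jitter_minutes so runs avoid a fixed clock pattern.
        offset = timedelta(minutes=rng.uniform(-jitter_minutes, jitter_minutes))
        times.append(base + offset)
    return times

for run_at in staggered_runs(datetime(2024, 1, 1), seed=1):
    print(run_at.isoformat(timespec="minutes"))
```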

Capture the Availability Date

Always prioritize the availability date field to distinguish between immediate vacancies and properties that are still occupied by current tenants.
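As a sketch of that distinction, the function below classifies an availability string as vacant, upcoming, or unknown. The accepted formats ("Available Now", "Available 3/15/24", "Available: 02/01/2024") are assumptions about how the field might be written; adjust them to what the listings actually show.

```python
from datetime import date, datetime

def parse_availability(text, today):
    """Return (status, date_or_None); status is 'vacant', 'upcoming', or 'unknown'."""
    cleaned = text.strip().lower().replace("available", "").strip(" :")
    if cleaned in ("now", "immediately"):
        return "vacant", None
    # Try a two-digit year first, then a four-digit year.
    for fmt in ("%m/%d/%y", "%m/%d/%Y"):
        try:
            when = datetime.strptime(cleaned, fmt).date()
        except ValueError:
            continue
        # A date on or before today means the unit should already be empty.
        return ("vacant", when) if when <= today else ("upcoming", when)
    return "unknown", None

reference_day = date(2024, 3, 1)
print(parse_availability("Available Now", reference_day))
print(parse_availability("Available 3/15/24", reference_day))
```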

Testimonials

What Our Users Say

Join thousands of satisfied users who have transformed their workflow

Jonathan Kogan

Co-Founder/CEO, rpatools.io

Automatio is one of the most used for RPA Tools both internally and externally. It saves us countless hours of work and we realized this could do the same for other startups and so we choose Automatio for most of our automation needs.

Mohammed Ibrahim

CEO, qannas.pro

I have used many tools over the past 5 years, Automatio is the Jack of All trades.. !! it could be your scraping bot in the morning and then it becomes your VA by the noon and in the evening it does your automations.. its amazing!

Ben Bressington

CTO, AiChatSolutions

Automatio is fantastic and simple to use to extract data from any website. This allowed me to replace a developer and do tasks myself as they only take a few minutes to setup and forget about it. Automatio is a game changer!

Sarah Chen

Head of Growth, ScaleUp Labs

We've tried dozens of automation tools, but Automatio stands out for its flexibility and ease of use. Our team productivity increased by 40% within the first month of adoption.

David Park

Founder, DataDriven.io

The AI-powered features in Automatio are incredible. It understands context and adapts to changes in websites automatically. No more broken scrapers!

Emily Rodriguez

Marketing Director, GrowthMetrics

Automatio transformed our lead generation process. What used to take our team days now happens automatically in minutes. The ROI is incredible.

Related Web Scraping

How to Scrape Century 21 Property Listings

How to Scrape Homes.com: Real Estate Data Extraction Guide

How to Scrape Century 21: A Technical Real Estate Guide

How to Scrape HotPads: A Complete Guide to Extracting Rental Data

How to Scrape Sacramento Delta Property Management

How to Scrape LivePiazza: Philadelphia Real Estate Scraper

How to Scrape RE/MAX (remax.com) Real Estate Listings

How to Scrape Apartments Near Me | Real Estate Data Scraper

Frequently Asked Questions

Find answers to common questions about Locations Hawaii